Overview

Gait recognition is an emerging biometric modality. Compared with face or fingerprint recognition, it is more adaptable to different environments and harder to spoof.

System Architecture

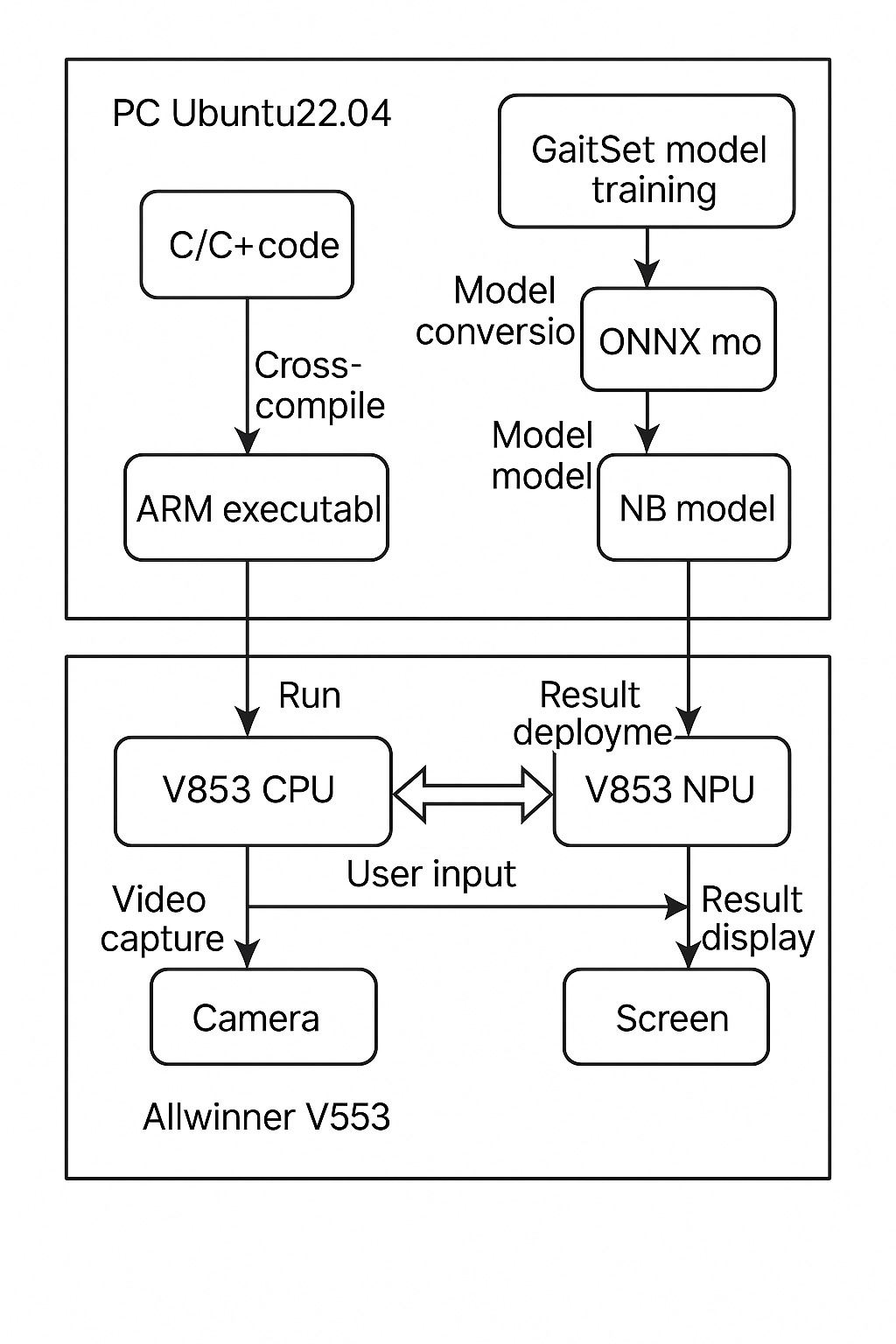

The gait recognition system in this work runs on an Allwinner V853 development board. It uses the board's peripherals, CPU, and NPU to perform gait recognition in real time on an embedded platform.

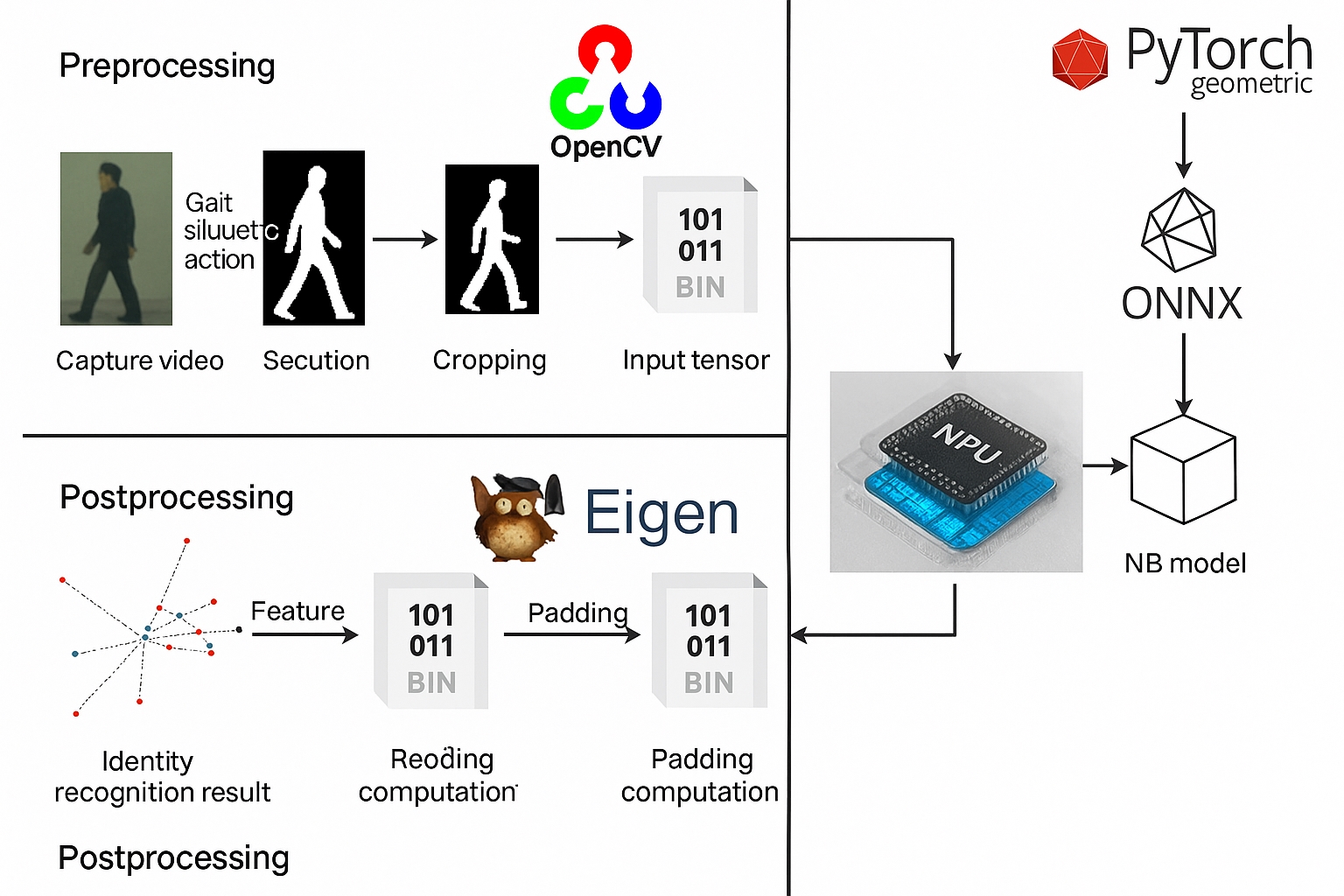

Training is performed on a PC using PyTorch. A model conversion tool then converts the trained PyTorch model into an NB model that can run on the V853 NPU, where inference is executed. At runtime, the system is organized into four stages: preprocessing, model inference, postprocessing, and UI display.

Figure: Gait recognition system architecture diagram

Onboard Processing

Most deep learning operators run on the onboard NPU, while a small subset executes on the onboard CPU. Preprocessing and postprocessing also run on the CPU, using OpenCV and Eigen. Preprocessing extracts gait silhouettes from video captured by the onboard camera, crops and concatenates them, and prepares the input tensor for the NPU model. Postprocessing performs the remaining computation on the NPU output and then compares features to verify identity. The UI is a Qt application.
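The feature comparison step in postprocessing can be illustrated with a minimal sketch. The article does not specify the distance metric or decision rule, so the Euclidean distance, the `threshold` parameter, and the function names below are assumptions chosen for illustration. The on-board version uses Eigen in C++; Python is used here only for brevity.

```python
import math

def euclidean(a, b):
    """Euclidean distance between two equal-length feature vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def identify(probe, gallery, threshold):
    """Return (identity, distance) of the nearest enrolled feature.

    `gallery` maps identity -> feature vector. If even the nearest
    entry is farther than `threshold` (an assumed, tunable value),
    the probe is rejected and the identity is None.
    """
    best_id, best_dist = None, math.inf
    for identity, feature in gallery.items():
        d = euclidean(probe, feature)
        if d < best_dist:
            best_id, best_dist = identity, d
    if best_dist > threshold:
        return None, best_dist
    return best_id, best_dist

# Toy 3-dimensional features; real gait features are much longer.
gallery = {"alice": [0.0, 1.0, 0.0], "bob": [1.0, 0.0, 0.0]}
print(identify([0.9, 0.1, 0.0], gallery, threshold=0.5))
```

A threshold turns nearest-neighbor search into verification: an unknown walker is rejected instead of being assigned to the closest enrolled subject.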

Dataset and Evaluation Protocol

Evaluation used the CASIA-B dataset, a large multi-view gait dataset containing 124 subjects. Each subject has 10 walking sequences: 6 normal walking sequences (NM), 2 with a long coat (CL), and 2 with a backpack (BG). Each walking sequence is recorded from 11 viewing angles (0° to 180° in 18° steps). The split used 74 subjects for training and 50 subjects for testing.
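As a rough sketch of this layout, the snippet below enumerates the sequence types and views and splits the subject IDs. The `nm-01`-style labels follow the naming commonly used for CASIA-B. Assigning the first 74 IDs to training is an assumption, since the article gives only the counts, and the function names are hypothetical.

```python
# Sequences per walking condition, as described above.
CONDITIONS = {"nm": 6, "cl": 2, "bg": 2}
# 11 viewing angles: 0, 18, ..., 180 degrees.
VIEWS = list(range(0, 181, 18))

def sequence_types():
    """Return labels such as 'nm-01' ... 'nm-06', 'cl-01', 'bg-02'."""
    return [f"{cond}-{i:02d}"
            for cond, count in CONDITIONS.items()
            for i in range(1, count + 1)]

def split_subjects(num_subjects=124, num_train=74):
    """Split zero-padded subject IDs into (train, test) lists.

    Assumption: the first `num_train` IDs form the training set.
    """
    ids = [f"{i:03d}" for i in range(1, num_subjects + 1)]
    return ids[:num_train], ids[num_train:]

train_ids, test_ids = split_subjects()
samples_per_subject = len(sequence_types()) * len(VIEWS)
print(len(train_ids), len(test_ids), samples_per_subject)  # prints: 74 50 110
```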

Figure: Overall gait recognition algorithm flow

In the test set, the first four normal walking sequences of each subject were used as the gallery. Three probe sets were evaluated to study system performance under different appearance conditions: the last two normal walking sequences, the two coat-wearing sequences, and the two backpack-wearing sequences. Recognition accuracy was measured separately for each viewing angle to quantify how performance depends on the angle.
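The protocol above can be sketched as a per-view rank-1 accuracy computation. This is an illustration under stated assumptions, not the authors' evaluation code: the `(subject, seq_type, view, feature)` sample layout and the function name are hypothetical, nearest-neighbor matching uses Euclidean distance, and the gallery contains all views (the article does not say whether same-view gallery samples were excluded).

```python
import math
from collections import defaultdict

GALLERY_TYPES = {"nm-01", "nm-02", "nm-03", "nm-04"}
PROBE_SETS = {
    "NM": {"nm-05", "nm-06"},
    "CL": {"cl-01", "cl-02"},
    "BG": {"bg-01", "bg-02"},
}

def rank1_by_view(samples, probe_types):
    """Rank-1 accuracy per probe viewing angle.

    `samples` is a list of (subject, seq_type, view, feature) tuples.
    A probe counts as correct when its nearest gallery feature
    belongs to the same subject.
    """
    gallery = [s for s in samples if s[1] in GALLERY_TYPES]
    hits, totals = defaultdict(int), defaultdict(int)
    for subject, seq_type, view, feature in samples:
        if seq_type not in probe_types:
            continue
        nearest = min(gallery, key=lambda g: math.dist(feature, g[3]))
        totals[view] += 1
        hits[view] += int(nearest[0] == subject)
    return {view: hits[view] / totals[view] for view in totals}

# Toy data with 2-dimensional features.
samples = [
    ("075", "nm-01", 0, [0.0, 0.0]),
    ("076", "nm-01", 0, [1.0, 1.0]),
    ("075", "nm-05", 0, [0.1, 0.0]),   # matches 075: correct
    ("076", "nm-05", 0, [0.2, 0.1]),   # nearest is 075: wrong
    ("076", "bg-01", 90, [0.9, 1.0]),  # matches 076: correct
]
for name, types in PROBE_SETS.items():
    print(name, rank1_by_view(samples, types))
```

Reporting accuracy per view rather than as one average makes it visible when a model does well on some viewing angles and poorly on others.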

Accuracy Results

The NB model used in the GaitCircle implementation was compared with the GaitSet model under identical conditions. Results are summarized in the following table.

Real-Time Performance

Timing was measured for single-person gait recognition: preprocessing averaged 58 ms per frame, model inference averaged 77 ms, and postprocessing averaged 0.73 s.
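To see how these figures combine, here is a small back-of-the-envelope sketch. It assumes the three stages run sequentially and that inference and postprocessing run once per gait sequence, while preprocessing runs once per frame. The article does not state either point, and the sequence length `num_frames` is a hypothetical parameter.

```python
PREPROCESS_MS_PER_FRAME = 58.0  # measured average, per frame
INFERENCE_MS = 77.0             # measured average
POSTPROCESS_MS = 730.0          # measured average of 0.73 s

def end_to_end_ms(num_frames):
    """Estimated latency for one recognition over `num_frames` frames.

    Assumes sequential stages, with inference and postprocessing
    executed once per sequence.
    """
    return num_frames * PREPROCESS_MS_PER_FRAME + INFERENCE_MS + POSTPROCESS_MS

def max_preprocess_fps():
    """Upper bound on frame rate if preprocessing alone is the bottleneck."""
    return 1000.0 / PREPROCESS_MS_PER_FRAME

print(f"{end_to_end_ms(30):.0f} ms for a 30-frame sequence")
print(f"{max_preprocess_fps():.1f} frames/s preprocessing ceiling")
```

Under these assumptions, per-frame preprocessing dominates the total for longer sequences, so it would be the first stage to optimize.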

In real-time recognition tests, the development board camera captured video and the system output pedestrian identities in real time. To start the gait recognition program automatically at boot under Tina Linux, its start command was added to /etc/profile.

ALLPCB
