AR/VR/XR and the Metaverse

"AR/VR/XR x Metaverse" scenarios are driving changes in immersive interaction modes. Virtual reality (VR) creates immersive virtual worlds with multi-sensory experiences including three-dimensional vision, touch, smell, and hearing. Augmented reality (AR) overlays digital content such as text, images, 3D models, and audio/video on the real world via display terminals, enhancing real-world information. Mixed reality (MR) combines VR and AR technologies and scenes to enable real-time, dynamic interaction and feedback among the real world, the virtual world, and users, supporting richer spatial experiences.

Technologies that merge virtual and real environments are collectively called extended reality, or XR. With immersive interaction models and ongoing innovation, XR is changing how spaces and experiences are constructed. China’s 14th Five-Year Plan identifies virtual reality and augmented reality as key sectors in the digital economy.

The metaverse concept further blurs the boundary between physical and virtual spaces. The metaverse integrates XR, brain-computer interfaces, blockchain, cloud computing, digital twins, and artificial intelligence, shifting focus from pure technology to multi-space construction and novel interaction paradigms. XR- and metaverse-based scenarios are increasingly applied across industry, education, healthcare, entertainment, and services, such as virtual operation training for complex industrial equipment, immersive classroom experiences, remote assistance in surgery, immersive gaming, historical scene reconstructions, and virtual apparel try-on.

Sensing as a Primary Human-Computer Interaction Channel in the Metaverse

In metaverse spaces that blend virtual and real worlds, gesture interaction, voice, and brain-computer interfaces form the most direct interaction modalities and could become primary human-computer interaction channels for next-generation intelligent networks.

Within XR-created multi-modal scenarios, display devices provide visual links to virtual content; seating and pedals provide motion perception; environmental effects such as scent or mist can add olfactory and tactile cues; and interaction modes such as gesture recognition, voice recognition, and brain-computer interfaces enable continuous control between user and virtual environment.

Gesture recognition is an important sensing and interaction method in XR due to its relative maturity and interaction diversity. It offers immediate, efficient, three-dimensional, and sustained sensory interaction, and plays an increasing role in XR scene control and interaction.

Gesture Recognition in Immersive Experiences

Hands are natural control organs. In virtual environments, hand-eye coordination is an intuitive control method that requires no additional hardware. Gesture recognition combined with wearable haptics can provide more natural and convincing control experiences, enabling interactions such as picking up a virtual cup, deforming an object, or opening a door.

Gesture recognition can establish a direct mapping between hand movements and cognitive intent, coordinating visual, tactile, and auditory perception to create richer, more realistic immersive experiences. This allows creation of detailed and imaginative interactive content and scenarios.

Gesture Interaction Implementations

Current gesture recognition approaches include bare-hand vision-based recognition, haptic controllers, haptic gloves, and electromyography wristbands. Bare-hand recognition uses camera-based multi-point visual analysis to detect hand position and pose; headset-mounted bare-hand solutions are already deployed and are moving toward higher accuracy. Haptic controllers add simple tactile feedback such as vibration or force, while haptic gloves offer dense actuator points for feedback, improving accuracy, fluidity, and fine tactile perception.

For tracking and positioning, inside-out solutions that combine cameras and inertial measurement units (IMUs) enable six-degree-of-freedom (6DoF) tracking, covering three translational and three rotational axes, and support both micro-movements and whole-body motion. These inside-out systems are widely used in consumer standalone VR headsets.
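To make the six degrees of freedom concrete, the following is a minimal sketch of a pose with three translations and three rotations applied to a point. The `Pose6DoF` class, its field names, and the yaw-pitch-roll (Z-Y-X) rotation order are illustrative assumptions, not the representation used by any particular headset.

```python
import math
from dataclasses import dataclass


@dataclass
class Pose6DoF:
    """Hypothetical 6DoF pose: three translations plus three rotations."""
    x: float      # translation along x, metres
    y: float      # translation along y, metres
    z: float      # translation along z, metres
    roll: float   # rotation about x, radians
    pitch: float  # rotation about y, radians
    yaw: float    # rotation about z, radians

    def rotation_matrix(self):
        """Return R = Rz(yaw) * Ry(pitch) * Rx(roll) as a 3x3 nested list."""
        cr, sr = math.cos(self.roll), math.sin(self.roll)
        cp, sp = math.cos(self.pitch), math.sin(self.pitch)
        cy, sy = math.cos(self.yaw), math.sin(self.yaw)
        return [
            [cy * cp, cy * sp * sr - sy * cr, cy * sp * cr + sy * sr],
            [sy * cp, sy * sp * sr + cy * cr, sy * sp * cr - cy * sr],
            [-sp, cp * sr, cp * cr],
        ]

    def transform(self, point):
        """Rotate a 3D point, then translate it by the pose offset."""
        r = self.rotation_matrix()
        px, py, pz = point
        offset = (self.x, self.y, self.z)
        return tuple(
            r[i][0] * px + r[i][1] * py + r[i][2] * pz + offset[i]
            for i in range(3)
        )


# Example: a headset moved 1 m along x and turned 90 degrees about z.
pose = Pose6DoF(1.0, 0.0, 0.0, 0.0, 0.0, math.pi / 2)
moved = pose.transform((1.0, 0.0, 0.0))
```

Production tracking stacks usually store rotation as a quaternion to avoid gimbal lock; Euler angles are used here only because they map directly onto the "three rotations" in the text.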

High-perception gesture recognition is maturing and enabling broader deployment of gesture-based interaction in XR scenarios.

Gesture Recognition Chip Technology

In XR scenarios such as VR, AR, and MR, sensing and interaction extend beyond 2D screens. Bare-hand gesture recognition requires coordinated sensor, chip, and algorithm stacks. Sensors must offer higher accuracy, faster response, wider coverage, smaller form factor, and competitive cost. Algorithms need continual improvement with higher-quality datasets. Chip computing power must support complex inference workloads.

Multi-view imaging chips become mainstream

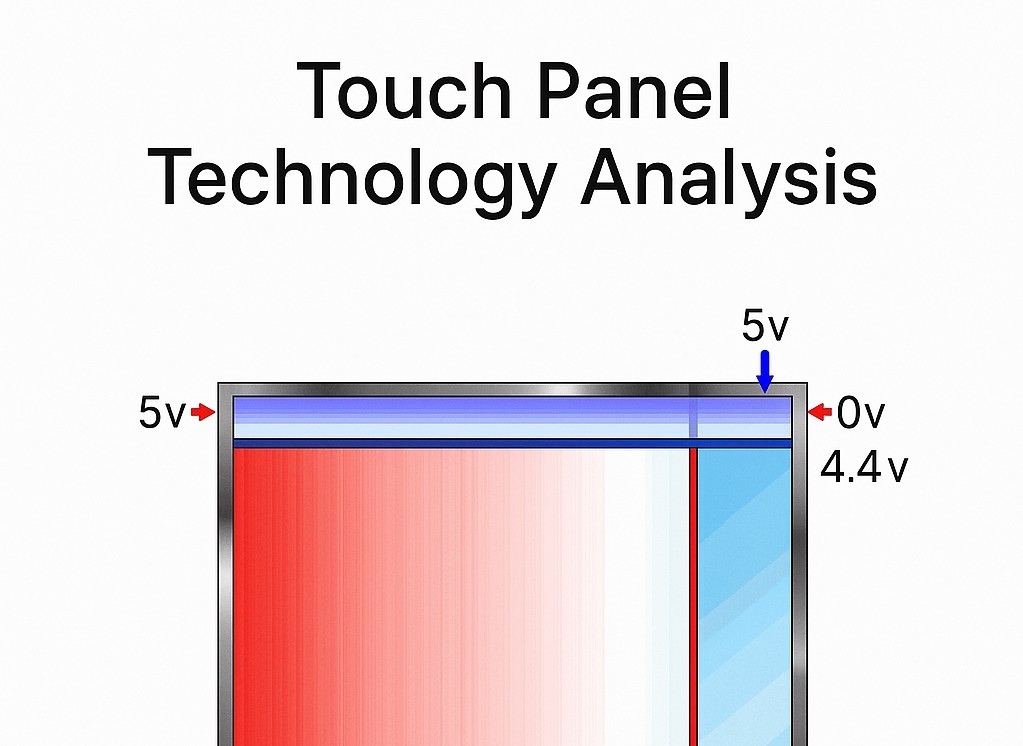

Gesture recognition primarily involves tracking hand motion and inferring hand position and pose. Depending on hardware, three main chip approaches are used:

- Structured light: projects known light patterns onto the scene and uses algorithms to compute depth from their deformation and reconstruct the 3D scene. Early representative product: Microsoft Kinect. Depth computation is demanding, and the usable recognition range is limited.

- Time-of-flight (ToF): emits light and measures photon flight time via CMOS sensors to estimate depth. Representative product: Intel 3D cameras with gesture capabilities.

- Multi-view imaging: uses two or more ordinary cameras to capture simultaneous images and compute depth from differences, producing 3D images.
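The depth relations behind the time-of-flight and multi-view approaches above can be sketched with two textbook formulas: ToF depth is half the round-trip distance travelled by light, and depth for a rectified two-camera pair is focal length times baseline divided by disparity. The function and parameter names are illustrative assumptions, and real devices add calibration, filtering, and per-pixel processing that this sketch omits.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0


def tof_depth_m(round_trip_s: float) -> float:
    """Depth from time of flight: light travels to the target and back."""
    if round_trip_s < 0:
        raise ValueError("round-trip time must be non-negative")
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2.0


def stereo_depth_m(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth for a rectified stereo pair: Z = f * B / d.

    focal_px     focal length in pixels
    baseline_m   distance between the two camera centres, metres
    disparity_px horizontal pixel shift of the same point between views
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a finite depth")
    return focal_px * baseline_m / disparity_px


# A hand feature seen 60 px apart by two cameras 6 cm apart (f = 500 px)
# lies roughly half a metre from the headset.
hand_depth = stereo_depth_m(focal_px=500.0, baseline_m=0.06, disparity_px=60.0)
```

Because depth is inversely proportional to disparity, stereo depth resolution degrades with distance, which suits hand tracking since hands stay within arm's reach of the cameras.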

Compared with dedicated depth cameras, solutions based on one or multiple ordinary cameras may become the mainstream hand-tracking mode for head-mounted devices when balancing cost, technical complexity, and recognition accuracy.

Requirements for high-perception gesture recognition

Multi-view imaging-based gesture systems perform background subtraction, motion detection and thresholding, contour extraction, and hand identification including left/right differentiation. They track 21 or 26 keypoints per hand in real time to determine hand position and gesture semantics, and they compute depth from multi-camera image differences to form 3D representations. Gesture information is mapped to interface targeting, selection, and control commands.
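The last two stages, turning tracked keypoints into gesture semantics and then into interface commands, can be sketched as follows. This is a minimal illustration that assumes a 21-keypoint layout in which index 0 is the wrist, 4 the thumb tip, 8 the index fingertip, and 9 the base of the middle finger (a common convention, though not one the text specifies). The 0.25 pinch threshold and the command names are hypothetical.

```python
import math

# Assumed keypoint indices in a 21-point hand layout.
WRIST, THUMB_TIP, INDEX_TIP, MIDDLE_BASE = 0, 4, 8, 9

# Hypothetical mapping from gesture semantics to interface commands.
COMMANDS = {"pinch": "select", "open": "point"}


def classify_gesture(keypoints, pinch_ratio=0.25):
    """Classify a hand as 'pinch' or 'open' from 21 (x, y, z) keypoints.

    The thumb-to-index distance is divided by a hand-size reference
    (wrist to middle-finger base) so the test is independent of how far
    the hand is from the cameras.
    """
    if len(keypoints) != 21:
        raise ValueError("expected 21 keypoints")
    hand_size = math.dist(keypoints[WRIST], keypoints[MIDDLE_BASE])
    if hand_size == 0:
        return "unknown"
    gap = math.dist(keypoints[THUMB_TIP], keypoints[INDEX_TIP]) / hand_size
    return "pinch" if gap < pinch_ratio else "open"


def gesture_to_command(keypoints):
    """Map the recognised gesture to a control command, or 'none'."""
    return COMMANDS.get(classify_gesture(keypoints), "none")


# Example: build a synthetic hand whose thumb and index tips nearly touch.
hand = [(0.0, 0.0, 0.0)] * 21
hand[MIDDLE_BASE] = (0.0, 0.10, 0.0)
hand[THUMB_TIP] = (0.05, 0.12, 0.0)
hand[INDEX_TIP] = (0.051, 0.121, 0.0)
command = gesture_to_command(hand)
```

A deployed system would typically smooth the classification over several frames and use a learned classifier rather than a single distance threshold; the rule here only shows where keypoints, semantics, and commands connect.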

High-perception performance requires high-quality hand models that predict 3D joints, high-precision training datasets, and deep learning inference capable of handling complex hand motions and resisting environmental interference. Achieving this requires efficient compute resources and places strict constraints on chip power, latency, and cost.

Compatibility with SLAM cameras

As inside-out tracking becomes mainstream, simultaneous localization and mapping (SLAM) is increasingly applied in XR. VR headsets, standalone mobile VR devices, and AR glasses widely integrate SLAM. SLAM cameras typically use fisheye or wide-angle grayscale sensors; compared with RGB cameras, SLAM cameras often offer higher gesture recognition precision and good compatibility.

Devices with SLAM can reuse their grayscale SLAM cameras for gesture recognition, maintaining recognition precision while avoiding the cost and power burden of additional camera hardware. SLAM and gesture recognition can run concurrently within a constrained CPU budget. Analog computing architectures and near-sensor processing can further improve energy efficiency by reducing data movement and by pre-processing sensor data before it is fully converted to the digital domain.

ALLPCB
