Introduction

Things have been intense recently, so I will start with a quotation from Marx's political economy to set the tone: "Productive forces determine relations of production." Applied globally: as productive forces develop, new relations of production emerge, and this trend underlies globalization. Opposition to globalization is often a conservative reflex that does not change the underlying economic forces.

5G

You are in the right place; the real article begins here.

Electromagnetic Waves

To understand 5G, some knowledge of electromagnetic waves is necessary. Question: Can a cactus shield you from computer radiation? If you already know the answer, skip to the latter part of the article; the following is written for readers who want the basics.

In daily life, apart from particles such as electrons, most of the radiation and signals we encounter are electromagnetic waves: infrared, ultraviolet, sunlight, electric lighting, Wi-Fi signals, mobile phone signals, computer radiation, the gamma rays in nuclear radiation, and so on. Any wave can be characterized by three parameters: propagation speed, wavelength, and amplitude. For electromagnetic waves the speed in vacuum is fixed at the speed of light, so the key parameters are wavelength (or, equivalently, frequency) and amplitude. Of these, frequency is especially important.

As a rule of thumb, the higher the frequency, the shorter the wavelength, the higher the energy per photon, the faster the attenuation, the poorer the penetration, the weaker the diffraction around obstacles, and the greater the potential harm to biological tissue. With this rule in mind, let us step through the spectrum.

Very long electromagnetic waves can have wavelengths up to 100 million meters, with frequencies around 3 Hz—three cycles per second. If such a frequency were used for communication, a single sentence could take months to transmit.
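The link between the wavelength and frequency figures used throughout this section is wavelength = speed of light / frequency. A minimal sketch of that conversion follows; the function name `wavelength_m` and the sample frequencies are chosen here for illustration only.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458  # speed of light in vacuum


def wavelength_m(frequency_hz: float) -> float:
    """Return the vacuum wavelength in meters for a frequency in hertz."""
    if frequency_hz <= 0:
        raise ValueError("frequency must be positive")
    return SPEED_OF_LIGHT_M_PER_S / frequency_hz


# Illustrative frequencies spanning the spectrum discussed in the article.
for label, freq in [("3 Hz", 3.0), ("800 MHz", 800e6), ("28 GHz", 28e9)]:
    print(f"{label}: {wavelength_m(freq):.3g} m")
```

At 3 Hz the result is roughly 100 million meters, matching the figure above, while 28 GHz comes out at about one centimeter.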

Slightly shorter waves with wavelengths of tens of thousands of meters are much more reliable for communication: terrain and large obstacles are less of a problem, and these waves can even penetrate tens of meters into seawater (though seawater is conductive and strongly attenuates many electromagnetic waves). However, their low frequency limits data throughput. Extremely low-rate but robust applications, such as one-way commands from shore to submerged submarines, use these frequencies.

At wavelengths from hundreds of meters down to a few meters, frequencies are in the MHz range. These frequencies can carry a reasonable amount of information and still travel hundreds of kilometers, which is why AM/FM broadcasting, shortwave radio, and amateur radio operate in these bands.

As a practical tip: if you are stranded on an island and see an aircraft, use 121.5 MHz to send an emergency call; this is a civil emergency frequency. There is also a military emergency frequency at 243 MHz. These frequencies are unencrypted and public. There have been incidents where military aircraft communications were recorded by radio enthusiasts and posted online, which raised concerns about the security of such channels.

When wavelengths are in the 1 meter to 1 centimeter range, interesting things happen. Although attenuation becomes noticeable, signals can still cover tens to hundreds of kilometers. Frequencies in the GHz range can carry large amounts of information, making practical two-way voice, data, and encrypted communications feasible. This band covers many cellular generations, satellite communications, and radar systems and is commonly referred to as microwave communication.

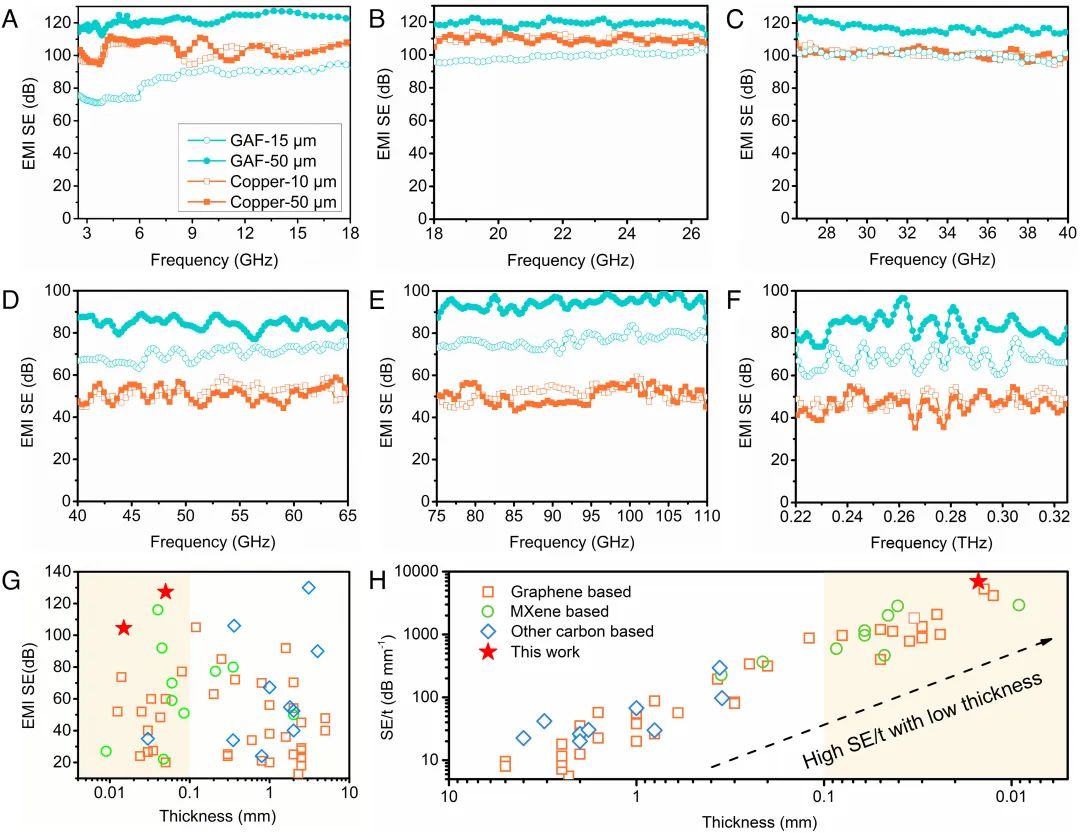

At millimeter wavelengths, signals do not travel far. Millimeter waves are less prone to diffraction, and they are more easily absorbed or reflected by surrounding materials. Penetration is poor, which makes such frequencies less useful for long-range communication but suitable for applications like missile guidance radar and microwave heating. However, frequencies above 30 GHz offer very high data capacity, which motivated their adoption in 5G systems.
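One way to see why higher frequencies cover less distance is the standard free-space path loss formula, 20·log10(4πdf/c). The sketch below is a simplified model: it assumes isotropic antennas and ignores atmospheric absorption, rain, and blockage, all of which make millimeter waves fare even worse in practice. The distance and frequencies are illustrative choices, not values from the article.

```python
import math

SPEED_OF_LIGHT_M_PER_S = 299_792_458


def free_space_path_loss_db(distance_m: float, frequency_hz: float) -> float:
    """Free-space path loss in dB between two isotropic antennas."""
    ratio = 4 * math.pi * distance_m * frequency_hz / SPEED_OF_LIGHT_M_PER_S
    return 20 * math.log10(ratio)


# Compare a typical microwave carrier with a millimeter-wave carrier at 1 km.
loss_2ghz = free_space_path_loss_db(1000, 2e9)
loss_28ghz = free_space_path_loss_db(1000, 28e9)
print(f"2 GHz:  {loss_2ghz:.1f} dB")
print(f"28 GHz: {loss_28ghz:.1f} dB")
print(f"extra loss at 28 GHz: {loss_28ghz - loss_2ghz:.1f} dB")
```

In this model, every tenfold increase in frequency adds 20 dB of loss at the same distance, which is part of the reason millimeter-wave systems lean on high-gain antenna arrays.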

Going further down to micrometer wavelengths, data capacity continues to increase, but at around 0.7 micrometers the waves enter the visible light range. Visible light cannot penetrate walls and is blocked even by a sheet of paper, so simply extending the cellular generations into optical wavelengths is not practical. Instead, optical or laser links are used, and these require precise alignment and an unobstructed line of sight.

Wavelengths near 0.3 micrometers (300 nm) are ultraviolet and can be harmful to humans. Solar ultraviolet contributes only a small percentage of sunlight energy, and normal exposure risks are well understood. In strong sunlight conditions, ultraviolet communication using narrow-beam optical signals can be a useful supplement to laser communication because it offers some concealment and does not require as strict alignment, and it is an area of recent military communications research.

Shorter wavelengths in the nanometer range are X-rays, used in medical imaging. Below about 0.01 nm are gamma rays, associated with nuclear processes and extremely high energies.

Amplitude is the other waveform parameter. If you do not know whether a cactus blocks radiation, you probably do not need to dive into amplitude details, so we will skip amplitude for now.

Representing 1 and 0

Back to microwave communication.

Why does higher frequency enable higher information throughput? With digital signals, information is a sequence of 1s and 0s, so first we need to know how electromagnetic waves represent binary data.

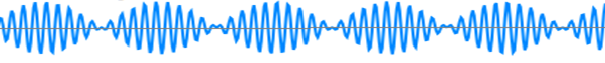

The first method is amplitude modulation (AM). The basic idea is to vary the wave amplitude: a large amplitude represents 1, and a small amplitude represents 0. AM radio uses this method. Its main disadvantage is sensitivity to noise, because noise and interference directly change the amplitude that carries the information.

The second method is frequency modulation (FM). The idea is to vary the carrier frequency: denser cycles represent 1, sparser cycles represent 0. FM radio uses this method, and it is more resistant to noise than AM.
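The two binary schemes just described can be sketched in a few lines. This is a toy model under stated assumptions: frequencies are given in cycles per bit period, the channel is noiseless, and all function names and default values are hypothetical choices for illustration.

```python
import math


def ask_waveform(bits, carrier=4.0, samples_per_bit=32, high=1.0, low=0.25):
    """Amplitude keying: same carrier, large amplitude for 1, small for 0."""
    samples = []
    for bit in bits:
        amplitude = high if bit else low
        for n in range(samples_per_bit):
            phase = 2 * math.pi * carrier * n / samples_per_bit
            samples.append(amplitude * math.sin(phase))
    return samples


def fsk_waveform(bits, f_one=8.0, f_zero=4.0, samples_per_bit=32):
    """Frequency keying: more cycles per bit period for 1, fewer for 0."""
    samples = []
    for bit in bits:
        freq = f_one if bit else f_zero
        for n in range(samples_per_bit):
            samples.append(math.sin(2 * math.pi * freq * n / samples_per_bit))
    return samples


def ask_demodulate(samples, samples_per_bit=32, threshold=0.6):
    """Decide each bit from the peak amplitude within its bit period."""
    bits = []
    for start in range(0, len(samples), samples_per_bit):
        peak = max(abs(s) for s in samples[start:start + samples_per_bit])
        bits.append(1 if peak > threshold else 0)
    return bits


def fsk_demodulate(samples, f_one=8.0, f_zero=4.0, samples_per_bit=32):
    """Decide each bit by correlating the bit period with both tones."""
    def score(chunk, freq):
        return abs(sum(s * math.sin(2 * math.pi * freq * n / samples_per_bit)
                       for n, s in enumerate(chunk)))

    bits = []
    for start in range(0, len(samples), samples_per_bit):
        chunk = samples[start:start + samples_per_bit]
        bits.append(1 if score(chunk, f_one) > score(chunk, f_zero) else 0)
    return bits


message = [1, 0, 1, 1, 0]
print(ask_demodulate(ask_waveform(message)))
print(fsk_demodulate(fsk_waveform(message)))
```

Both demodulators recover the original bits here because nothing disturbs the waveform; adding noise to the samples is a quick way to see why amplitude keying degrades first.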

Clearly, the more waveform cycles transmitted per unit time, the more bits can be represented. In other words, higher frequency provides higher potential data rates.

By this reasoning, a frequency of 800 MHz corresponds to 800 million cycles per second. If each cycle represented one bit, that would suggest a theoretical rate of 800 Mbit/s, or about 100 megabytes per second. Why don't we observe such straightforward rates in practice?
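Taking the one-bit-per-cycle assumption at face value, the arithmetic can be checked directly. The sketch below keeps megabits and megabytes separate, since the two are easy to confuse; the function name `naive_rate` is introduced here for illustration.

```python
def naive_rate(carrier_hz: float, bits_per_cycle: float = 1.0):
    """Return (megabits per second, megabytes per second) for the naive model."""
    bits_per_second = carrier_hz * bits_per_cycle
    megabits_per_second = bits_per_second / 1e6
    megabytes_per_second = bits_per_second / 8 / 1e6  # 8 bits per byte
    return megabits_per_second, megabytes_per_second


mbit, mbyte = naive_rate(800e6)
print(f"{mbit:.0f} Mbit/s = {mbyte:.0f} MB/s")
```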

Radio channels are lossy and prone to bit errors. A simple error-control approach is redundancy. For example, you can represent one logical "1" by transmitting ten thousand consecutive 1 bits. Even if some bits are lost, the receiver can still decide the intended bit from the pattern. This naive method works for some civil applications but is inefficient and easy to intercept or spoof, which is problematic for security-critical uses.
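The redundancy idea above is known as a repetition code with majority voting. A minimal sketch follows, using five repeats rather than ten thousand to keep the output readable; the bit-flip channel and all names are assumptions made for illustration.

```python
import random


def encode_repetition(bits, repeats=5):
    """Send every logical bit `repeats` times in a row."""
    return [bit for bit in bits for _ in range(repeats)]


def noisy_channel(bits, flip_probability, rng):
    """Flip each transmitted bit independently with the given probability."""
    return [bit ^ 1 if rng.random() < flip_probability else bit for bit in bits]


def decode_repetition(received, repeats=5):
    """Recover each logical bit by majority vote over its block."""
    decoded = []
    for start in range(0, len(received), repeats):
        block = received[start:start + repeats]
        decoded.append(1 if sum(block) * 2 > len(block) else 0)
    return decoded


rng = random.Random(1)  # fixed seed so the run is repeatable
message = [1, 0, 1, 1, 0, 0, 1, 0]
received = noisy_channel(encode_repetition(message), 0.1, rng)
print(decode_repetition(received))
```

The cost is visible in the encoder: five transmitted bits carry one bit of information, so the useful data rate drops to one fifth of the raw rate.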

Civilian systems prioritize distinguishability from other signals and practical efficiency, so they tend to use simpler schemes. With legacy 2G systems, an 800 MHz carrier might deliver only tens of kilobits per second under some configurations.

Military communications, to resist interception and jamming, use complex signaling combinations, frequency hopping, spread spectrum, and constantly changing patterns. These schemes consume more spectral and temporal resources to achieve security and robustness, which is why some military bands and implementations use higher frequencies or wider bandwidths.
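Frequency hopping, one of the techniques named above, can be illustrated with a toy model in which transmitter and receiver derive the same channel sequence from a shared secret seed. This is only a sketch: the channel list is hypothetical, and Python's `random.Random` is not cryptographically secure, so a real system would use a proper keyed generator and tight time synchronization.

```python
import random

# Hypothetical channel plan in MHz, for illustration only.
CHANNELS_MHZ = [225.0, 237.5, 250.0, 262.5, 275.0, 287.5, 300.0, 312.5]


def hop_sequence(shared_seed, channels, hops):
    """Derive a pseudorandom channel sequence from a seed both ends know."""
    rng = random.Random(shared_seed)
    return [rng.choice(channels) for _ in range(hops)]


transmitter = hop_sequence(shared_seed=2024, channels=CHANNELS_MHZ, hops=6)
receiver = hop_sequence(shared_seed=2024, channels=CHANNELS_MHZ, hops=6)
print(transmitter)
print(transmitter == receiver)
```

An eavesdropper without the seed sees short bursts scattered across the band, while the intended receiver retunes in step with the transmitter. The price is that the link occupies far more spectrum than the data itself would need.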

At present, classical cryptographic and spread-spectrum techniques remain practical defenses against interception, aside from nascent quantum communication methods.

Electronic warfare is an ongoing contest: when interception and decryption are difficult, adversaries may attempt jamming or spoofing by injecting their own signals to confuse receivers. That topic is outside this article; we return to 5G.

Key Technologies

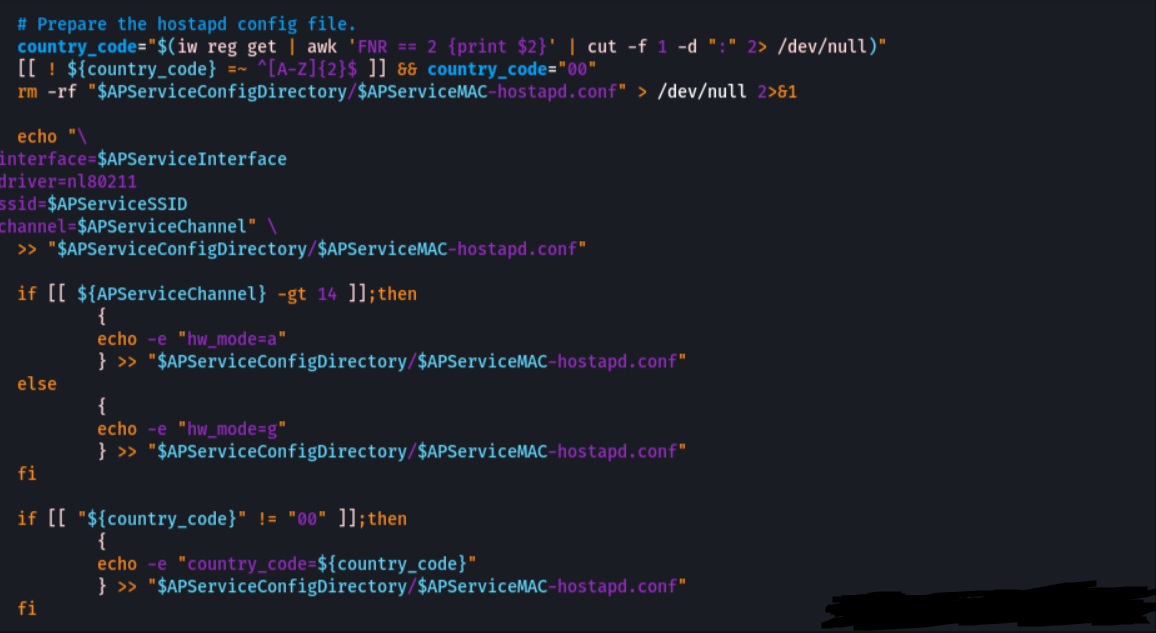

The preceding discussion covered basic principles. Now for the engineering details. 5G relies on several key technologies; here they are grouped and summarized.

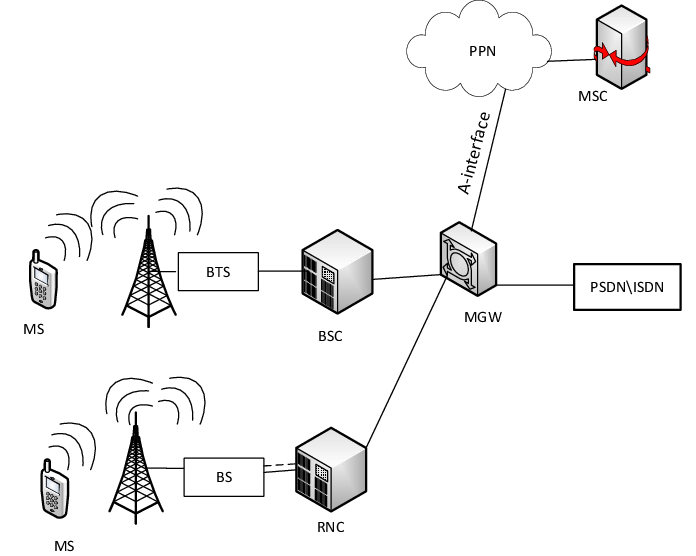

An oscillator feeding an antenna generates electromagnetic waves. Modulation is the process of varying frequency, amplitude, or phase to encode data. Demodulation at the receiver recovers the transmitted bits. Both transmission and reception require antennas—modern handsets still need antennas even when they appear sleek. 5G uses millimeter-wave bands that attenuate quickly in air, but regulations limit transmit power, so antenna design is critical.

First key 5G technology: massive multiple-antenna arrays. Put plainly, the number of antennas increases from one or a few to dozens or hundreds. This enables beamforming, which concentrates radiated power toward each user, and improves spectral efficiency and interference rejection. However, coordinating many antennas to transmit coherent signals requires new channel models and complex signal processing.

Massive antennas help overcome millimeter-wave attenuation and significantly boost throughput and interference resilience. Early demonstrations of large antenna arrays attracted industry attention, though commercial and financial outcomes have varied across vendors.
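The gain from many coordinated antennas can be sketched with the array factor of a uniform linear array. The model below assumes ideal identical elements at half-wavelength spacing, a fixed total transmit power shared equally among elements, and no mutual coupling; the function name and parameters are hypothetical.

```python
import cmath
import math


def array_gain_db(num_antennas, steer_deg, look_deg, spacing_wavelengths=0.5):
    """Power gain in dB, relative to one antenna with the same total power,
    of a uniform linear array steered to steer_deg and observed at look_deg."""
    phase_step = 2 * math.pi * spacing_wavelengths * (
        math.sin(math.radians(look_deg)) - math.sin(math.radians(steer_deg))
    )
    # Sum the per-element contributions with their relative phases.
    field = sum(cmath.exp(1j * k * phase_step) for k in range(num_antennas))
    # Each element radiates 1/N of the total power, hence the division by N.
    power = abs(field) ** 2 / num_antennas
    return 10 * math.log10(max(power, 1e-12))  # floor avoids log of zero


for n in (1, 8, 64, 256):
    print(f"{n:3d} antennas: {array_gain_db(n, 20, 20):.1f} dB toward the user")
print(f"64 antennas, 30 degrees off beam: {array_gain_db(64, 0, 30):.1f} dB")
```

In the steered direction the gain grows as 10·log10(N), so every doubling of the antenna count adds about 3 dB, while directions away from the beam receive far less power.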

After addressing base station antennas, attention turns to user equipment antennas and full-duplex operation.

Most phones use a single antenna and alternate between transmit and receive. Full duplex separates transmit and receive paths so both operate simultaneously. The challenge is self-interference: the transmit signal can overwhelm the receive path if not suppressed. Two broad approaches exist: physical isolation, such as shielding between antennas, and signal processing cancellation, including passive analog cancellation and active digital cancellation.
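Digital self-interference cancellation relies on the fact that a device knows its own transmit signal. A heavily simplified sketch follows: it assumes the leakage is a single real gain applied to the transmit samples, whereas real systems must handle delay, multipath, and nonlinearity. All names and numbers are illustrative.

```python
import random


def estimate_leakage_gain(tx, rx):
    """Least-squares estimate of h in the model rx = h * tx + wanted."""
    return sum(t * r for t, r in zip(tx, rx)) / sum(t * t for t in tx)


def cancel_self_interference(tx, rx):
    """Subtract the estimated copy of our own transmission from rx."""
    gain = estimate_leakage_gain(tx, rx)
    return [r - gain * t for t, r in zip(tx, rx)]


rng = random.Random(0)  # fixed seed so the run is repeatable
tx = [rng.choice((-1.0, 1.0)) for _ in range(2000)]            # our own signal
wanted = [0.01 * rng.choice((-1.0, 1.0)) for _ in range(2000)]  # weak remote signal
rx = [100.0 * t + w for t, w in zip(tx, wanted)]                # leakage dominates

cleaned = cancel_self_interference(tx, rx)
errors = sum((c > 0) != (w > 0) for c, w in zip(cleaned, wanted))
print(f"sign errors after cancellation: {errors} of {len(wanted)}")
```

Before cancellation the wanted signal is ten thousand times weaker in amplitude than the leakage, which is the situation the passive and active stages described above are meant to address.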

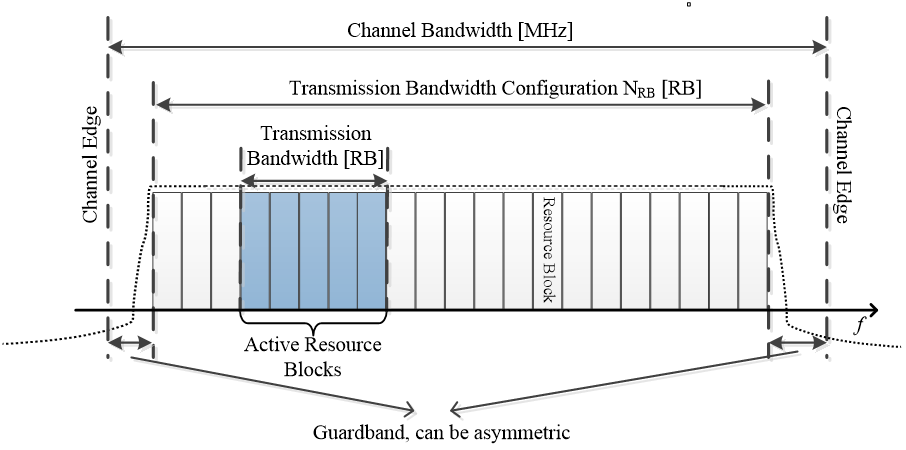

Another essential technology is new multiple access methods. Historically, mobile systems used:

- FDMA: each call occupies a distinct frequency band.

- TDMA: users share a frequency by taking turns in time slots.

- CDMA: users share the same frequency and time by using orthogonal or pseudorandom spreading codes so receivers pick out their intended code.

- OFDMA: orthogonal frequency-division multiple access divides the band into many closely spaced orthogonal subcarriers and assigns different subsets of them to different users, so multiple users are served simultaneously.

These approaches collectively are referred to as multiple access techniques. 5G introduces "new multiple access" schemes, such as sparse code multiple access, non-orthogonal multiple access, and graph-based multiple access, which combine and extend previous ideas to support many simultaneous connections efficiently. The details involve advanced mathematics and signal processing.
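To make the CDMA entry in the list above concrete, here is a toy sketch in which two users transmit at the same time on the same frequency using orthogonal Walsh codes. It assumes perfectly synchronized users, equal received power, and a noiseless channel; the helper names are hypothetical.

```python
def walsh_codes(order):
    """Return 2**order mutually orthogonal Walsh codes made of +1/-1 chips."""
    codes = [[1]]
    for _ in range(order):
        codes = ([row + row for row in codes]
                 + [row + [-chip for chip in row] for row in codes])
    return codes


def spread(bits, code):
    """Map bits to +1/-1 symbols and multiply each symbol by the user's code."""
    return [(1 if bit else -1) * chip for bit in bits for chip in code]


def despread(signal, code):
    """Correlate each code-length block with the code to recover the bits."""
    length = len(code)
    bits = []
    for start in range(0, len(signal), length):
        block = signal[start:start + length]
        correlation = sum(s * c for s, c in zip(block, code))
        bits.append(1 if correlation > 0 else 0)
    return bits


codes = walsh_codes(2)  # four codes, four chips each
bits_a, bits_b = [1, 0, 1, 1], [0, 0, 1, 0]

# Both users transmit simultaneously; the channel simply adds their signals.
channel = [a + b for a, b in zip(spread(bits_a, codes[1]), spread(bits_b, codes[2]))]

print(despread(channel, codes[1]))  # user A's bits
print(despread(channel, codes[2]))  # user B's bits
```

Because the codes are orthogonal, correlating with one user's code cancels the other user's contribution exactly. The non-orthogonal schemes mentioned for 5G relax this condition to fit more users into the same resources, at the cost of more complex receivers.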

To meet its target metrics (peak rates up to 10 Gbit/s, massive connection densities per square kilometer, and end-to-end latencies around 1 ms), 5G combines massive multiple-antenna arrays (massive MIMO), full duplex, and new multiple access.

Industry validation efforts demonstrated these technologies in stages. For example, combined techniques including filtered OFDM, sparse code multiple access, and polar coding with large antenna arrays increased throughput significantly. Self-interference cancellation frameworks combining passive analog, active analog, and digital cancellation achieved very high suppression levels, yielding substantial throughput gains.

Later integration tests with wider bandwidths showed multi-gigabit downlink rates per user and even higher peak cell rates, supporting many simultaneous high-definition video streams per base station. These demonstrations progressed across testing phases as the ecosystem matured.

Beyond core radio technologies, system-level issues also matter: network resource allocation is analogous to traffic control—poor allocation can degrade entire networks. After validating key radio techniques, efforts shift to independent network trials, power consumption optimization, and cost control for mass deployment.

Chips

5G handles far larger data volumes than previous generations, and where data bits are handled, silicon is involved. RF front-ends, baseband processing, and encoding/decoding require specialized chips.

Several vendors developed millimeter-wave capable baseband (BB) and RF chips. The millimeter-wave 5G bands around 28 GHz require RF ICs designed for those frequencies, and only a handful of manufacturers have such capabilities. Major players developed multi-mode 5G modems and base station silicon, with progress toward smaller, power-efficient implementations for handsets and infrastructure.

Standards

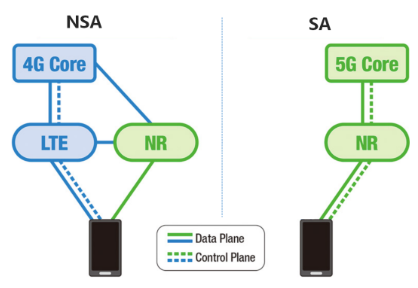

5G comprises many technologies and requires agreed rules. Standardization is as important as technical development. The first phase of 5G standards was completed in mid-2018, marking the first comprehensive international 5G specification, with remaining parts finalized in subsequent releases.

The standardization process involved many companies worldwide. In channel coding, different camps favored different codes: one side preferred turbo codes, another favored LDPC, and another advocated polar codes. The standardization outcome used LDPC codes for data channels and polar codes for control channels, reflecting compromises among industry participants.

Standards work is iterative, and until the full standards suite is finalized and implemented in silicon and systems, commercial deployments continue to evolve.

Use Cases and Significance

The International Telecommunication Union defined key 5G use-case categories: enhanced mobile broadband, massive machine-type communications, and ultra-reliable low-latency communications. These categories correspond to requirements such as high throughput, very large numbers of connected devices, and very low latency with high reliability.

In plain terms, 5G promises higher speed, wider coverage of services, and lower latency. The technology is foundational for more comprehensive digital transformation and can enable many Internet of Things scenarios and new applications that were not practical with earlier mobile generations.

To put it figuratively: imagine stripping away 2G, 3G, and 4G and returning to the early cellular era; the contrast shows how transformative 5G can be. The gap between 5G and 4G is comparable to the gap between 4G and 1G.

ALLPCB
