Abstract

Human-machine hybrid intelligence arises from interactions among humans, machines, and the environment. This article reviews recent research directions and application progress in the field. It first outlines DARPA's recent initiatives in human-machine hybrid intelligence; then summarizes the latest research and application advances in core technologies, including brain-computer interfaces, trustworthy artificial intelligence, virtual and augmented reality, and environmental perception; finally, it discusses the main challenges facing human-machine hybrid intelligence and its implications for future military development. The review indicates that machines will increasingly act as human colleagues rather than mere tools. Core technologies have reached varying degrees of operational application, while issues such as role and responsibility allocation between humans and machines, capability distribution, interaction interfaces, and ethical regulation remain key obstacles. Human-machine hybrid intelligence will accelerate military transformation and produce fundamental changes in operational patterns, equipment systems, and force generation models.

Keywords

human-machine hybrid intelligence; artificial intelligence; military applications; brain-computer interface; trustworthy AI; virtual reality; environmental perception

1. Introduction

Artificial intelligence captures only the programmable, describable portion of human intelligence. Human intelligence emerges from interactions among people, machines (objects), and the environment. Objective intelligence (artificial intelligence) and subjective intelligence (human intelligence) are evolving into a complementary system that can be described as human-machine hybrid intelligence. Current AI development should prioritize human-machine collaboration, cooperation, and mutual trust rather than simply replacing humans with machines. The organic integration produced by human-machine interaction has become central to AI's future development. The goal is to extend and amplify human intelligence through human-machine collaboration to address complex problems more effectively. Recent reports and surveys have identified AI that augments human capabilities as one of the most promising directions and forecast closer human-robot collaboration in the coming decade, including in military applications.

2. DARPA’s Accelerated Initiatives in Human-Machine Hybrid Intelligence

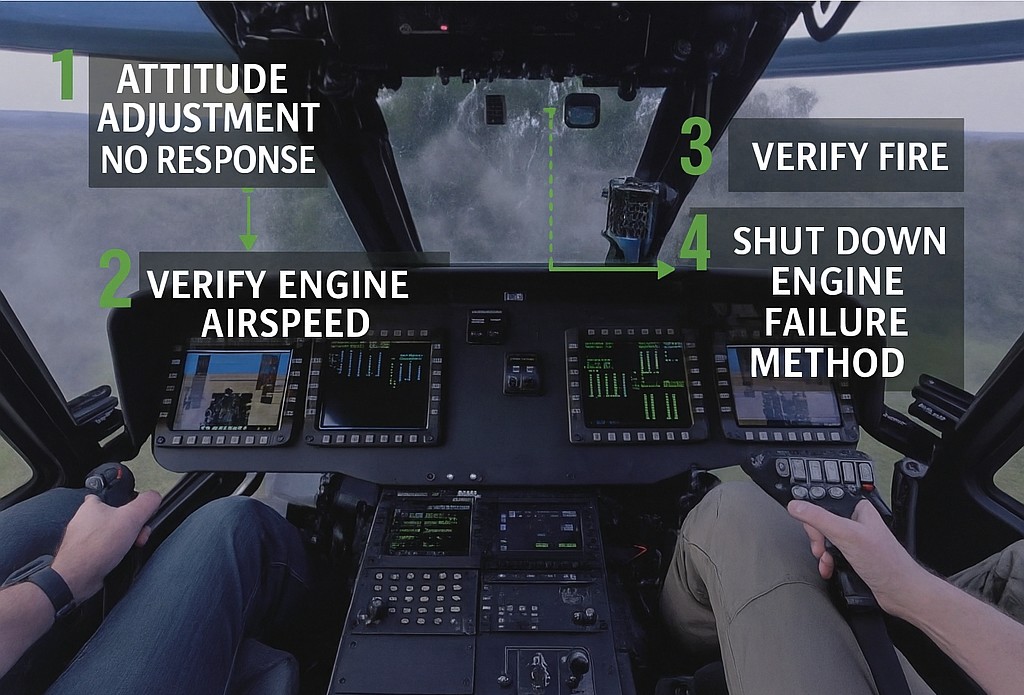

The U.S. Defense Advanced Research Projects Agency (DARPA) has prioritized research on human-machine hybrid intelligence, with many projects focused on air combat. In 2016 DARPA launched the Neural Engineering System Design (NESD) program. Its goal is an implantable, biocompatible neural interface no larger than 1 cm³ that connects the human brain directly to computers by translating the brain's electrochemical signals into electronic form. In 2018 DARPA launched the Next-Generation Nonsurgical Neurotechnology (N3) program to develop high-resolution, portable neural interfaces that can read from and write to multiple brain locations without surgery, enabling high-bandwidth communication between the brain and external systems for able-bodied service members.

In February 2021 DARPA’s Information Innovation Office announced the Perceptually-enabled Task Guidance (PTG) program. PTG aims to develop AI that broadens users' skill sets, improves their proficiency by reducing errors, and helps soldiers take on more complex tasks. The program includes AI task-guidance assistants that analyze video and audio with deep learning, automated reasoning for monitoring tasks and plans, and augmented reality human-machine interfaces. In June 2022 DARPA launched the Assured Neuro Symbolic Learning and Reasoning (ANSR) program to evaluate human-machine hybrid intelligence in military contexts and to improve the transparency, interoperability, and robustness of autonomous platforms.

Fig. 1 AI task guidance assistant from the PTG project (example: UH-60 Black Hawk pilot training)

Within the Air Combat Evolution (ACE) program, public attention focused on the "AlphaDogfight" trials, in which an AI agent beat a human pilot in simulated dogfights. DARPA's primary objective, however, was to explore cooperation and mutual trust between humans and AI. ACE aims to solve the challenges of human-machine collaboration in air combat and to develop trusted, scalable, human-level, AI-driven hybrid autonomous systems. A milestone came in February 2021 with "Scrimmage 1", a simulated dogfight that demonstrated virtual human-machine hybrid engagements with more complex weapons employment: a gun for short-range precision shots and a missile for long-range targets. In March 2021 DARPA reported further ACE milestones, including human-machine flight trials that monitored pilots' physiology and their trust in AI, and preliminary modification of a full-size trainer aircraft to carry an AI "pilot" in later phases.

3. Overview of Core Technologies for Human-Machine Hybrid Intelligence

Human-machine hybrid intelligence spans many technical domains. Brain-computer interfaces, trustworthy AI, virtual and augmented reality, and environmental perception currently form its core technology cluster: they are the field's most mature research areas and the ones most deeply applied in practice.

3.1 Brain-Computer Interfaces

A brain-computer interface (BCI) establishes a direct pathway for transmitting signals between the brain and external devices. BCI research has a long history: it began with the discovery of the electroencephalogram (EEG) in 1924, and the BCI concept was proposed in the early 1970s. Around 2000, advances in EEG detection drove major progress, and clinical and commercial interest intensified after regulatory milestones in the mid-2000s, such as FDA approvals. Since 2005 BCI has moved toward clinical application and commercialization, with strong relevance to medical monitoring, autonomous driving, education, and military equipment.

BCIs are commonly categorized as invasive or non-invasive. Non-invasive BCIs have entered commercial promotion, whereas invasive BCIs remain largely confined to the laboratory because of technical and ethical constraints, although they may reach clinical use in the future. Market research projects substantial global growth for BCIs. The United States currently leads BCI research, with broad activity across government and commercial organizations, and has built significant technological barriers for competitors.

Invasive BCIs require surgical implantation of neural sensors but offer higher communication bandwidth. Neuralink, a representative company, has reported several milestones: inserting flexible electrodes into rodent brains; demonstrating the LINK V0.9 implant in pigs; primate experiments in which a monkey controlled a video game by intention; and implanting devices in paralyzed patients so that they can control external devices. These experiments aim to establish safe, effective, wireless, implantable BCI systems for clinical use. Surgical trauma hinders broad clinical application, so current research seeks ways to reduce or avoid the risks of craniotomy.

Non-invasive BCIs avoid surgical harm but must cope with weak signals, limited recognition accuracy, and limited real-time performance. Examples include neural-network visualizations of mental imagery from EEG, and products that translate thought into device commands. A central research challenge is to balance the high communication rate of invasive BCIs against the safety of non-invasive ones, that is, to extract as much neural information as possible while causing as little brain injury as possible.
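Communication rate in BCI research is often quantified with the Wolpaw information transfer rate (ITR), which combines the number of possible targets, selection accuracy, and time per selection. The sketch below computes it under that formula's standard assumptions (N equally likely targets, errors spread uniformly over the wrong targets); the target counts, accuracies, and timings in the usage lines are hypothetical and do not describe any device mentioned here.

```python
import math


def wolpaw_bits_per_selection(n_targets: int, accuracy: float) -> float:
    """Bits conveyed per selection under the Wolpaw ITR model.

    Assumes n_targets equally likely choices and that errors are spread
    uniformly over the n_targets - 1 wrong choices.
    """
    if n_targets < 2:
        raise ValueError("need at least two targets")
    if not 0.0 <= accuracy <= 1.0:
        raise ValueError("accuracy must be in [0, 1]")
    bits = math.log2(n_targets)
    if accuracy > 0.0:  # P * log2(P) term; taken as 0 when P == 0
        bits += accuracy * math.log2(accuracy)
    if accuracy < 1.0:  # error term; taken as 0 when P == 1
        bits += (1.0 - accuracy) * math.log2((1.0 - accuracy) / (n_targets - 1))
    return bits


def itr_bits_per_minute(n_targets: int, accuracy: float, seconds_per_selection: float) -> float:
    """Information transfer rate in bits per minute."""
    return wolpaw_bits_per_selection(n_targets, accuracy) * 60.0 / seconds_per_selection


# Hypothetical comparison on a 26-letter speller:
# a slower, accurate system vs. a faster, noisier one.
print(round(itr_bits_per_minute(26, 0.95, 2.0), 2))
print(round(itr_bits_per_minute(26, 0.80, 1.0), 2))
```

With these hypothetical numbers the faster, less accurate configuration comes out ahead, which is one reason speed and accuracy need to be reported together when comparing invasive and non-invasive systems.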

Recent clinical progress includes a minimally invasive approach from a neurovascular bioelectronics company that enabled a patient with amyotrophic lateral sclerosis (ALS) to send messages by intention, followed by human clinical trials and further implant cases. Other teams have used preoperative functional MRI to localize target areas precisely and then implanted very few intracranial electrodes through a minimally invasive procedure, enabling typing at competitive speeds. Still others have used intracortical electrode arrays to decode neural activity associated with attempted handwriting, reaching typing speeds comparable to those of smartphone users of similar age.
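Decoding which character a user is attempting to write can be framed as classifying a vector of neural features, such as binned firing rates from an electrode array. The sketch below uses a nearest-centroid classifier. It is a deliberately simple stand-in and not the decoders used in the studies mentioned above. The three-channel features and character labels are hypothetical.

```python
import math
from collections import defaultdict
from typing import Dict, List, Tuple


def fit_centroids(samples: List[Tuple[str, List[float]]]) -> Dict[str, List[float]]:
    """Average the feature vectors recorded for each attempted character.

    samples: (label, feature_vector) pairs, e.g. binned firing rates per channel.
    """
    sums: Dict[str, List[float]] = {}
    counts: Dict[str, int] = defaultdict(int)
    for label, vec in samples:
        if label not in sums:
            sums[label] = [0.0] * len(vec)
        for i, x in enumerate(vec):
            sums[label][i] += x
        counts[label] += 1
    return {label: [s / counts[label] for s in acc] for label, acc in sums.items()}


def decode(centroids: Dict[str, List[float]], vec: List[float]) -> str:
    """Return the label whose centroid is closest (Euclidean distance) to vec."""
    return min(centroids, key=lambda label: math.dist(centroids[label], vec))


# Hypothetical training trials: 3-channel firing-rate features per attempted letter.
training = [
    ("a", [10.0, 2.0, 1.0]), ("a", [12.0, 3.0, 0.0]),
    ("b", [1.0, 11.0, 2.0]), ("b", [2.0, 9.0, 3.0]),
    ("c", [0.0, 2.0, 10.0]), ("c", [1.0, 1.0, 12.0]),
]
centroids = fit_centroids(training)
print(decode(centroids, [11.0, 2.5, 0.5]))  # this vector equals the "a" centroid
```

Real decoders must also handle timing, since a character unfolds over hundreds of milliseconds, and they adapt as neural recordings drift over days. That is why published work tends to use sequence models rather than a static classifier like this one.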

3.2 Trustworthy Artificial Intelligence

Trustworthy AI has become a major research focus because humans can manage AI "partners" effectively only if they can trust and collaborate with them. Research in this area seeks methods for human-machine interoperability and mutual trust, so that human expert knowledge can be fed back into machines, allowing systems to improve themselves and make interpretable, trustworthy decisions. Trustworthy AI is considered essential for military decision-making at the operator, commander, political, and public levels.

DARPA’s eXplainable Artificial Intelligence (XAI) program, launched in 2016, is regarded as a comprehensive military initiative addressing these issues. XAI covered explainable learning methods, psychological models of explanation, and an evaluation framework. Research teams explored probabilistic and causal models, state machines derived from reinforcement learning, Bayesian rule lists, visual saliency maps, and other explainability techniques. Prototype AI systems developed under XAI can give initial explanations of their reasoning, characterize their strengths and weaknesses, and convey how they will behave in the future.
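Bayesian rule lists produce an ordered sequence of if-then rules. Their appeal for explainability is that the rule which fires is itself the explanation of the decision. The sketch below shows only how such a list is applied and explained, not the Bayesian procedure that learns the rules from data. The rules, feature names, and thresholds are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

Features = Dict[str, float]


@dataclass
class Rule:
    description: str                       # human-readable condition
    condition: Callable[[Features], bool]  # machine-checkable version of it
    decision: str


def apply_rule_list(rules: List[Rule], default: str, x: Features) -> Tuple[str, str]:
    """Return (decision, explanation) from the first rule whose condition holds."""
    for rule in rules:
        if rule.condition(x):
            return rule.decision, f"IF {rule.description} THEN {rule.decision}"
    return default, f"no rule matched, ELSE {default}"


# Hypothetical track-classification rules (illustrative only, not from any XAI system).
rules = [
    Rule("speed > 300 and altitude < 500",
         lambda x: x["speed"] > 300 and x["altitude"] < 500, "threat"),
    Rule("transponder_ok == 1", lambda x: x["transponder_ok"] == 1, "friendly"),
]
decision, why = apply_rule_list(rules, "unknown",
                                {"speed": 350, "altitude": 200, "transponder_ok": 1})
print(decision, "|", why)
```

Because rules are checked in order, the explanation also tells the operator which earlier rules did not apply. This is harder to extract from post-hoc methods such as saliency maps.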

Trustworthy AI is now a focus across academia and government. Key research dimensions include privacy, security and robustness, interpretability, non-discrimination and fairness, environmental wellbeing, and accountability and auditability. Efforts to make graph neural networks, an emerging class of deep-learning models, more trustworthy are also gaining attention. Recent reviews highlight shortcomings in privacy, robustness, fairness, and interpretability in current graph neural network applications and outline directions for trustworthy design.
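One concrete measure for the non-discrimination and fairness dimension is demographic parity: the gap in positive-prediction rates between groups. For a graph neural network, each prediction would typically correspond to a node. The sketch below uses hypothetical predictions and group labels. Other fairness criteria, such as equalized odds, may suit a given task better.

```python
from collections import defaultdict
from typing import Sequence


def demographic_parity_gap(predictions: Sequence[int], groups: Sequence[str]) -> float:
    """Largest difference in positive-prediction rate between any two groups.

    predictions: 0/1 model outputs; groups: group label for each prediction.
    A gap of 0 means every group receives positive predictions at the same rate.
    """
    if len(predictions) != len(groups):
        raise ValueError("predictions and groups must have equal length")
    if not predictions:
        raise ValueError("need at least one prediction")
    positives = defaultdict(int)
    totals = defaultdict(int)
    for pred, group in zip(predictions, groups):
        positives[group] += pred
        totals[group] += 1
    rates = [positives[g] / totals[g] for g in totals]
    return max(rates) - min(rates)


# Hypothetical node predictions for two groups.
preds = [1, 0, 1, 1, 0, 0, 1, 0]
grps = ["A", "A", "A", "A", "B", "B", "B", "B"]
print(demographic_parity_gap(preds, grps))
```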

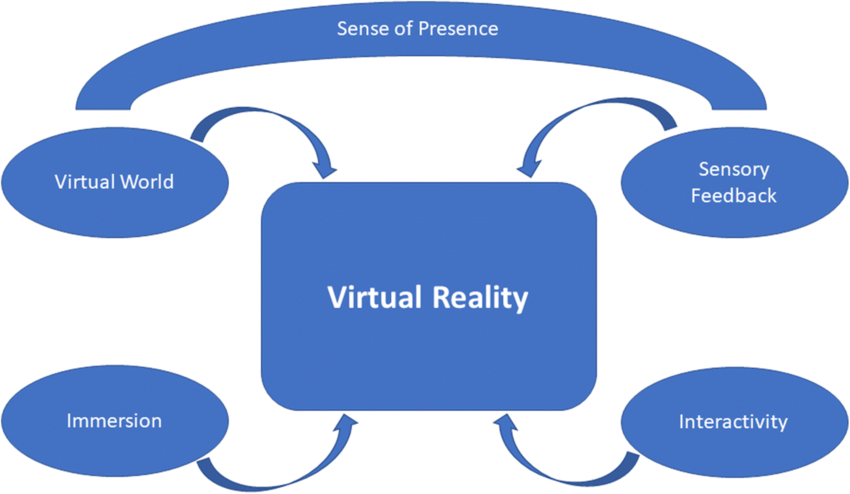

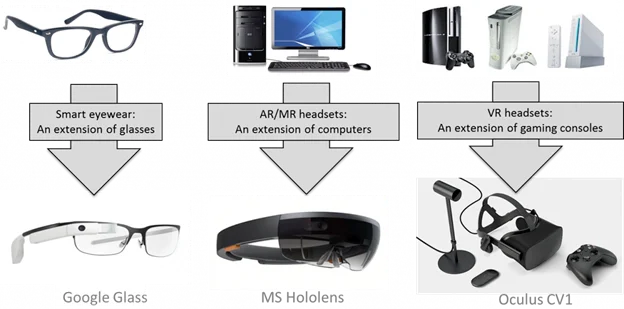

3.3 Virtual and Augmented Reality

Virtual reality (VR), augmented reality (AR), and extended reality (XR) are among the earliest technical directions for human-machine hybrid intelligence research. The idea of a metaverse has brought renewed attention to VR/AR technologies and investment in immersive computing platforms. VR/AR technologies have made measurable advances and are increasingly applied in military training. Immersive training can address limitations of traditional training—such as location, cost, and logistics—by providing interactive, repeatable experiences that engage trainees cognitively and emotionally.

In the United States, the Air Force and Army have been significant users of these technologies. For example, a virtual testing and training center enables fighter pilots to practice advanced tactics through simulation. Under DARPA PTG funding, prototypes are being developed to embed AI assistants into AR helmets for pilots, providing voice and graphical guidance at appropriate times and locations. The Army has developed the Integrated Visual Augmentation System (IVAS) to deliver clearer, information-rich battlefield imagery, and synthetic training environments are being advanced to more accurately simulate weapon systems and interactive effects.

Challenges for VR/AR adoption span four main areas: (1) technological uncertainty including compute, network bandwidth, and latency constraints for specialized equipment; (2) energy supply, since large-scale VR scenarios require extensive infrastructure such as data centers and communication networks; (3) financial and regulatory issues tied to virtual assets and their valuation; and (4) legal and ethical governance, including addiction, virtual crime, privacy protection, digital asset ownership, and regulatory compliance.
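The latency constraint in item (1) can be made concrete as a motion-to-photon budget. The delay from a user's head movement to the updated image is the sum of the pipeline stages in between. In the sketch below, the stage names and millisecond values are hypothetical. The 20 ms target is an assumption reflecting a figure commonly cited for comfortable head-mounted displays, not a requirement stated in this article.

```python
from typing import Dict, Tuple

# Assumed target; a commonly cited figure for head-mounted displays, not a standard.
MOTION_TO_PHOTON_BUDGET_MS = 20.0


def check_latency_budget(stages_ms: Dict[str, float],
                         budget_ms: float = MOTION_TO_PHOTON_BUDGET_MS) -> Tuple[float, float]:
    """Sum per-stage latencies; return (total_ms, headroom_ms). Negative headroom = over budget."""
    total = sum(stages_ms.values())
    return total, budget_ms - total


# Hypothetical pipelines: rendering on the headset vs. offloading rendering over a network.
local = {"tracking": 2.0, "render": 8.0, "display_scanout": 6.0}
remote = {"tracking": 2.0, "uplink": 5.0, "render": 6.0,
          "downlink_and_decode": 9.0, "display_scanout": 6.0}

for name, stages in (("local", local), ("remote", remote)):
    total, headroom = check_latency_budget(stages)
    status = "within" if headroom >= 0 else "over"
    print(f"{name}: {total:.1f} ms, {status} budget by {abs(headroom):.1f} ms")
```

Offloading rendering saves compute on the headset but adds network stages. This is why bandwidth and latency appear together in the list of constraints.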

3.4 Environmental Perception

Human-machine hybrid intelligence depends critically on perception of and adaptation to the environment as well as interaction and co-existence with environmental elements. Adaptive AI that senses and adapts to rapidly changing external environments is identified as a strategic technology trend.

The concept that an intelligent agent must interact fully with its environment dates back to Turing’s imitation game. In 2022, embodied intelligence regained attention as a leading AI research direction. "Embodiment" refers to the capacity to interact with the environment and perform tasks within it. Leading researchers have emphasized that general intelligence relies on sensorimotor interaction between agents and the world, proposing an embodied Turing test as an ultimate challenge. Recent models aimed at multi-modal, complex environments enable robots to follow natural language instructions to perform real-world tasks. Research has also improved object detection accuracy in complex environments by focusing on environmental interaction.

DARPA has continued to emphasize environment-aware learning. Its initiatives include language acquisition programs that aim to let systems learn language the way children do, through auditory and visual perception of their surroundings. Other projects aim to create agents that continuously perceive the environment and learn language and vision from it, to support robust human-machine collaborative analysis of images, video, and multimedia documents during time-sensitive defense tasks.
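Learning word meanings "the way children do" is often modeled as cross-situational learning. A learner hears words while seeing several objects and, across many scenes, associates each word with the object it co-occurs with most reliably. The sketch below is a minimal co-occurrence-counting version of that idea, not the method of any DARPA program. The scenes and vocabulary are hypothetical.

```python
from collections import Counter, defaultdict
from typing import Dict, Iterable, List, Tuple

Scene = Tuple[List[str], List[str]]  # (words heard, objects seen)


def learn_word_meanings(scenes: Iterable[Scene]) -> Dict[str, str]:
    """Map each word to the object it most often co-occurs with across scenes."""
    cooccur: Dict[str, Counter] = defaultdict(Counter)
    for words, objects in scenes:
        for word in set(words):
            cooccur[word].update(set(objects))
    return {word: counts.most_common(1)[0][0] for word, counts in cooccur.items()}


# Each scene is ambiguous on its own; only the statistics across scenes disambiguate.
scenes = [
    (["look", "truck"], ["truck", "tree"]),
    (["the", "truck", "moves"], ["truck", "road"]),
    (["a", "tree"], ["tree", "road"]),
    (["tree", "there"], ["tree", "truck"]),
]
meanings = learn_word_meanings(scenes)
print(meanings["truck"], meanings["tree"])
```

With so few scenes, function words such as "look" or "the" receive arbitrary mappings. Practical systems need far more data and some way to recognize words that do not name objects.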

4. Challenges Facing Human-Machine Hybrid Intelligence

The main challenges include the following:

- Role and responsibility allocation between humans and machines. Effective task-sharing requires clear understanding of objectives, constraints, and available resources. Ambiguity can lead to poor function allocation, organizational confusion, and failure to realize the full capabilities of humans, machines, and the environment.

- Human-machine interaction interfaces. The interface is the bridge between human and artificial intelligence. Humans must be able to modify machine-generated outputs (for example, operational plans) through the interface so that AI systems can retrain incrementally and learn human expertise and constraints. Graphical interfaces are common but scale poorly. Natural language and voice interfaces are likely future directions, but current intelligent voice assistants still fall short in response latency, complex question answering, and use of dialogue history.

- Ethical and regulatory issues. Privacy and security are central concerns: thought-based communication could eliminate personal privacy and expose the brain to hacking. Limited access to these technologies could also widen inequality. Researchers and developers must take these ethical dimensions seriously and subject each step to ethical review. The aim is for systems to remain under human control and for hybrid intelligence to augment human agency rather than improperly interfere with it.
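The interface requirement above, in which humans modify machine outputs so that the system can retrain incrementally, can be sketched as an online learning loop. Each time an operator overrides a prediction, the model is updated on the corrected example. The perceptron update, features, and labels below are illustrative assumptions. A fielded system would use a more robust learner and would vet corrections before retraining on them.

```python
from typing import List


class OnlinePerceptron:
    """Binary linear classifier updated one human-corrected example at a time."""

    def __init__(self, n_features: int, lr: float = 1.0):
        self.w: List[float] = [0.0] * n_features
        self.b = 0.0
        self.lr = lr

    def predict(self, x: List[float]) -> int:
        score = sum(wi * xi for wi, xi in zip(self.w, x)) + self.b
        return 1 if score > 0 else 0

    def incorporate_correction(self, x: List[float], human_label: int) -> bool:
        """Update only if the human label disagrees with the model; return True if updated."""
        error = human_label - self.predict(x)  # +1, 0, or -1
        if error == 0:
            return False
        self.w = [wi + self.lr * error * xi for wi, xi in zip(self.w, x)]
        self.b += self.lr * error
        return True


model = OnlinePerceptron(n_features=2)
# Hypothetical operator reviews: (features, label the human assigned).
reviews = [([1.0, 0.2], 1), ([0.1, 1.0], 0), ([0.9, 0.1], 1), ([0.2, 0.8], 0)]
for _ in range(5):  # a few passes over the reviewed examples
    for x, label in reviews:
        model.incorporate_correction(x, label)
print([model.predict(x) for x, _ in reviews])
```

Updating only on disagreements means operator effort goes directly into the cases the machine gets wrong, which is the point of letting humans edit outputs.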

5. Implications for Future Military Development

Human-machine hybrid intelligence will accelerate military transformation and fundamentally alter operational styles, equipment systems, and force generation. Examples include:

- Intelligence analysis. These tasks involve multiple objectives, unknown outcomes, and complex processes. Hybrid approaches combine large-scale data analysis with interactive, iterative visualization, so that subject-matter experts can apply domain knowledge and adjust machine-generated analyses. Human feedback can be incorporated through retraining loops, producing joint human-machine analytic results that extract key intelligence from heterogeneous, multimodal sources.

- Combat decision support. Collaboration among various unmanned systems to complete tasks across scenarios has become common. Introducing human expertise into the attack-defense decision loop helps address ambiguity and uncertainty, compensating for AI shortcomings and producing amplified intelligence effects. Machine-generated operational decisions must be explainable and trustworthy to human operators.

- Human-machine formations. Across land, air, and sea, human-machine collaboration and trust relationships will transform force composition. Neither purely AI nor purely human systems can achieve optimal effectiveness. Hybrid formations, in which many unmanned systems engage autonomously at the front while humans exercise supervisory control, will become standard, with humans shifting from frontline operators to commanders focused on cognitive tasks and control.
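The decision-support point, that human expertise should be brought in where the machine faces ambiguity, is often implemented as confidence-based deferral. The machine acts on its own recommendation only above a confidence threshold and otherwise routes the case to a human, along with its rationale. In the minimal sketch below, the threshold, case fields, and `ask_human` callback are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Recommendation:
    case_id: str
    action: str
    confidence: float  # machine's estimated probability that `action` is correct
    rationale: str     # explanation shown to the operator when the case is deferred


def route(recs: List[Recommendation], threshold: float,
          ask_human: Callable[[Recommendation], str]) -> List[Tuple[str, str, str]]:
    """Accept confident recommendations; defer the rest to a human decision-maker."""
    decisions = []
    for rec in recs:
        if rec.confidence >= threshold:
            decisions.append((rec.case_id, rec.action, "machine"))
        else:
            decisions.append((rec.case_id, ask_human(rec), "human"))
    return decisions


recs = [
    Recommendation("c1", "monitor", 0.97, "signature matches known civilian traffic"),
    Recommendation("c2", "escalate", 0.55, "partial match; sensor data degraded"),
]
# Stand-in for an operator interface: here the human always chooses "hold".
print(route(recs, threshold=0.9, ask_human=lambda rec: "hold"))
```

The threshold trades machine autonomy against human workload. It is only meaningful if the confidence scores are calibrated, which ties this pattern back to the explainability and trust requirements above.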

6. Conclusion

Future warfare will integrate humans, machines, and the environment. This paradigm is not just automation but a move toward wisdom-based operations. Human intelligence retains advantages in perception, reasoning, induction, and learning, while machine intelligence excels at search, computation, storage, and optimization. Their complementarities will produce an enhanced intelligence where collaborative fusion yields effects greater than the sum of parts (1+1>2). Human-machine hybrid intelligence is a bidirectional closed-loop system: machines present information to humans and machines can also read human signals, enabling mutual influence and improvement. Under this framework, the fundamental objective of artificial intelligence evolves toward augmenting human intelligence to assist in complex dynamic tasks more effectively.

ALLPCB
