Introduction

Passwords are widely used in daily life: phone unlocking, website logins, and online or mobile payments. For security, users often set different passwords for different accounts and change them periodically. Over time this can cause confusion or forgotten passwords, leading to password-recovery procedures.

Fingerprints are unique, difficult to replicate, and generally hard for others to steal, making them an effective biometric credential.

Concept and Origins of Fingerprint Recognition

A fingerprint consists of the raised and recessed lines on the pad of a human fingertip. The ordered arrangement of these lines forms distinct patterns. The detail features of a fingerprint, known as minutiae, include ridge endings and bifurcations. Because fingerprints are permanent, unique, and convenient to collect, they have become almost synonymous with biometric identification.

Fingerprint study has a long history. In 1788 Mayer first suggested that no two people have identical fingerprints. In 1823 Purkinje classified fingerprint patterns into nine types. In 1889 Henry proposed the minutiae-based fingerprint identification theory, laying the foundation of modern fingerprint science.

Manual comparison is slow and inefficient. In the 1960s, computers, image processing, and pattern recognition began to be used for fingerprint analysis, giving rise to automated fingerprint identification systems (AFIS). By the late 1970s and early 1980s, AFIS were in use in criminal investigations. In the 1990s, AFIS entered civilian use.

Each person's skin ridge patterns, including fingerprints, differ in pattern, breaks, and intersections, and remain constant for life. By matching a person's fingerprint to stored fingerprint data, their identity can be verified. This is the principle of fingerprint recognition.

Fingerprint recognition identifies individuals based on ridge patterns and minutiae features. Advances in electronic manufacturing and algorithm research have brought fingerprint recognition into everyday use, making it one of the most mature and widely applied biometric technologies.

Before 2013, fingerprint sensors were mainly used in industrial and security applications. After Apple introduced a phone with an integrated fingerprint sensor in 2013, the fingerprint sensor market expanded rapidly, and sensors moved beyond simple device unlocking to authentication and mobile payment security.

Operating Principle of Fingerprint Recognition

Although fingerprint recognition is now common in civilian applications, its operating principle is still relatively complex. Unlike manual methods, biometric systems typically store a template of extracted features rather than the raw fingerprint image, largely because of legal and privacy considerations. Over the years, many fingerprint recognition algorithms have been developed, but they all reduce to detecting features in a fingerprint image and comparing them. The fundamental process is to capture a fingerprint image and compare its features to stored templates.

Fingerprint Features

Fingerprint features used for verification are generally categorized into global features and local features.

Global Features

Global features are those observable directly by eye. They include:

- Basic pattern types: common fingerprint patterns include loops, arches, and whorls. Other patterns are variations of these three basic types. Pattern classification helps search large databases but is insufficient alone for identification.

- Pattern area: the region containing the primary pattern features, which helps determine the fingerprint type.

- Core point: the center region of fingerprint ridges used as a reference for extraction and matching.

- Delta point: the first bifurcation, break, convergence, or turning point originating from the core; it provides a starting point for counting and tracing ridge flow.

- Pattern lines: cross lines appearing where ridge lines around the pattern area become parallel; they are usually short.

- Ridge count: the number of ridges within the pattern area, typically measured by counting ridge intersections along a line connecting core and delta points.
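The ridge count described in the last item can be sketched as counting valley-to-ridge transitions along a straight line between the two reference points. This is a minimal illustration, assuming an already binarized image stored as a list of rows (1 for ridge, 0 for valley); the function name `ridge_count` and the sampling approach are hypothetical and not taken from any particular system.

```python
def ridge_count(image, core, delta, samples=200):
    """Count ridges crossed on the straight line from core to delta.

    image: 2D list of 0/1 values (1 = ridge pixel, 0 = valley pixel).
    core, delta: (row, col) tuples. All names here are illustrative.
    """
    (r0, c0), (r1, c1) = core, delta
    count = 0
    previous = 0  # treat the position before the first sample as valley
    for i in range(samples + 1):
        t = i / samples
        r = round(r0 + t * (r1 - r0))
        c = round(c0 + t * (c1 - c0))
        current = image[r][c]
        # A new ridge starts each time the line steps from valley to ridge.
        if current == 1 and previous == 0:
            count += 1
        previous = current
    return count
```

A real implementation would also need to decide whether ridges passing through the core or delta themselves are counted; this sketch simply counts every ridge the line enters.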

Local Features

Local features are minutiae on the fingerprint. Two fingerprints may share global features but will not have identical local features. Types of local features include:

- Minutiae: interruptions, bifurcations, or turns in ridge lines provide unique identification information.

- Ridge endings: points where a ridge terminates.

- Bifurcations: points where a ridge splits into two.

- Center points: geometric centers where ridge curvature is maximal.

- Deltas: convergence points of ridges in different directions.

- Crossings: locations where two ridges cross.

- Islands: very short ridges.

- Sweat pores: small holes on ridges corresponding to sweat gland openings.

Usage notes:

- Ridge endings and bifurcations are the most commonly used features; algorithms record their positions and orientations.

- Core and delta points are widely used in forensic systems but less so in consumer systems because many consumer sensors are small and may not capture these points.

- Crossings and islands are often omitted in practical systems due to computational difficulty.

- Sweat pores have been proposed for identification but require very high-resolution sensors and are rarely used in practical systems.
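As an illustration of how ridge endings and bifurcations can be located, the sketch below uses the crossing-number method, one common textbook approach that the text above does not name. It assumes the fingerprint has already been thinned to a one-pixel-wide skeleton (1 for ridge, 0 for background); `find_minutiae` is a hypothetical name, and orientation estimation is omitted for brevity.

```python
# Offsets of the 8 neighbours in circular order around a pixel (row, col).
NEIGHBOURS = [(-1, 0), (-1, 1), (0, 1), (1, 1),
              (1, 0), (1, -1), (0, -1), (-1, -1)]


def find_minutiae(skeleton):
    """Return (row, col, kind) for ridge endings and bifurcations.

    skeleton: 2D list of 0/1 values, ridges thinned to one pixel wide.
    Border pixels are skipped because they lack a full neighbourhood.
    """
    minutiae = []
    rows, cols = len(skeleton), len(skeleton[0])
    for r in range(1, rows - 1):
        for c in range(1, cols - 1):
            if skeleton[r][c] != 1:
                continue
            ring = [skeleton[r + dr][c + dc] for dr, dc in NEIGHBOURS]
            # Crossing number: half the number of 0/1 changes around the ring.
            changes = sum(abs(ring[i] - ring[(i + 1) % 8]) for i in range(8))
            crossing_number = changes // 2
            if crossing_number == 1:
                minutiae.append((r, c, "ending"))
            elif crossing_number == 3:
                minutiae.append((r, c, "bifurcation"))
    return minutiae
```

A crossing number of 2 indicates an ordinary point along a ridge, which is why only the values 1 and 3 are recorded.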

Fingerprint Recognition Process

Fingerprint recognition consists of enrollment and recognition phases. During enrollment, a user’s fingerprint is captured and the system extracts features to create a template stored in a database or designated storage. During recognition or verification, the user’s fingerprint is captured and automatically compared against stored templates, and the system returns a match result.

In many applications, users may also provide auxiliary information such as an account or username to assist the matching process. This enrollment/recognition flow is common across biometric systems.
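The enrollment and verification flow can be sketched as follows. This is a simplified illustration under stated assumptions: minutiae are represented as `(x, y, angle_in_degrees)` tuples, the two prints are assumed to be already aligned, and the tolerances, the 0.6 threshold, and the names `match_score` and `FingerprintStore` are all hypothetical. Real matchers also handle rotation, translation, and skin distortion.

```python
import math


def match_score(template, probe, dist_tol=10.0, angle_tol=20.0):
    """Fraction of minutiae that pair up, assuming pre-aligned prints."""
    unused = list(probe)
    matched = 0
    for x, y, angle in template:
        for candidate in unused:
            cx, cy, c_angle = candidate
            # Smallest absolute difference between two angles in degrees.
            angle_diff = abs((angle - c_angle + 180) % 360 - 180)
            if math.hypot(x - cx, y - cy) <= dist_tol and angle_diff <= angle_tol:
                matched += 1
                unused.remove(candidate)  # each probe minutia pairs once
                break
    return matched / max(len(template), len(probe), 1)


class FingerprintStore:
    """Keeps feature templates (not raw images) keyed by user ID."""

    def __init__(self, threshold=0.6):
        self.templates = {}
        self.threshold = threshold

    def enroll(self, user_id, minutiae):
        self.templates[user_id] = list(minutiae)

    def verify(self, user_id, minutiae):
        """1:1 check against the template of a claimed identity."""
        template = self.templates.get(user_id)
        if template is None:
            return False
        return match_score(template, minutiae) >= self.threshold

    def identify(self, minutiae):
        """1:N search; returns the best user ID above threshold, or None."""
        best_id, best_score = None, 0.0
        for user_id, template in self.templates.items():
            score = match_score(template, minutiae)
            if score > best_score:
                best_id, best_score = user_id, score
        return best_id if best_score >= self.threshold else None
```

Supplying a username corresponds to `verify`, which compares against a single template, while `identify` searches every stored template and is correspondingly slower on large databases.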

Main Types of Fingerprint Sensing Technologies

Fingerprint image acquisition methods currently on the market fall into four main categories: optical scanning, thermal (temperature-based) sensing, semiconductor-based sensing, and ultrasonic scanning.

1. Optical Recognition

Optical fingerprint capture is the oldest and most widely used technique. The finger is placed on an optical platen. Under internal illumination, a prism projects the fingerprint onto a charge-coupled device (CCD) to form a digital gray-scale image where ridges appear dark and valleys appear light.

Optical sensors have several advantages: long-term use has validated the approach, they tolerate some temperature variation, and they can achieve relatively high resolution such as 500 DPI. The primary advantage is low cost.
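To put the 500 DPI figure in perspective, the short calculation below converts a resolution in dots per inch to a pixel pitch and estimates how many pixels span one ridge-to-ridge period. The 0.5 mm ridge period is an assumed round figure used only for illustration, and the function names are hypothetical.

```python
MICROMETRES_PER_INCH = 25_400


def pixel_pitch_um(dpi):
    """Centre-to-centre pixel spacing in micrometres for a given DPI."""
    return MICROMETRES_PER_INCH / dpi


def pixels_per_ridge_period(dpi, ridge_period_mm=0.5):
    """Pixels covering one ridge plus one valley (period is an assumption)."""
    return ridge_period_mm * 1000 / pixel_pitch_um(dpi)


print(pixel_pitch_um(500))           # pitch in micrometres at 500 DPI
print(pixels_per_ridge_period(500))  # pixels per assumed 0.5 mm ridge period
```

At 500 DPI the pitch works out to 25,400 / 500 = 50.8 µm, so under the assumed ridge period each ridge and valley pair is sampled by roughly ten pixels.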

Limitations include the need for a sufficiently long optical path and thus larger size, and degraded performance with excessively dry or oily fingers. Latent prints left on the platen can reduce image quality and may cause overlapping impressions. Platen coatings and CCD arrays can degrade over time, lowering image quality. Optical sensors also generally cannot perform reliable liveness detection and are sensitive to finger cleanliness.

Because light cannot penetrate beyond the surface stratum corneum, optical sensors read surface detail, so dirt on the finger surface can affect recognition. Spoofing with gelatin or other molds of a fingerprint can sometimes succeed against optical sensors, reducing security.

2. Thermal/Temperature-Sensing Technology

Thermal sensors detect temperature differences. Each pixel functions as a miniature thermal sensor detecting temperature variations between the finger and the sensor area, producing an electrical signal representing image information.

Advantages include very fast capture (on the order of 0.1 s) and minimal sensor area, which enables compact sliding fingerprint modules.

However, thermal detection is constrained by temperature equalization: after a short time the finger and sensor reach similar temperatures, reducing contrast and degrading the captured image.

3. Semiconductor Capacitive Technology

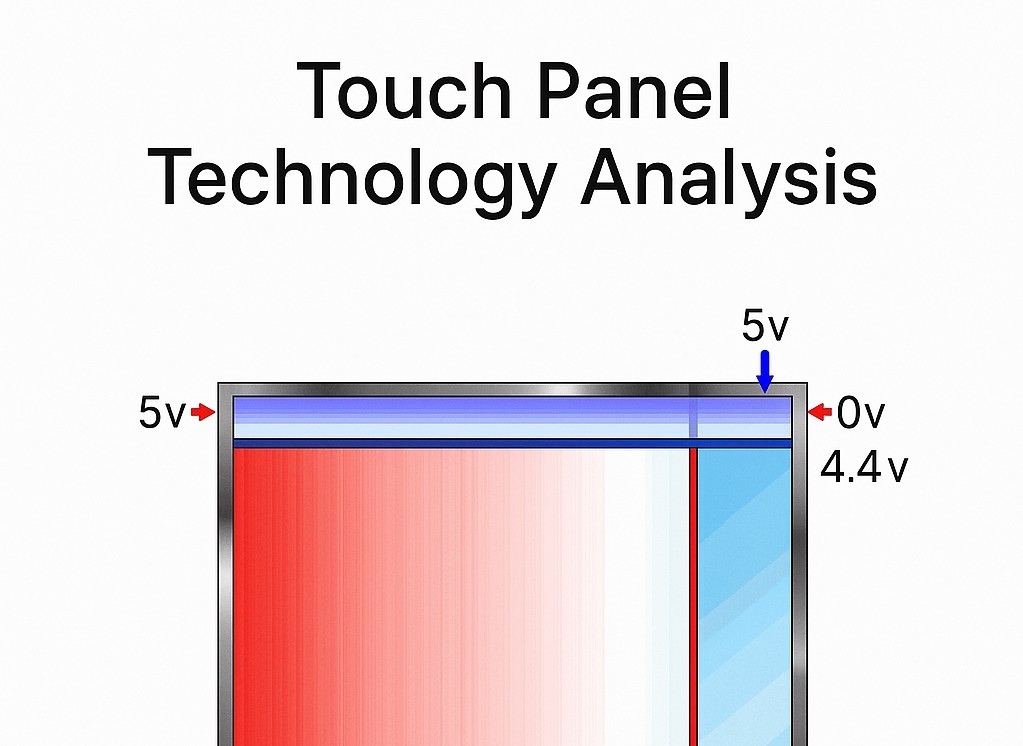

From the late 1990s, semiconductor capacitive sensing matured. The silicon sensor forms one plate of a capacitor and the finger forms the other. Ridges and valleys create capacitance differences relative to a flat silicon surface, producing an 8-bit gray-scale image.

Capacitive sensors transmit electronic signals that can penetrate the finger’s surface and the dead skin layer to reach deeper live skin layers, directly reading ridge details. Because they sense deeper structure, they capture more reliable data and are less affected by surface contamination, improving accuracy and reducing false matches.

Semiconductor fingerprint sensors include pressure-sensing and thermal-sensing variants, but capacitive sensors are the most widely used.

Capacitive sensors work by precharging sensing elements to a reference voltage. When the finger contacts the sensor, ridges (closer to the sensor) and valleys (farther from the sensor) form different capacitance values. The discharge rate varies accordingly: pixels under ridges (higher capacitance) discharge more slowly than pixels under valleys (lower capacitance). By measuring discharge rates, ridges and valleys are detected and a fingerprint image is formed.
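The relationship between skin distance, capacitance, and discharge time can be illustrated with an idealized parallel-plate model. This is a rough sketch, not a description of any real sensor: the pixel size, gaps, discharge current, and voltage drop below are hypothetical values, the dielectric is lumped into a single effective gap, and the discharge is assumed to be driven by a constant current.

```python
EPSILON_0 = 8.854e-12  # vacuum permittivity in F/m


def plate_capacitance(area_m2, gap_m, relative_permittivity=1.0):
    """Ideal parallel-plate capacitance C = eps0 * eps_r * A / d."""
    return EPSILON_0 * relative_permittivity * area_m2 / gap_m


def discharge_time(capacitance_f, delta_v, current_a):
    """Time for a constant current to lower the pixel voltage by delta_v."""
    return capacitance_f * delta_v / current_a


def classify_pixel(time_s, threshold_s):
    """Slow discharge means high capacitance, i.e. skin close to the pixel."""
    return "ridge" if time_s > threshold_s else "valley"


# Hypothetical numbers: 50 um x 50 um pixel, 1 V drop, 1 nA discharge current.
area = 50e-6 * 50e-6
t_ridge = discharge_time(plate_capacitance(area, 1e-6), 1.0, 1e-9)
t_valley = discharge_time(plate_capacitance(area, 50e-6), 1.0, 1e-9)
threshold = (t_ridge + t_valley) / 2
print(classify_pixel(t_ridge, threshold), classify_pixel(t_valley, threshold))
```

Because capacitance is inversely proportional to the gap, the pixel under a ridge holds more charge at the same voltage and therefore takes longer to discharge, which is the contrast mechanism the paragraph above describes.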

Unlike optical devices that often require manual tuning, capacitive sensors use automatic control to adjust pixel sensitivity and local response, generating high-quality images across conditions. They can boost sensitivity in low-contrast areas (for example, lightly pressed regions) during capture to produce usable images.

Capacitive sensors deliver good image quality with minimal distortion, small size, and easy integration. They read beyond the dead skin layer into live tissue, improving security. Their small form factor, low cost, high resolution, and low power consumption made capacitive sensors a second-generation alternative to optical sensors and well suited for security and consumer electronics integration.

4. Ultrasonic Recognition

Ultrasonic fingerprint capture is a newer technology that uses ultrasound's ability to penetrate materials and generate different echoes depending on material and surface structure, enabling differentiation between ridges and valleys by acoustic impedance contrast.

Ultrasound covers frequencies from about 2×10^4 Hz to 1×10^9 Hz, and the energy levels used are controlled to be safe for humans (comparable to medical diagnostic ultrasound). Ultrasonic systems can achieve high accuracy and are less sensitive to finger or platen cleanliness, but capture time is typically longer and cost is higher. Some ultrasonic approaches also face challenges with liveness detection, and their current use is relatively limited.
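The acoustic impedance contrast mentioned above can be made concrete with the standard normal-incidence formula for the fraction of acoustic intensity reflected at a boundary, R = ((Z2 - Z1) / (Z2 + Z1))^2. The impedance values in this sketch are approximate, order-of-magnitude figures chosen for illustration (a glass-like platen, soft tissue, and air); they are assumptions, not measurements of any specific sensor.

```python
def reflection_coefficient(z1, z2):
    """Fraction of acoustic intensity reflected at a z1 -> z2 boundary."""
    return ((z2 - z1) / (z2 + z1)) ** 2


# Approximate acoustic impedances in rayl (kg / (m^2 * s)); assumed values.
Z_PLATEN = 13e6   # glass-like platen
Z_SKIN = 1.6e6    # soft tissue, where a ridge touches the platen
Z_AIR = 415.0     # air trapped in a valley

r_ridge = reflection_coefficient(Z_PLATEN, Z_SKIN)
r_valley = reflection_coefficient(Z_PLATEN, Z_AIR)
print(f"ridge echo:  {r_ridge:.3f}")
print(f"valley echo: {r_valley:.5f}")
```

Under these assumptions almost all of the energy is reflected where air fills a valley, while noticeably less returns where a ridge touches the platen, so echo strength distinguishes the two.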

Micro-Optical Fingerprint Sensing

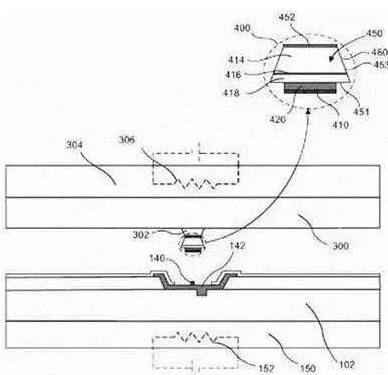

Both mainstream capacitive sensors and emerging ultrasonic sensors typically require a separate fingerprint sensing element. Capacitive sensors often require a visible opening in the display, whereas ultrasonic sensors can be placed under the display. In 2017 Apple and Goodix introduced micro-optical sensing that integrates the display and fingerprint sensor, enabling multi-point fingerprint recognition across the full screen.

MicroLED technology, involving miniaturized, matrixed LEDs, allows high-density arrays of tiny LEDs on a chip. Each pixel can be individually addressed and driven, reducing pixel spacing from millimeter to micrometer scale. MicroLED displays use thin-film, miniaturized LED arrays transferred to a circuit substrate, enabling compact display integration.

According to a U.S. patent published in 2017, a display panel integrating infrared LEDs and photodiodes can include interactive pixels that combine RGB and infrared emitters and infrared detectors at very high panel resolution. During fingerprint sensing, a specific screen region or selected rows scan the user’s fingerprint. The captured bitmap, including incident light intensity, allows analysis of surface features: dark and bright spots correspond to ridges and valleys, enabling fingerprint recognition while integrating sensing into the display.
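Turning the captured intensity bitmap into a ridge map can be sketched with a simple global threshold. This is a minimal illustration only: the patent's actual processing is not described here, the mean-intensity threshold is an assumed choice, and whether ridges appear dark or bright depends on the optical arrangement, so it is exposed as a hypothetical `ridges_are_dark` parameter.

```python
def binarize(bitmap, ridges_are_dark=True):
    """Convert an intensity bitmap into a 0/1 ridge map.

    bitmap: 2D list of light intensities (for example 0-255).
    Returns a 2D list with 1 for ridge pixels and 0 for valley pixels,
    using the mean intensity as a global threshold.
    """
    values = [v for row in bitmap for v in row]
    threshold = sum(values) / len(values)
    return [
        [1 if (v < threshold) == ridges_are_dark else 0 for v in row]
        for row in bitmap
    ]
```

A global threshold is the simplest option; uneven illumination or finger pressure would normally call for a locally adaptive threshold instead.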

Outlook

Fingerprint recognition remains a mainstream biometric method for identity authentication and is widely applied across industries. Historically, devices with fingerprint functions required a dedicated physical sensor area, but with advances in touch and display integration, the trend is moving toward fingerprint sensing anywhere on the screen. Embedded fingerprint sensing integrated into core components reduces constraints on industrial design and expands implementation options.

As integration of fingerprint sensing and display technologies matures, broader applications such as fast payments, identification, and personalized preferences are likely to rely more on fingerprint-based authentication, potentially replacing many password-based use cases.

ALLPCB
