Introduction

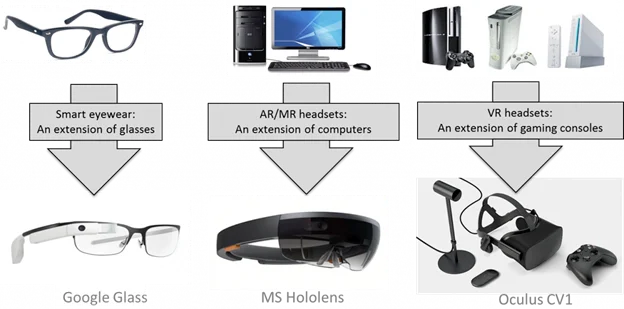

Virtual reality (VR), augmented reality (AR), and related technologies offer three-dimensional interfaces, natural interaction, and spatial computing that differ fundamentally from today's mobile internet paradigms. They are widely regarded as candidates for the next general-purpose computing platform. After Google introduced Google Glass in 2012 and Facebook acquired VR headset maker Oculus in 2014, the VR/AR industry saw a wave of startups and investment from 2015 to 2017, followed by a downturn in 2018. As global 5G deployment accelerated from late 2019, VR and AR regained attention as important 5G use cases.

Although the COVID-19 pandemic disrupted production and daily life worldwide in 2020, parts of the VR/AR industry saw increased demand, because social distancing drove interest in VR gaming, virtual meetings, and AR-based temperature checks. VR active users on the Steam platform roughly doubled, and virtual conferences and cloud-based exhibitions proliferated. The terms VR, AR, MR, and XR are often used together, which can be confusing. This article first clarifies these concepts and then sets the stage for deeper discussions of XR core technologies, application scenarios, and industry trends.

1. Virtual Reality (VR)

Concept

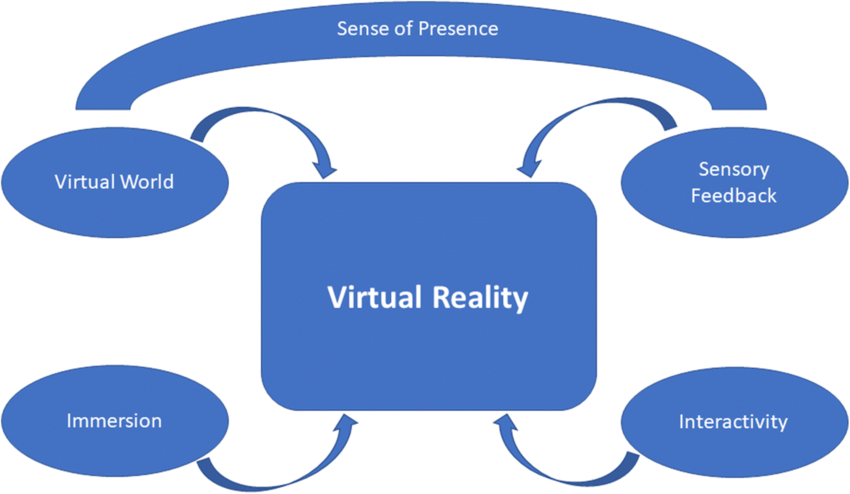

Virtual reality is a rendered version of a visual and audio scene that is delivered to the user. The rendering aims to reproduce visual and auditory stimuli as naturally as possible while an observer or user moves within an application-defined space. VR typically, though not always, requires a head-mounted display that replaces the user's field of view, together with headphones for the accompanying audio. VR systems usually track head and body motion and use it to update the rendered visuals and audio, so that images and sound sources stay consistent with the user's movements. Other means of interacting with the virtual simulation can be provided, but they are not mandatory [1].
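The tracking step described above can be illustrated with a minimal sketch. It is not taken from any VR runtime; the function name `world_to_head` and the coordinate convention (a top-down view, x to the right, z forward, positive yaw meaning the head turns to the right) are assumptions chosen for illustration. It shows how a sound source or object that is fixed in the world is re-expressed relative to the head each time the tracked pose changes.

```python
import math

def world_to_head(point, head_pos, head_yaw_deg):
    """Express a world-fixed point relative to the user's head.

    Assumed convention (hypothetical, for illustration only):
    points are (x, z) on a top-down floor plan, yaw 0 looks along +z,
    and positive yaw turns the head to the right (toward +x).
    Returns (right, forward) distances in the head's own frame.
    """
    yaw = math.radians(head_yaw_deg)
    dx = point[0] - head_pos[0]
    dz = point[1] - head_pos[1]
    # Project the offset onto the head's right and forward axes.
    right = dx * math.cos(yaw) - dz * math.sin(yaw)
    forward = dx * math.sin(yaw) + dz * math.cos(yaw)
    return right, forward

# A virtual speaker fixed 2 m in front of the starting position.
speaker = (0.0, 2.0)
for yaw in (0, 45, 90):
    right, forward = world_to_head(speaker, (0.0, 0.0), yaw)
    print(f"yaw={yaw:3d} deg -> right={right:+.2f} m, forward={forward:+.2f} m")
```

As the head turns to the right, the speaker drifts to the user's left in head-relative terms, which is what keeps it apparently fixed in the world. A real system would do the same with full 3D position and orientation for both the image and the audio.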

History

VR has long been an aspirational technology aimed at making dreams feel real. In 1935, author Stanley Weinbaum described a VR-style headset in a work of fiction, offering multisensory immersion that included vision, smell, and touch; this was an early articulation of the VR concept. In 1957, cinematographer Morton Heilig invented the Sensorama, a large simulator that used multiple displays to create a sense of space; users experienced immersion by positioning their heads inside the device.

In 1968, Ivan Sutherland, a pioneer of computer graphics and Turing Award recipient, developed the first computer-graphics-driven head-mounted display, The Sword of Damocles, which also foreshadowed early augmented reality. VR presents users with a fully virtual environment separated from the real world, typically via a head-mounted display. Because of its strong immersion, VR has become prominent in entertainment and social applications, and consumer-level products have proliferated.

Common headset categories

Phone-based headsets: Visual quality depends on the phone inserted into the headset, including screen resolution, processor speed, and sensor accuracy. Google Cardboard and Samsung Gear VR belong to this category and are the most affordable options.

PC/Console-tethered headsets: For the best visual experience, these headsets connect to a PC or a game console (Sony's PSVR connects to a PS4) and use the host CPU and GPU for rendering. They typically require many cables and offer excellent visuals but limited mobility. Representative products include HTC Vive Pro Eye and Sony PlayStation VR.

Standalone headsets: Standalone devices use mobile chips (for example, Qualcomm Snapdragon series) for rendering and tracking. Freed from connections to a PC, console, or phone, they are plug-and-play and highly convenient. Typical devices include Oculus Quest and the Pico Neo series. Standalone headsets are becoming mainstream.

VR glasses: The lightest VR headsets. Like tethered headsets they depend on an external device, but rendering is handled by the chip of a connected phone rather than a PC or console. For example, Huawei has released lightweight VR glasses weighing about 200 g.

The isolation and immersion of VR are both strengths and limitations; because VR is detached from the real world, its practical utility can be limited, which has driven the development of alternative approaches such as AR.

2. Augmented Reality (AR)

Concept

Augmented reality overlays additional information or computer-generated objects onto a user's view of the real environment. These augmentations are usually visual or auditory. Observation of the environment can be direct, without intermediate processing, or indirect when sensors relay perceptions of the environment and the inputs are processed or enhanced [1]. In AR the user still sees the real scene; virtual content is integrated into that view via displays or glasses. Often the virtual content is simply composited without deep real-time understanding of the physical environment.

History

AR emerged to address practical needs. The term was coined in 1990 by Boeing researcher Tom Caudell. AR first found applications in professional B2B fields, such as virtual assistance systems for aircraft maintenance and Columbia University's KARMA repair assistance system. AR entered the public eye through flat-screen displays (computer, TV, phone) that composite real images with virtual objects. In 1998, AR was used in live broadcasts to display the first-down line in American football. A major milestone was the release of the first open-source AR SDK, ARToolKit, which allowed many developers to create AR applications. Today multiple AR engines support mobile app development and AR is increasingly present in daily life, though flat-screen AR offers limited immersion.

Wearable AR devices have proven more challenging. Google launched Google Glass in June 2012, but the product did not achieve broad success. Consumer-grade AR glasses remain immature, though breakthroughs could appear in the near future.

3. Mixed Reality (MR)

Concept

Mixed reality is an advanced form of AR in which virtual elements are integrated into the physical scene so that they become part of it. In MR, most virtual content is generated based on an understanding of the physical environment, creating a stronger sense that virtual objects are part of the real world [1].

History

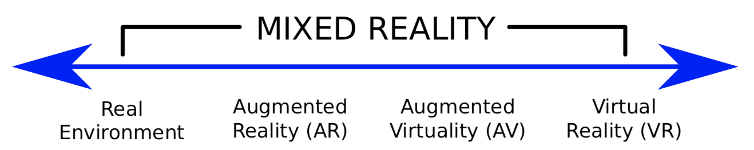

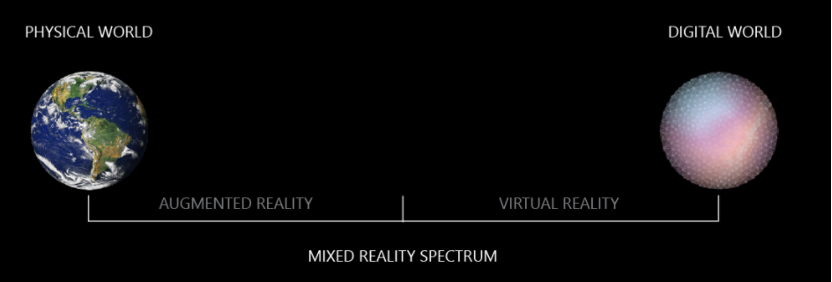

MR blends dreams and reality. The term and a formal definition date back to a 1994 paper by Paul Milgram and Fumio Kishino, which introduced the concept of the Virtuality Continuum to illustrate the relationship among reality, augmented reality, and virtual reality. The continuum ranges from the fully real environment on one end to the fully virtual environment on the other. AR lies closer to the real end, VR is at the fully virtual end, and MR describes the transition between them.
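The continuum can be pictured as a single number running from 0 (fully real) to 1 (fully virtual). The sketch below is only an illustration of that idea: the function name `classify_on_continuum`, the `virtual_fraction` parameter, and the 0.5 cut-off are assumptions made here, not values from the Milgram and Kishino paper.

```python
def classify_on_continuum(virtual_fraction):
    """Label a point on a 0..1 scale of 'how virtual' an experience is.

    0.0 is the fully real environment and 1.0 the fully virtual one.
    The 0.5 cut-off is an arbitrary illustrative choice.
    """
    if not 0.0 <= virtual_fraction <= 1.0:
        raise ValueError("virtual_fraction must be between 0 and 1")
    if virtual_fraction == 0.0:
        return "real environment"
    if virtual_fraction == 1.0:
        return "virtual reality (VR)"
    # Everything strictly between the two ends belongs to the transition (MR).
    if virtual_fraction < 0.5:
        return "mixed reality, near the real end (AR)"
    return "mixed reality, near the virtual end"

for value in (0.0, 0.2, 0.8, 1.0):
    print(value, "->", classify_on_continuum(value))
```

The point of the sketch is that the categories are regions of one scale rather than separate technologies, so the boundaries between them are a matter of convention.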

Originally MR described a process rather than a specific technology stack, characterizing products by how much virtual content is blended with the user's view of reality. Over time some companies, notably Microsoft, defined MR as the fusion of VR and AR that provides virtualized experiences grounded in digital representations of real scenes. Microsoft distinguishes the three as follows: overlaying graphics onto a video stream of the physical world is AR; blocking the real world to present digital imagery is VR; experiences that fall between these two extremes constitute MR.

Compared with AR, which typically composites virtual objects on top of a view of the real world, MR aims to make virtual items exist in a more realistic, integrated manner. MR can also bring real-world objects into a virtual space so that entities from both realms interact. This breaks the separation between the two spaces and produces a unified experience. MR is technically the most demanding of the three. In practice, MR is better understood as a capability of AR or VR headsets than as a standalone product category. Current commercial MR-capable devices include Microsoft HoloLens and Magic Leap, though both remain at an early stage of maturity.

For example, consider a real office scene. If a system detects a flat surface in the real office and places virtual objects such as a dog, a globe, a monitor, or a vase on that plane, the result is a typical AR scenario. If the environment is digitally modeled so the entire office becomes virtualized but preserves real-world boundaries and the observer can navigate around virtualized desks, walls, and people as if they were physical obstacles, then the experience is MR because the digital and physical realms understand and merge with each other.
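The office example can be sketched in a few lines. This is a simplified illustration under stated assumptions, not the API of any AR or MR SDK: the `Plane` and `Obstacle` types, the functions `place_on_plane` and `try_move`, and all dimensions are hypothetical. The first function mirrors the AR case (anchor a virtual object on a detected surface); the second mirrors the MR case (the modeled real-world geometry constrains movement in the virtual scene).

```python
from dataclasses import dataclass

@dataclass
class Plane:
    """A detected horizontal surface, e.g. a desktop (metres)."""
    height: float
    x_range: tuple
    z_range: tuple

@dataclass
class Obstacle:
    """Floor-plan footprint of a real object such as a desk or wall."""
    name: str
    x_range: tuple
    z_range: tuple

def _inside(x, z, x_range, z_range):
    return x_range[0] <= x <= x_range[1] and z_range[0] <= z <= z_range[1]

def place_on_plane(plane, x, z):
    """AR-style placement: anchor a virtual object on the detected plane.

    Returns the 3D anchor (x, y, z), or None if (x, z) is off the surface.
    """
    if not _inside(x, z, plane.x_range, plane.z_range):
        return None
    return (x, plane.height, z)

def try_move(position, target, obstacles):
    """MR-style navigation: reject a step that ends inside a real obstacle."""
    for obstacle in obstacles:
        if _inside(target[0], target[1], obstacle.x_range, obstacle.z_range):
            return position  # blocked, stay where we are
    return target

desk_top = Plane(height=0.75, x_range=(0.0, 1.5), z_range=(0.0, 0.8))
room = [Obstacle("desk", (0.0, 1.5), (0.0, 0.8))]

print(place_on_plane(desk_top, 0.5, 0.4))        # virtual vase on the desk
print(try_move((2.0, 2.0), (1.0, 0.5), room))    # step into the desk is blocked
print(try_move((2.0, 2.0), (2.5, 2.0), room))    # free space, move succeeds
```

In the AR case the system only needs one surface; in the MR case it needs a model of the whole room, which is why MR places heavier demands on environment understanding.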

4. Extended Reality (XR)

Concept

Extended reality refers to all real-and-virtual combined environments and human-machine interactions generated by computer technology and wearable devices. Representative forms include AR, MR, VR, and crossover scenarios between them. The level of virtuality ranges from partial sensory input in AR to fully immersive VR. A key aspect of XR is the extension of human experience, including presence (as in VR) and enhanced cognition (as in AR) [1].

History

Because MR and AR can be difficult to distinguish and share underlying technologies, the term XR gained traction around November 2016. Qualcomm actively promoted XR and introduced integrated chips for both virtual reality and augmented reality. Under this broader definition, XR encompasses AR, VR, MR, and the continuum between them, because similar foundational technologies drive all of these experiences.

Historically the term XR has also been used in optics to denote an expanded visible spectrum (for example, ultraviolet or infrared imaging), but that usage is distinct from the immersive-computing context discussed here.

This article introduced the XR concept. Subsequent coverage will examine XR core technologies, application scenarios, industry dynamics, and future trends.

ALLPCB
