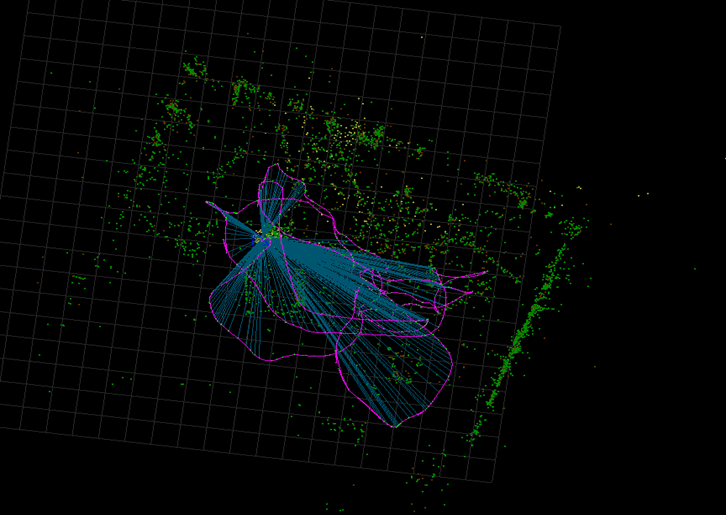

SLAM (Simultaneous Localization and Mapping) enables a platform to estimate its pose and concurrently build a 3D map of an unknown environment using sensor data. Because of its central role in AR/VR, autonomous driving, and robotics, SLAM has attracted wide attention in both academia and industry.

Overview

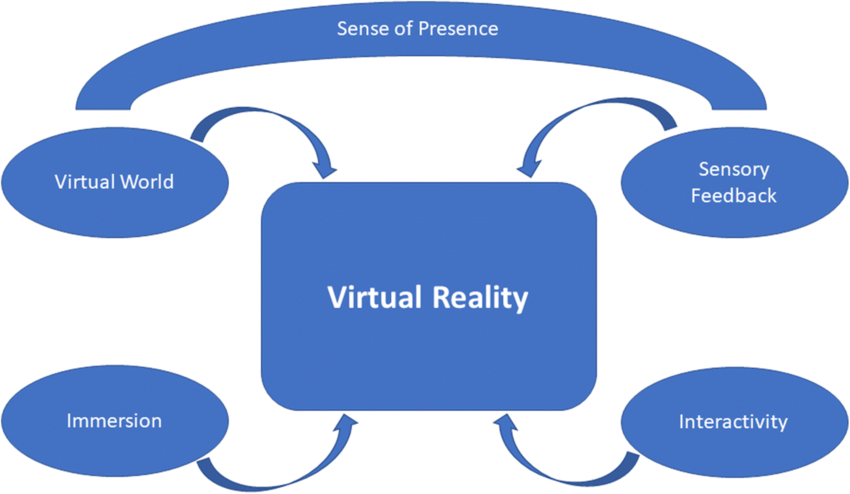

SLAM provides two key capabilities: spatial localization and environment reconstruction. Mapping captures the geometric structure of the physical world and enables overlaying virtual AR/VR content onto real scenes. Localization, often expressed as 6DoF motion tracking, ensures consistent alignment of virtual and real content from different viewpoints.
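To make the alignment idea concrete, the following minimal sketch projects a world-anchored virtual point into the image for two camera poses. It assumes a pinhole camera model and a pose given as a world-to-camera rotation and translation; the function name `world_to_pixel` and the intrinsic values are illustrative assumptions, not part of XRSLAM.

```python
def world_to_pixel(R_cw, t_cw, point_w, fx, fy, cx, cy):
    """Project a 3D world point into the image with a pinhole model.

    R_cw (3x3 nested list) and t_cw (length-3 list) map world coordinates
    into the camera frame: p_c = R_cw * p_w + t_cw.
    Returns (u, v) in pixels, or None if the point is not in front of the camera.
    """
    p_c = [sum(R_cw[i][j] * point_w[j] for j in range(3)) + t_cw[i]
           for i in range(3)]
    if p_c[2] <= 0.0:
        return None
    u = fx * p_c[0] / p_c[2] + cx
    v = fy * p_c[1] / p_c[2] + cy
    return (u, v)


# Hypothetical values: a virtual anchor 2 m in front of the world origin.
IDENTITY = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
anchor = [0.0, 0.0, 2.0]

# Camera at the world origin, looking along +z.
pixel_front = world_to_pixel(IDENTITY, [0.0, 0.0, 0.0], anchor,
                             500.0, 500.0, 320.0, 240.0)

# Camera moved 0.2 m along +x (so t_cw = -R_cw * camera_position = [-0.2, 0, 0]).
pixel_shifted = world_to_pixel(IDENTITY, [-0.2, 0.0, 0.0], anchor,
                               500.0, 500.0, 320.0, 240.0)
```

As the tracked pose changes, the same world point lands on a different pixel, which is what keeps a virtual object visually fixed in the real scene.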

XRSLAM Features

Modular design

XRSLAM is a C++ SLAM library in the OpenXRLab spatial computing platform. It implements a lightweight visual-inertial odometry (VIO) pipeline based on a monocular camera and an IMU, supports desktop and mobile platforms, and achieves near-state-of-the-art accuracy on public datasets such as EuRoC. It can process typical smartphone inputs in real time at around 30 fps.

Internally, the framework is modular: it accepts multiple sensor inputs and produces real-time camera poses through sensor fusion and optimization. The core VIO pipeline includes IMU preintegration, image feature matching, visual-IMU linear alignment for initialization, and sliding-window optimization. Feature matching is implemented with OpenCV optical flow.
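As an illustration of the IMU preintegration step, the sketch below accumulates the relative rotation, velocity, and position between two frames from raw gyroscope and accelerometer samples. It is a simplified textbook formulation, not XRSLAM's implementation: it assumes bias-corrected measurements, ignores noise covariance and bias Jacobians, and leaves gravity to be applied later when the preintegrated terms are used. All function names are hypothetical.

```python
import math


def mat_mul(A, B):
    """3x3 matrix product on nested lists."""
    return [[sum(A[i][k] * B[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]


def mat_vec(A, v):
    """3x3 matrix times length-3 vector."""
    return [sum(A[i][j] * v[j] for j in range(3)) for i in range(3)]


def so3_exp(w):
    """Rodrigues' formula: rotation vector -> rotation matrix."""
    theta = math.sqrt(sum(x * x for x in w))
    K = [[0.0, -w[2], w[1]], [w[2], 0.0, -w[0]], [-w[1], w[0], 0.0]]
    if theta < 1e-12:
        a, b = 1.0, 0.5  # small-angle limits of sin(t)/t and (1-cos(t))/t^2
    else:
        a = math.sin(theta) / theta
        b = (1.0 - math.cos(theta)) / (theta * theta)
    K2 = mat_mul(K, K)
    return [[(1.0 if i == j else 0.0) + a * K[i][j] + b * K2[i][j]
             for j in range(3)] for i in range(3)]


def preintegrate(samples):
    """Accumulate (dR, dv, dp) in the frame of the first sample.

    samples: iterable of (dt, gyro, accel), with gyro in rad/s and
    accel in m/s^2, both expressed in the IMU body frame.
    """
    dR = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
    dv = [0.0, 0.0, 0.0]
    dp = [0.0, 0.0, 0.0]
    for dt, gyro, accel in samples:
        a_rot = mat_vec(dR, accel)  # acceleration rotated into the start frame
        dp = [dp[i] + dv[i] * dt + 0.5 * a_rot[i] * dt * dt for i in range(3)]
        dv = [dv[i] + a_rot[i] * dt for i in range(3)]
        dR = mat_mul(dR, so3_exp([g * dt for g in gyro]))
    return dR, dv, dp


# Synthetic check 1: 1 s of constant 1 m/s^2 acceleration along x, no rotation.
dR_lin, dv_lin, dp_lin = preintegrate(
    [(0.01, [0.0, 0.0, 0.0], [1.0, 0.0, 0.0])] * 100)

# Synthetic check 2: 1 s of constant rotation about z at pi/2 rad/s.
dR_rot, _, _ = preintegrate(
    [(0.01, [0.0, 0.0, math.pi / 2], [0.0, 0.0, 0.0])] * 100)
```

Because the accumulated terms depend only on the IMU samples, they can be computed once per frame pair and reused each time the optimizer updates the frame states, which is the main reason preintegration suits sliding-window optimization.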

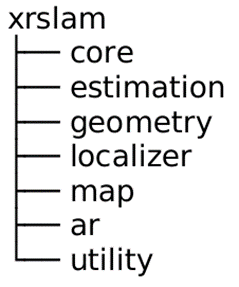

The codebase organizes functions and classes by categories such as core modules, state estimation, multi-view geometry, visual localization, map structure, AR rendering, and utilities, which facilitates extension and integration.

XRSLAM supports flexible multi-sensor configurations. The released version uses monocular camera plus IMU input; future extensions include stereo, depth cameras, and wide-angle optics.

Cross-platform development

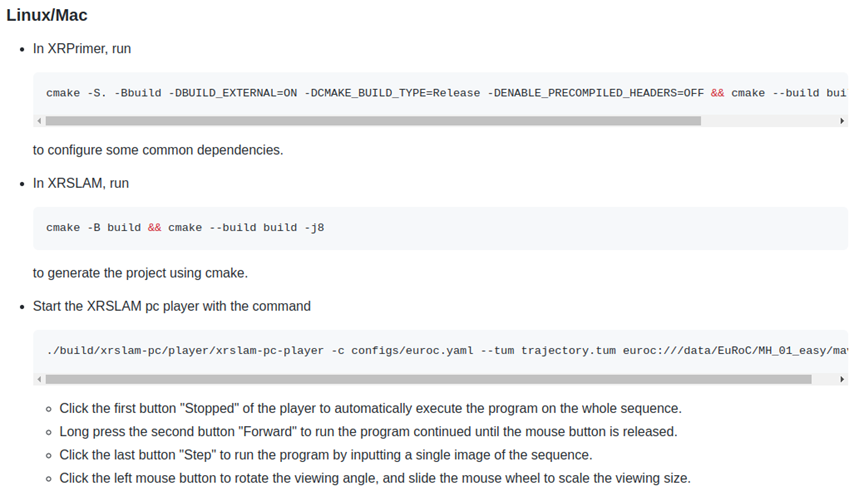

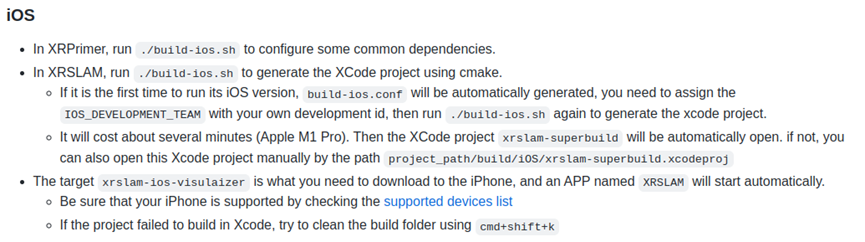

The core library depends only on widely used libraries such as Eigen, OpenCV, and Ceres Solver, all of which are included in the XRPrimer base library. XRSLAM can be built and run on Linux, macOS, Android, and iOS. The released package includes complete build flows and runnable demos for Linux/macOS and iOS.

Documentation and demos

Documentation and tutorials cover topics such as building and running on PC platforms and developing AR demos on mobile platforms.

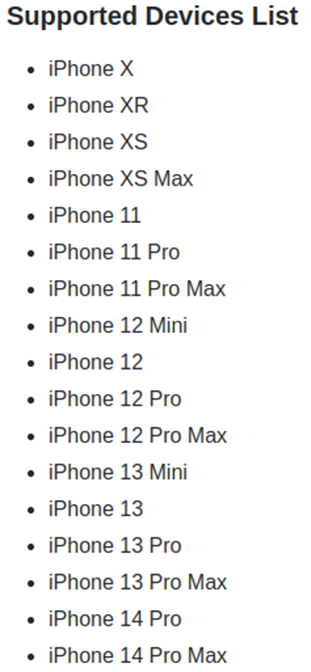

VIO requires the sensor parameters, such as camera intrinsics and camera-IMU extrinsics, to be calibrated beforehand. The project provides calibration parameters for recent iOS devices to simplify running the supplied AR demo.
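One place where calibration enters is the camera-IMU extrinsic transform, which converts an estimated IMU pose into a camera pose. The sketch below composes two rigid transforms for that purpose. The representation as `(R, t)` tuples, the function name `compose`, and the numeric values are assumptions chosen for illustration and do not reflect XRSLAM's configuration format.

```python
def compose(T_ab, T_bc):
    """Compose rigid transforms: T_ac = T_ab * T_bc.

    Each transform is (R, t), where R is a 3x3 nested list and t a
    length-3 list, mapping points from the right-hand frame to the
    left-hand one: p_a = R_ab * p_b + t_ab.
    """
    R_ab, t_ab = T_ab
    R_bc, t_bc = T_bc
    R_ac = [[sum(R_ab[i][k] * R_bc[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]
    t_ac = [sum(R_ab[i][k] * t_bc[k] for k in range(3)) + t_ab[i]
            for i in range(3)]
    return R_ac, t_ac


# Hypothetical IMU pose in the world: rotated 90 degrees about z, at (1, 0, 0).
T_world_imu = ([[0.0, -1.0, 0.0], [1.0, 0.0, 0.0], [0.0, 0.0, 1.0]],
               [1.0, 0.0, 0.0])

# Hypothetical extrinsics: camera 0.1 m along the IMU x axis, same orientation.
T_imu_cam = ([[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]],
             [0.1, 0.0, 0.0])

T_world_cam = compose(T_world_imu, T_imu_cam)
```

An error in the extrinsics propagates directly into every camera pose, which is why per-device calibration files matter for the AR demo.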

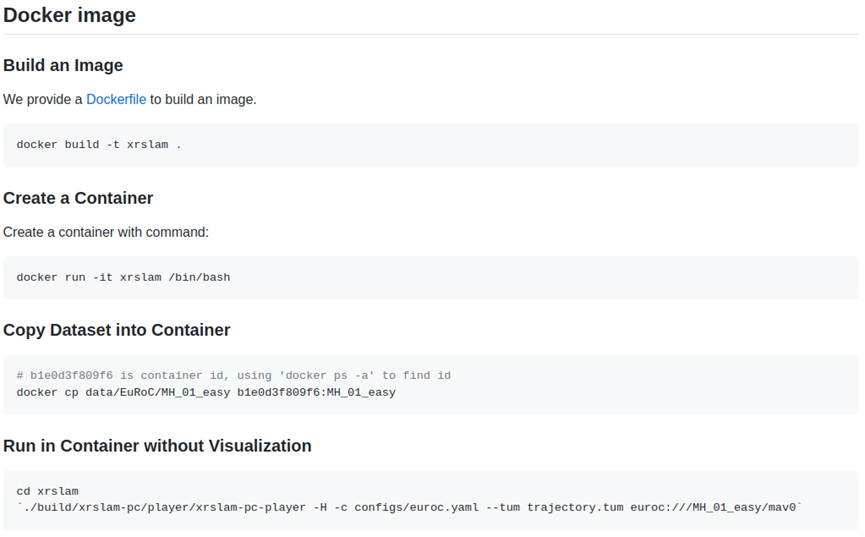

To reduce environment setup issues, a Docker image is provided to standardize the development environment.

Performance Metrics

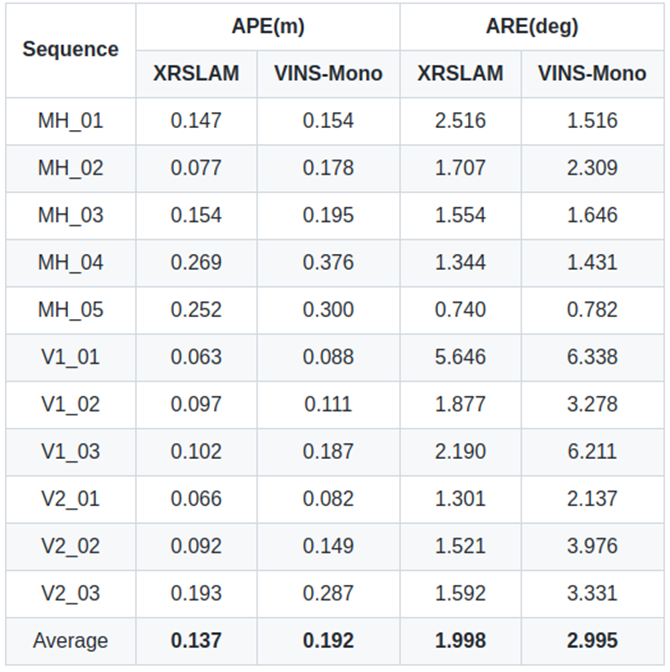

XRSLAM was evaluated on the EuRoC dataset and compared with VINS-Mono, whose results were reproduced from its open-source code. In this comparison, XRSLAM is clearly more accurate than VINS-Mono. XRSLAM also runs in real time on mobile devices, sustaining frame rates above 30 fps.

The reported accuracy figures were obtained without loop closure.
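Trajectory accuracy on datasets like EuRoC is commonly summarized as the root-mean-square error of the estimated positions against ground truth. The article does not state which metric was used, so the sketch below is only an assumption about how such a score could be computed. It further assumes the two trajectories are already time-associated and expressed in the same frame; the function name `translation_rmse` is hypothetical.

```python
import math


def translation_rmse(estimated, ground_truth):
    """RMSE of position error between two equally long, aligned trajectories.

    Each trajectory is a sequence of (x, y, z) positions in metres.
    """
    if len(estimated) != len(ground_truth) or len(estimated) == 0:
        raise ValueError("trajectories must be non-empty and equally long")
    squared_error = 0.0
    for est, gt in zip(estimated, ground_truth):
        squared_error += sum((e - g) ** 2 for e, g in zip(est, gt))
    return math.sqrt(squared_error / len(estimated))


# Synthetic example: each estimated position is off by 0.1 m.
est_positions = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
gt_positions = [(0.0, 0.0, 0.1), (1.0, 0.1, 0.0)]
rmse = translation_rmse(est_positions, gt_positions)
```

In practice the estimated trajectory is first aligned to the ground truth with a rigid transform before the error is computed; that alignment step is omitted here.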

Conclusion

SLAM is a mature yet active research area with many open challenges. XRSLAM offers an open, concise, cross-platform, and extensible base for further development and research in SLAM and spatial computing.

ALLPCB
