Overview

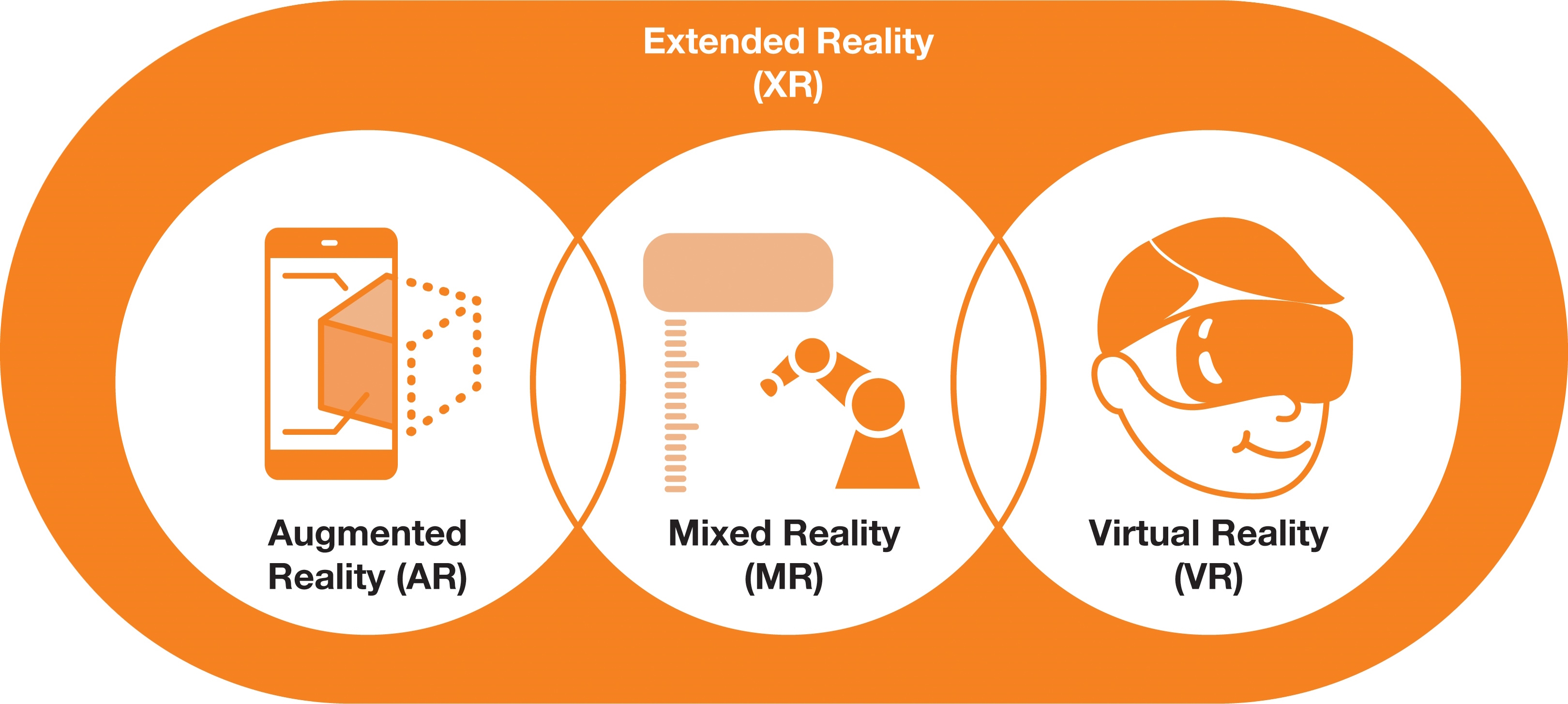

For engineers working in AR, VR, XR, product design, or simulation, ray tracing is a technology worth understanding. It is one of the most significant advances in computer graphics since the emergence of 3D rendering, and more efficient implementations are moving it from specialized film and advertising production into mobile, wearable, and automotive embedded markets.

Why lighting matters

The realism of any 3D scene depends largely on lighting. In traditional rasterized rendering, light maps and shadow maps are typically computed ahead of time and then applied to a scene to simulate its appearance. This can produce attractive results, but these techniques are ultimately approximations of lighting. Ray tracing instead simulates how light behaves in the real world, so reflections, translucency, emission, and material appearance are computed from the scene itself and come out more accurate.

In the real world, light rays leave a source, strike objects, and interact with them. Depending on each surface's properties, a ray is partly absorbed and partly reflected toward other surfaces, and the process repeats. The accumulated result is the pattern of light and shadow we see.
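The simplest case of a ray reflecting to another surface is a perfect mirror bounce. The sketch below is only an illustration of that one case, using the standard reflection formula r = d - 2(d·n)n. The function names `dot` and `reflect` are hypothetical helpers, and real materials generally scatter light in more complicated ways than this.

```python
def dot(a, b):
    """Dot product of two 3D vectors given as tuples."""
    return sum(x * y for x, y in zip(a, b))

def reflect(direction, normal):
    """Mirror-reflect an incoming direction about a unit-length surface normal."""
    k = 2.0 * dot(direction, normal)
    return tuple(d - k * n for d, n in zip(direction, normal))

# A ray travelling down and to the right hits a floor whose normal points up.
incoming = (1.0, -1.0, 0.0)
floor_normal = (0.0, 1.0, 0.0)
print(reflect(incoming, floor_normal))  # (1.0, 1.0, 0.0)
```

The reflected ray keeps its horizontal motion and flips its vertical component, which is what one would expect from a mirror-like floor.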

How ray tracing works in computers

In computers, ray tracing (or, more precisely, path tracing) works in the reverse direction from real-world photon paths. Rays are cast from the camera viewpoint into the scene, and algorithms compute how each ray interacts with the surfaces it hits based on those surfaces' properties. Each ray is then traced as it bounces between objects until it reaches a light source. The result is a scene lit in a way that resembles illumination by a real-world light source, with realistic reflections and shadows.
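The camera-first process can be sketched in a few lines. This is a deliberately reduced illustration, not a path tracer: it assumes a single sphere, a single point light, and direct (Lambertian) lighting only, so rays are not followed through further bounces. The scene values and all function names (`hit_sphere`, `shade`, `render`) are hypothetical and chosen for this example.

```python
import math

# All names below are illustrative; vectors are plain (x, y, z) tuples.
def add(a, b): return (a[0] + b[0], a[1] + b[1], a[2] + b[2])
def sub(a, b): return (a[0] - b[0], a[1] - b[1], a[2] - b[2])
def scale(a, s): return (a[0] * s, a[1] * s, a[2] * s)
def dot(a, b): return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]
def normalize(a): return scale(a, 1.0 / math.sqrt(dot(a, a)))

def hit_sphere(origin, direction, center, radius):
    """Distance along a unit-length ray to the nearest sphere hit, or None."""
    oc = sub(origin, center)
    b = 2.0 * dot(oc, direction)
    c = dot(oc, oc) - radius * radius
    disc = b * b - 4.0 * c  # quadratic discriminant with a = 1
    if disc < 0.0:
        return None  # the ray misses the sphere
    t = (-b - math.sqrt(disc)) / 2.0
    return t if t > 1e-4 else None  # ignore hits at or behind the ray origin

def shade(origin, direction, center, radius, light_pos):
    """Brightness in [0, 1] seen along one camera ray (direct light only)."""
    t = hit_sphere(origin, direction, center, radius)
    if t is None:
        return 0.0  # background
    point = add(origin, scale(direction, t))
    normal = normalize(sub(point, center))
    to_light = normalize(sub(light_pos, point))
    return max(0.0, dot(normal, to_light))  # Lambertian term

def render(width=32, height=16):
    """Cast one ray per character cell and return the image as text rows."""
    camera = (0.0, 0.0, 0.0)        # assumed camera position, looking down -z
    center, radius = (0.0, 0.0, -3.0), 1.0
    light_pos = (2.0, 2.0, 0.0)
    ramp = " .:-=+*#"               # dark to bright
    rows = []
    for y in range(height):
        row = ""
        for x in range(width):
            u = (x + 0.5) / width * 2.0 - 1.0
            v = 1.0 - (y + 0.5) / height * 2.0
            direction = normalize((u, v, -1.0))
            brightness = shade(camera, direction, center, radius, light_pos)
            row += ramp[min(len(ramp) - 1, int(brightness * len(ramp)))]
        rows.append(row)
    return rows

print("\n".join(render()))
```

Running it prints a small ASCII image of a sphere lit from the upper right. A fuller tracer would spawn additional rays at each hit point for reflections and shadows, which is where most of the computational cost comes from.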

Real-time performance challenges

Historically, real-time execution of this process has been infeasible for embedded devices and even high-end workstations because of the heavy computational load.

Many people first encountered ray tracing in animated feature films, for example in the opening shot of Toy Story 4, which shows reflections in a puddle. Those scenes, however, required months of rendering on dedicated server farms. That is not an option for games, where scenes must be generated in real time at 30 frames per second or higher.
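A rough calculation shows what the 30 frames per second requirement means in practice. The 1920x1080 resolution and the one-ray-per-pixel figure below are assumptions chosen for illustration, and the function names are hypothetical. Practical renderers usually need several rays per pixel once bounces are counted, so this is closer to a lower bound.

```python
def frame_budget_ms(fps):
    """Time available to produce one frame, in milliseconds."""
    return 1000.0 / fps

def primary_rays_per_second(width, height, fps, samples_per_pixel=1):
    """Camera rays needed per second, before any bounces are counted."""
    return width * height * samples_per_pixel * fps

print(round(frame_budget_ms(30), 1))             # 33.3
print(primary_rays_per_second(1920, 1080, 30))   # 62208000
```

Under these assumptions, every frame must be finished in about 33 ms, and the renderer has to cast roughly 62 million camera rays per second before tracing a single bounce.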

Recent advances and hybrid approaches

The computational cost that kept real-time ray tracing out of games has been reduced by hybrid methods, which combine the speed of rasterization with the visual accuracy of ray tracing. Running a game with ray tracing features on a phone would have been unthinkable a few years ago, but it is now becoming feasible.

Although real-time ray tracing solutions for mobile devices have existed for some time, a complete supporting ecosystem was initially lacking. That has been changing. In 2018, NVIDIA released hardware for the desktop gaming market that enabled hybrid real-time ray tracing. Even NVIDIA did not have games that fully exploited the hardware at launch, which shows how hard it is to build an ecosystem around a new technology. Since then, many game and engine developers, including Bethesda and Unity, have started to adopt ray tracing, and the next-generation consoles introduced in 2020 incorporated ray tracing features. Ray tracing is also expected to appear in other markets, such as AR and VR.

Implications for engineers

As ray tracing becomes more accessible and people begin to experience it on personal machines, demand will grow for ray tracing in mobile devices, VR headsets, and game consoles. Engineers should become familiar with the latest developments in this technology to keep pace with the evolving industry.

ALLPCB
