Overview

I have worked in AR for several years with a focus on visual localization. This article summarizes several research directions and technical challenges in AR visual positioning.

AR and VR: basic distinction

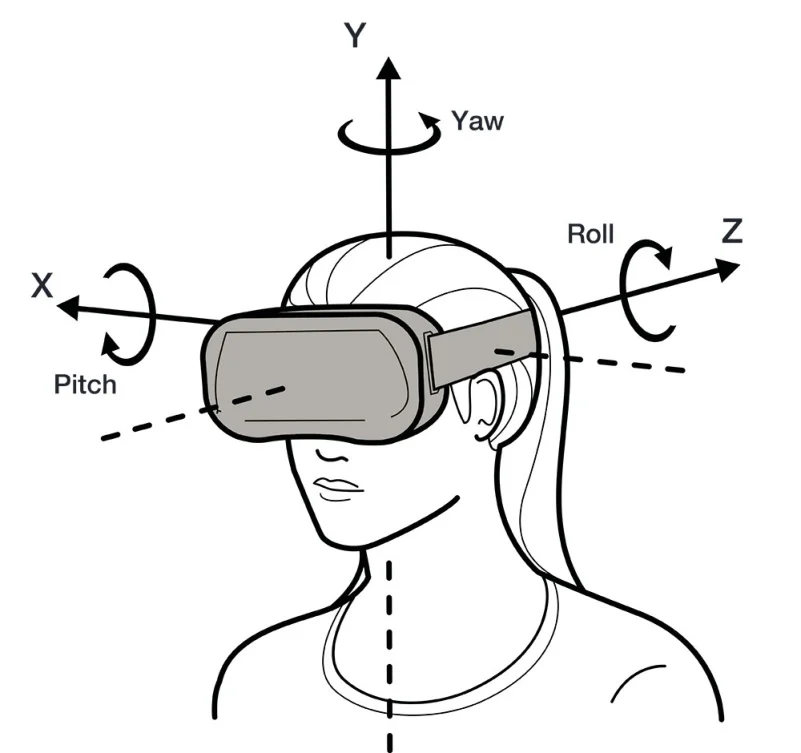

VR (virtual reality) replaces the real world with a virtual one and typically requires a bulky headset. AR (augmented reality) blends virtual content with the real world, and it is a key technology for the metaverse. AR devices are generally much lighter than VR headsets. For most users, smartphones are the most direct and convenient entry point to AR, while AR glasses represent the next generation of devices.

Core technology: visual localization

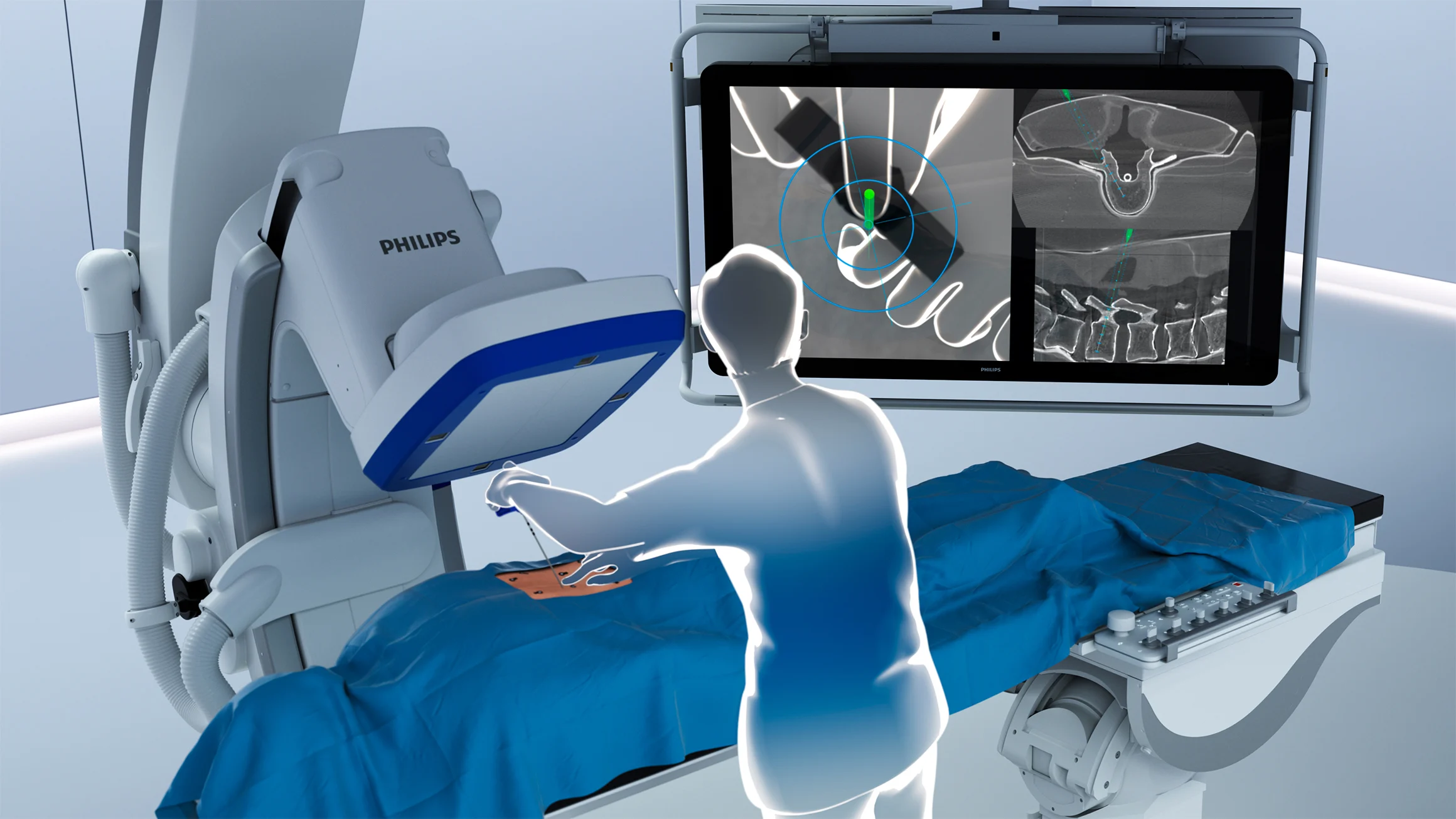

The core AR technology is visual localization, also referred to as spatial computing. A visual positioning system (VPS) is usually split into two layers: the map construction layer and the localization service layer. Map construction can use SLAM or Structure from Motion (SfM); SfM is currently the dominant approach. SfM is widely used in visual 3D reconstruction pipelines (SfM, MVS, meshing, texturing) and corresponds to the aerial triangulation module in photogrammetry. A company that only replicates mature 3D reconstruction software such as PhotoScan, RealityCapture, ContextCapture, Inpho, or Pix4D is unlikely to succeed, unless it is a major firm pursuing an in-house implementation for strategic reasons. The global localization service layer depends on the prior map produced by the map construction stage.

Current VPS work items

Map construction layer

1) Large-scale AR map construction. SfM scales poorly as the number of photos increases: if every image pair is matched exhaustively, 30,000 images already give roughly 450 million candidate pairs. How can maps of 30,000 or 100,000 images be built efficiently? Running out-of-the-box tools such as COLMAP or OpenMVG on datasets of this size often leads to unacceptable project delivery timelines.

2) Automated map quality assessment. Traditional evaluation uses costly lidar or manual total-station surveying to measure accuracy, which is time-consuming and labor-intensive.

3) Map storage and simplification. A scene can generate a huge number of 3D points, including elements useful for localization and many points that are irrelevant. Storing everything is impractical, so map simplification strategies are required.
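To make item 3 more concrete, here is a minimal sketch of one possible simplification strategy: greedily keep the points that help the most under-covered images, and stop once every mapping image still sees enough kept points. The function name `simplify_map`, the `min_points_per_image` parameter, and the toy data are all hypothetical; a production system would also weigh descriptor quality, spatial distribution, and semantics.

```python
def simplify_map(observations, min_points_per_image=2):
    """Greedily choose 3D points so each image keeps enough visible points.

    observations: dict mapping a point id to the set of image ids observing it.
    Returns the kept point ids in the order they were selected.
    """
    # Remaining number of kept points each image still needs (assumed target).
    need = {img: min_points_per_image
            for images in observations.values() for img in images}
    candidates = sorted(observations)  # sorted for deterministic tie-breaking
    kept = []

    def gain(point_id):
        # Number of still under-covered images that observe this point.
        return sum(1 for img in observations[point_id] if need[img] > 0)

    while candidates:
        best = max(candidates, key=gain)
        if gain(best) == 0:
            break  # no remaining point helps any under-covered image
        kept.append(best)
        candidates.remove(best)
        for img in observations[best]:
            if need[img] > 0:
                need[img] -= 1
    return kept


# Toy map: five points observed by three images "a", "b", "c".
example = {
    "p1": {"a", "b", "c"},
    "p2": {"a", "b"},
    "p3": {"c"},
    "p4": {"a"},
    "p5": {"b", "c"},
}
print(simplify_map(example, min_points_per_image=2))
```

On this toy input the sketch keeps three of the five points while every image still observes at least two of them. The greedy rule is only a heuristic and does not guarantee the smallest possible map.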

Localization service layer

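4) Long-term robustness. Approaches such as SuperPoint plus SuperGlue are effective in many scenarios, but staying robust over the long term, as a scene's appearance changes with lighting, weather, season, and rearranged content, remains a key challenge.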

5) Sparse matches and pose reliability. Even with state-of-the-art algorithms such as SuperPoint and SuperGlue, some scenes yield very few matches, which produces unreliable PnP poses. Is a purely point-based map sufficient, or should the map representation be extended beyond sparse points?

6) Multi-source map updates and crowdsourcing. This is one of the hardest problems and sits at the intersection of map construction and localization services. Mapping sensors and end-user device sensors may differ, yet data captured by many users with phones or glasses can enrich and complete prior maps. How can mobile or wearable captures be used to update and maintain maps in real time?
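The pose reliability concern in item 5 can be illustrated with a small sanity check. The sketch below does not solve PnP itself; it assumes a pose (rotation `R`, translation `t`) has already been estimated by some PnP solver, reprojects the matched 3D points with a simple pinhole model, and accepts the pose only if enough matches agree with it. The function names and the thresholds `max_error_px` and `min_inliers` are hypothetical and would need tuning per device and scene.

```python
import math


def project(point, R, t, fx, fy, cx, cy):
    """Pinhole projection of a world point; returns None if behind the camera."""
    # Camera-frame coordinates: X_c = R * X_w + t
    xc = [sum(R[r][k] * point[k] for k in range(3)) + t[r] for r in range(3)]
    if xc[2] <= 0:
        return None
    return (fx * xc[0] / xc[2] + cx, fy * xc[1] / xc[2] + cy)


def count_inliers(points3d, points2d, R, t, intrinsics, max_error_px=3.0):
    """Count 2D-3D matches whose reprojection error is within max_error_px."""
    fx, fy, cx, cy = intrinsics
    inliers = 0
    for p3, p2 in zip(points3d, points2d):
        uv = project(p3, R, t, fx, fy, cx, cy)
        if uv is None:
            continue
        if math.hypot(uv[0] - p2[0], uv[1] - p2[1]) <= max_error_px:
            inliers += 1
    return inliers


def pose_is_reliable(points3d, points2d, R, t, intrinsics,
                     min_inliers=12, max_error_px=3.0):
    """Accept a pose only if it is supported by enough inlier matches."""
    n = count_inliers(points3d, points2d, R, t, intrinsics, max_error_px)
    return n >= min_inliers


# Toy example: identity pose, four matches, one of them a gross outlier.
R = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
t = [0.0, 0.0, 0.0]
K = (500.0, 500.0, 320.0, 240.0)  # fx, fy, cx, cy (assumed values)
pts3d = [(0, 0, 2), (1, 0, 2), (0, 1, 4), (-1, -1, 5)]
pts2d = [(320, 240), (570, 240), (320, 365), (270, 140)]
print(count_inliers(pts3d, pts2d, R, t, K))
print(pose_is_reliable(pts3d, pts2d, R, t, K, min_inliers=4))
```

A gate like this lets the service return "no pose" when matches are too sparse, which is usually preferable to returning a wrong pose.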

Map-based vs mesh-based localization

Most VPS techniques are map-based. An alternative line of research questions whether large-scale 3D models are strictly necessary. See the paper "Are Large-Scale 3D Models Really Necessary for Accurate Visual Localization?", which asks whether a large 3D model is needed at all, and the later work "MeshLoc: Mesh-Based Visual Localization", which explores localizing against a mesh instead of an SfM point map.

Summary of optimization points

Possible optimization areas include scalable SfM for very large photo collections, automated map quality evaluation, map compression and semantics-aware simplification, long-term matching robustness, enriching map representations beyond sparse points, and robust crowdsourced map updating.

ALLPCB
