Today, robots are increasingly used in manufacturing and warehouse logistics. In these environments, human workers and robots often occupy separate activity spaces, which makes enabling meaningful interaction between them an important challenge.

Communication Gaps Between Humans and Robots

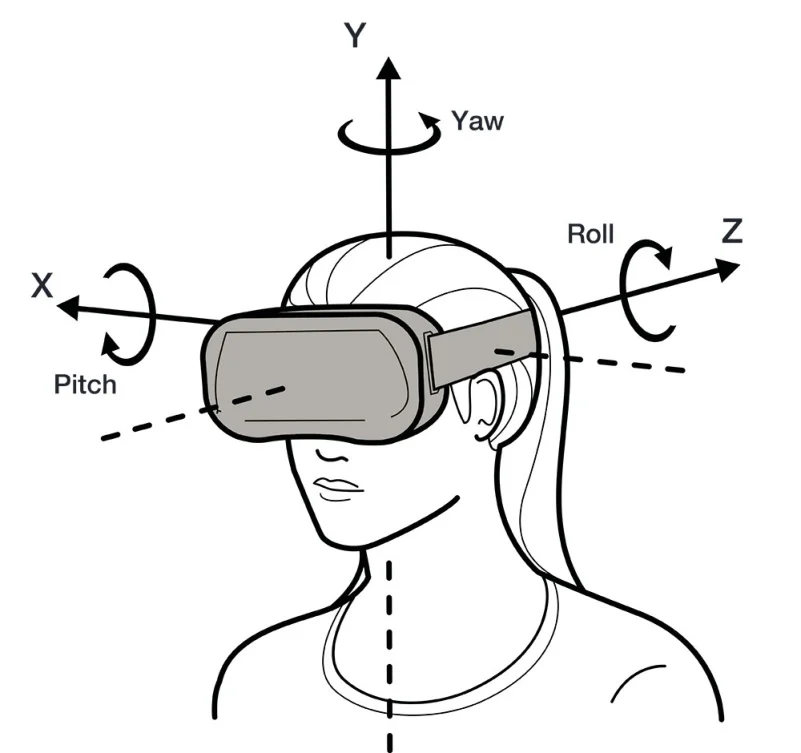

Humans typically communicate using language and gestures, while robots exchange information in digital forms such as textual commands. This mismatch can cause miscommunication between humans and robots.

Augmented reality (AR) can visualize robot behaviors to bridge this communication gap. AR also offers a high-bandwidth, low-ambiguity alternative communication channel.

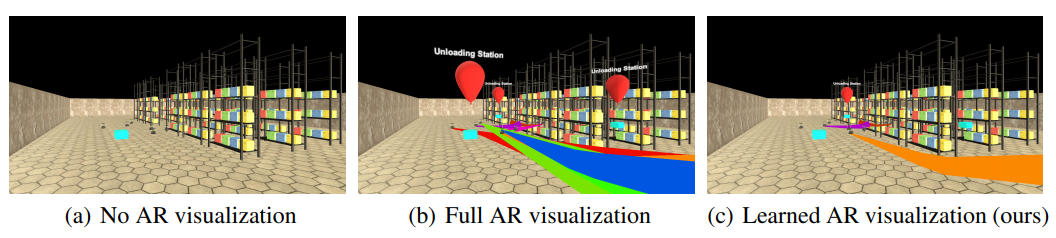

Current AR visualization systems are manually designed and can suffer from either too little or too much visual information. If visualization is too sparse, the AR interface fails to assist users; if it is too dense, users may be overwhelmed.

VARIL: Imitation-Learned AR Visualizations

To improve interaction between humans and multi-robot teams, researchers applied imitation learning to make AR visualizations adaptive. They developed the Visualizations for Augmented Reality using Imitation Learning (VARIL) framework.

The paper's authors are Kishan Chandan (PhD student), Jack Albertson (undergraduate), and Shiqi Zhang, from the Department of Computer Science at Binghamton University, State University of New York.

How VARIL Supports Effective Interaction

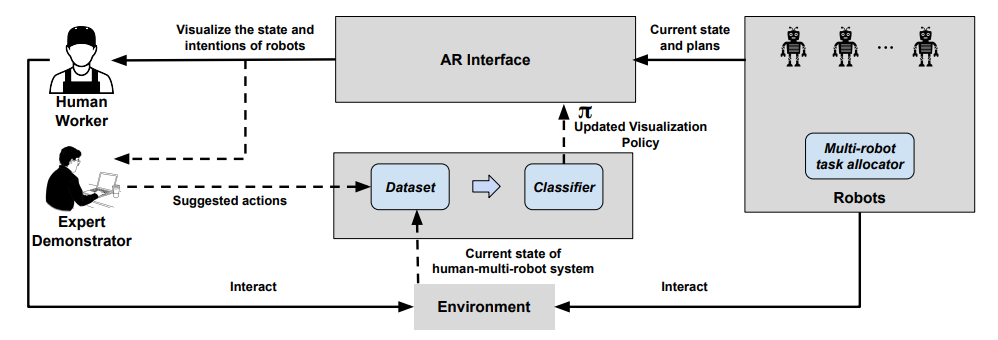

VARIL helps humans and robots interact effectively by analyzing the states of robots and humans to decide what information should be visualized, when, and in what form.

Figure: VARIL framework

Concretely, VARIL uses an AR interface to track the states of multiple robots simultaneously and dynamically selects AR visualization actions, for example displaying robot positions and planned trajectories so that a human handler can perceive them.
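To make "selecting a visual action per robot from the joint state" concrete, here is a minimal Python sketch of the interface such a system might expose. The class names, the three actions (`hide`, `show_position`, `show_trajectory`), and the distance-based rule are all hypothetical placeholders; in VARIL the mapping from state to visual action is learned from expert demonstrations rather than hand-coded as it is here.

```python
import math
from dataclasses import dataclass, field
from typing import Dict, List, Tuple

Point = Tuple[float, float]


@dataclass
class RobotState:
    robot_id: int
    position: Point
    waiting_for_unload: bool = False
    planned_path: List[Point] = field(default_factory=list)


# Hypothetical discrete visual actions an AR agent could choose for each robot.
ACTIONS = ("hide", "show_position", "show_trajectory")


def select_visual_actions(robots: List[RobotState], human_pos: Point,
                          near_radius: float = 5.0) -> Dict[int, str]:
    """Hand-written placeholder policy: one visual action per robot (not VARIL's learned policy)."""
    actions: Dict[int, str] = {}
    for robot in robots:
        dist = math.dist(robot.position, human_pos)
        if robot.waiting_for_unload:
            # A robot waiting for help is highlighted wherever it is.
            actions[robot.robot_id] = "show_position"
        elif dist <= near_radius and robot.planned_path:
            # Nearby moving robots: show where they are heading.
            actions[robot.robot_id] = "show_trajectory"
        else:
            # Distant, non-waiting robots are hidden to limit clutter.
            actions[robot.robot_id] = "hide"
    return actions


robots = [
    RobotState(0, (1.0, 1.0), planned_path=[(2.0, 1.0), (3.0, 1.0)]),
    RobotState(1, (20.0, 5.0), waiting_for_unload=True),
    RobotState(2, (30.0, 30.0), planned_path=[(31.0, 30.0)]),
]
print(select_visual_actions(robots, human_pos=(0.0, 0.0)))
# {0: 'show_trajectory', 1: 'show_position', 2: 'hide'}
```

The point of the sketch is the shape of the decision (joint state in, per-robot visual action out); the learned policy replaces the `if`/`elif` rule with behavior copied from an expert.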

VARIL models two distinct human roles: a worker and an expert. In a scenario where a group of robots and a human operate in the same space, robots continuously share state and plans through the AR interface while the human uses the interface to monitor the robot team.

Human workers collaborate with the robot team to complete tasks. During the demonstration phase, a human expert indicates which information should be shown or hidden at specific times. Each time a new policy is learned from these demonstrations, the AR device updates its visualizations to mimic the expert's choices. The expert participates only during policy learning; once the device has learned the policy, the expert is no longer required.
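The split between a demonstration phase and expert-free deployment can be illustrated with the simplest form of imitation learning, behavior cloning, here implemented as a 1-nearest-neighbor lookup. The features, labels, and numbers below are hypothetical, and the article does not describe VARIL's actual learner; the sketch only shows that once the demonstrations are recorded, `cloned_policy` answers new states without consulting the expert.

```python
import math
from typing import List, Tuple

# Hypothetical per-robot features: (distance_to_human_m, seconds_waiting_at_station).
# Features are left unnormalized for simplicity; a real learner would scale them.
Features = Tuple[float, float]

# Expert demonstrations: each state labeled with the visual action the expert chose.
demos: List[Tuple[Features, str]] = [
    ((2.0, 0.0), "show_trajectory"),
    ((3.5, 0.0), "show_trajectory"),
    ((15.0, 0.0), "hide"),
    ((25.0, 0.0), "hide"),
    ((12.0, 20.0), "show_position"),
    ((30.0, 45.0), "show_position"),
]


def cloned_policy(state: Features) -> str:
    """1-nearest-neighbor behavior cloning: copy the expert's label of the closest demo state."""
    _, action = min(demos, key=lambda demo: math.dist(demo[0], state))
    return action


# Deployment: the expert is no longer queried.
print(cloned_policy((1.0, 0.0)))    # show_trajectory
print(cloned_policy((18.0, 0.0)))   # hide
print(cloned_policy((28.0, 40.0)))  # show_position
```

Plain behavior cloning trains only on states the expert happened to demonstrate, which is one reason iterative variants that collect labels on the learner's own states are often preferred.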

Task Performance Under Different AR Visualization Strategies

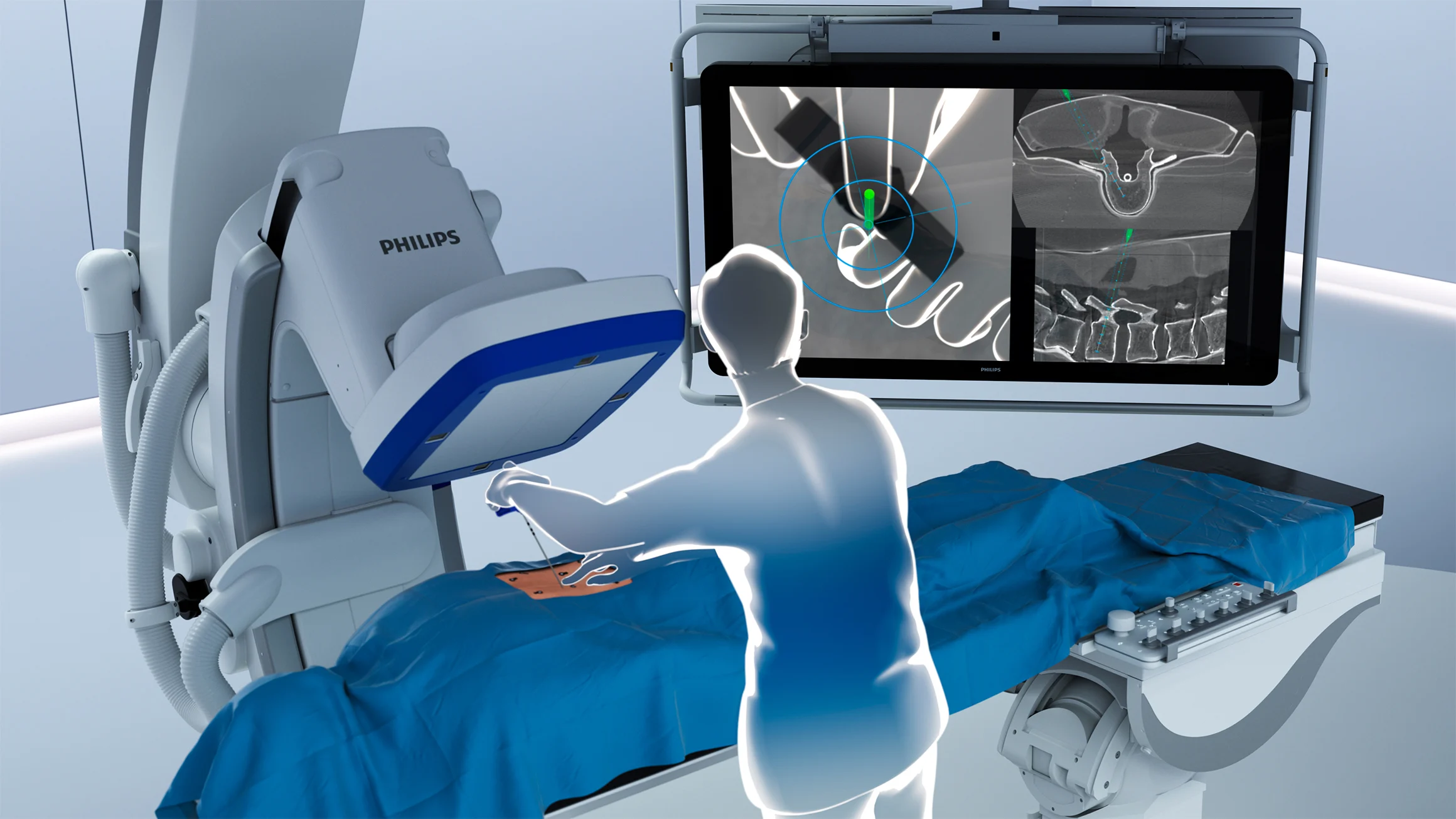

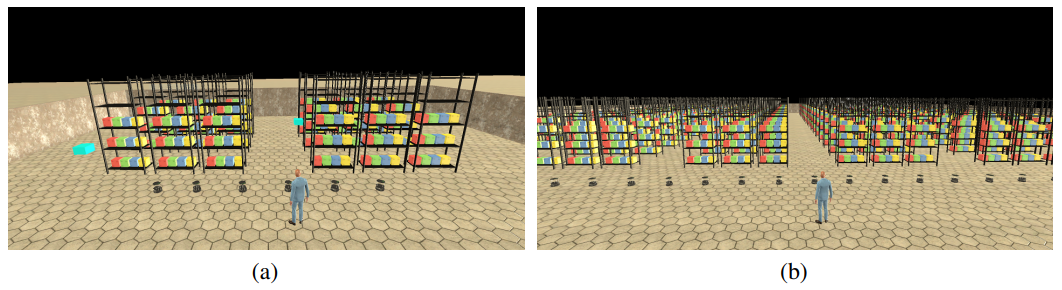

To examine how a virtual human and robots perform tasks under different AR visualization strategies, the researchers built a simulated warehouse testbed that serves as an integrated lab environment. The platform supports human-robot collaboration and the empirical evaluation of the VARIL framework.

Figure: Virtual human and robots completing tasks under different AR visualization strategies

In the simulated warehouse, the researchers configured 12 robots and one virtual human to cooperate on delivery tasks. Robots transported objects to various drop-off stations, where the human helped unload the robots waiting there.

Figure: Two warehouse scales used in experiments

Without AR visualization, human workers cannot determine robot positions or plans. With full AR visualization, the virtual human can be overwhelmed by visual indicators. Under imitation-learned AR visualization (VARIL), the AR agent uses a learned policy to dynamically select visualization strategies based on human-robot interactions.
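The three strategies can be compared as functions that map the current robot states to the set of robots whose indicators are drawn. This is an illustrative sketch only: the two boolean features and `stub_policy` are hypothetical stand-ins, not the trained VARIL policy.

```python
from typing import Callable, Dict, Set, Tuple

State = Tuple[bool, bool]  # hypothetical per-robot features: (is_waiting, is_near_human)
Strategy = Callable[[Dict[int, State]], Set[int]]


def no_visualization(states: Dict[int, State]) -> Set[int]:
    """No AR: the human sees no robot positions or plans."""
    return set()


def full_visualization(states: Dict[int, State]) -> Set[int]:
    """Full AR: every robot's indicators are drawn, relevant or not."""
    return set(states)


def learned_visualization(policy: Callable[[State], str]) -> Strategy:
    """Learned AR: draw only the robots the policy chooses not to hide."""
    def strategy(states: Dict[int, State]) -> Set[int]:
        return {rid for rid, s in states.items() if policy(s) != "hide"}
    return strategy


def stub_policy(state: State) -> str:
    """Hypothetical stand-in for a trained policy: highlight waiting robots only."""
    is_waiting, _ = state
    return "show_position" if is_waiting else "hide"


# 12 robots as in the simulated warehouse; robots 3 and 7 are (hypothetically) waiting.
states = {rid: (rid in (3, 7), False) for rid in range(12)}
strategies = {
    "none": no_visualization,
    "full": full_visualization,
    "learned": learned_visualization(stub_policy),
}
for name, strategy in strategies.items():
    print(name, len(strategy(states)))
# none 0
# full 12
# learned 2
```

Sharing one signature across strategies makes it easy to swap them in the same simulation loop and compare outcomes such as robot waiting time.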

From these task runs, the researchers collected a dataset of 6,000 state-action pairs and trained the AR agent on it using iterative imitation learning.

Comparing visualization strategies showed that the number of conflicts between the agent's selected actions and the expert's decreased sharply as the number of imitation-learning iterations increased. The trained policy also reduced robot waiting time compared with the initial policy.
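The article does not name the specific iterative algorithm. A well-known member of this family is DAgger (dataset aggregation): roll out the current policy, have the expert relabel the states it visited, add them to the dataset, and retrain. The sketch below uses DAgger as a stand-in on a hypothetical toy environment (the `expert` rule, the `step` dynamics, and the two boolean features are invented for illustration) and records, per iteration, the fraction of visited states where the policy disagrees with the expert, which is one plausible reading of the "conflicts" described above.

```python
import random
from collections import Counter, defaultdict
from typing import Callable, Dict, List, Tuple

State = Tuple[bool, bool]  # hypothetical features: (robot_is_waiting, robot_is_near_human)
Policy = Callable[[State], str]


def expert(state: State) -> str:
    """Hypothetical expert labeler; queried only during training."""
    waiting, near = state
    if waiting:
        return "show_position"
    return "show_trajectory" if near else "hide"


def step(state: State, action: str, rng: random.Random) -> State:
    """Toy dynamics: a waiting robot stays stuck until the AR agent makes it visible."""
    waiting, _ = state
    if waiting:
        waiting = action == "hide"  # if shown, the human notices and unloads it
    else:
        waiting = rng.random() < 0.3  # robot reaches a drop-off station
    return (waiting, rng.random() < 0.5)


def fit(dataset: List[Tuple[State, str]]) -> Policy:
    """Tabular learner: majority expert label per state, 'hide' for unseen states."""
    votes: Dict[State, Counter] = defaultdict(Counter)
    for state, action in dataset:
        votes[state][action] += 1
    table = {s: counts.most_common(1)[0][0] for s, counts in votes.items()}
    return lambda s: table.get(s, "hide")


def initial_policy(state: State) -> str:
    return "hide"  # untrained agent shows nothing


def dagger(iterations: int = 5, episodes: int = 20, horizon: int = 10, seed: int = 0):
    rng = random.Random(seed)
    dataset: List[Tuple[State, str]] = []
    policy: Policy = initial_policy
    conflict_rates: List[float] = []
    for _ in range(iterations):
        # 1. Roll out the current policy so the learner visits its own states.
        visited: List[State] = []
        for _ in range(episodes):
            state = (False, rng.random() < 0.5)
            for _ in range(horizon):
                visited.append(state)
                state = step(state, policy(state), rng)
        # 2. Conflicts: visited states where the policy disagrees with the expert.
        conflicts = sum(policy(s) != expert(s) for s in visited)
        conflict_rates.append(conflicts / len(visited))
        # 3. Aggregate expert labels for those states and retrain on all data so far.
        dataset.extend((s, expert(s)) for s in visited)
        policy = fit(dataset)
    return policy, conflict_rates


policy, rates = dagger()
print([round(r, 2) for r in rates])
```

Because the toy expert is deterministic and the state space has only four states, conflicts fall to zero once every state has been labeled; with richer states and a function approximator, the decline would be gradual and need not reach zero.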

The researchers also evaluated VARIL's effect on user experience with 25 human participants. In the simulated warehouse, each participant controlled a virtual human and completed a subjective questionnaire.

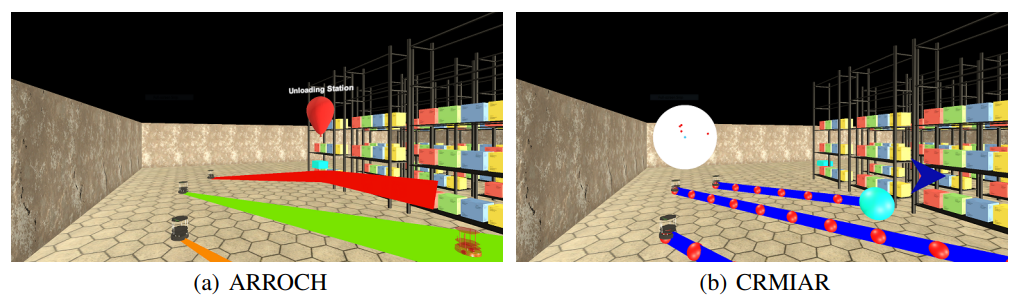

Figure: Two baseline visualization systems used for comparison

Results from human-subject experiments indicated that VARIL significantly improved human-robot interaction efficiency and reduced user distraction compared with two baseline visualization systems from the literature.

Quantitatively, VARIL improved task completion efficiency for human-multi-robot teams and reduced the time robots waited to unload by 15%.
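Read as a relative reduction in mean robot waiting time, the 15% figure can be written as below. The symbols are illustrative: which baseline the comparison uses and how waiting time is averaged are not specified in this summary.

$$
\frac{\bar{t}_{\text{baseline}} - \bar{t}_{\text{VARIL}}}{\bar{t}_{\text{baseline}}} \approx 0.15
\quad\Longrightarrow\quad
\bar{t}_{\text{VARIL}} \approx 0.85\,\bar{t}_{\text{baseline}}
$$

For example, a hypothetical robot that would wait 60 s to be unloaded under the baseline would wait about 51 s under VARIL.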

Simulation and Future Work

Although the experiments used robots to demonstrate AR-based human-robot interaction, building and evaluating such systems in the real world is challenging. Real-world trials require human workers to walk between delivery stations, and each navigation operation can take considerable time. In multi-robot collaboration, a trial may also end early if a robot becomes stuck behind a dynamic obstacle.

Open-source simulators can replicate warehouse environments and avoid these issues. While simulators do not eliminate all human-robot collaboration challenges, they allow AR researchers to focus on algorithm design rather than extensive software engineering.

The research team plans to further evaluate VARIL's user experience through surveys and to optimize VR human-robot collaboration systems.

References

Kishan Chandan, Jack Albertson, Shiqi Zhang, CoRL (2022).

ALLPCB