Overview

BearID is a project that uses facial recognition to identify individual brown bears (Ursus arctos), estimate population size, and monitor their movements and health. Identifying known brown bears enables the team to develop and refine animal facial recognition methods that can be applied to other species.

Project Team and Motivation

ARM chief engineer Ed Miller leads BearID and is the project's software developer. He runs the nonprofit in his spare time with technical partner Mary Nguyen and conservation lead and field researcher Melanie Clapham. ARM's flexible working arrangements have allowed Ed time to test software in the field and develop usage guidelines. BearID aims to support conservation by adapting facial recognition methods for other threatened wildlife species.

Ed notes that scientists are under increasing pressure to draw broader conclusions from research with fewer resources. New techniques for monitoring wild brown bear populations and posing broader research questions could be especially valuable if they can be replicated using camera traps that monitor other species.

Approach

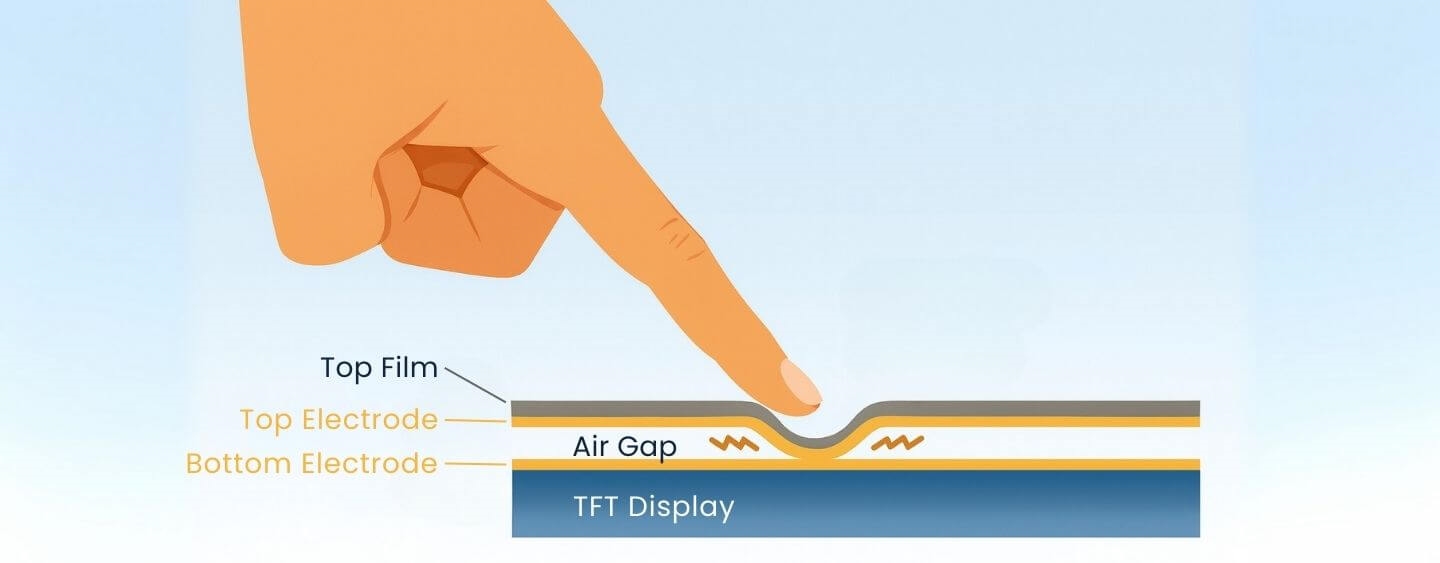

The detection software immediately identifies and marks the facial triangle formed by each bear's eyes and nose.

The idea for the project came when Ed and Mary were watching brown bears at Brooks Falls in Katmai National Park on the explore.org live cameras and considered applying deep learning and AI to detect the bears' faces and ears. Faces and ears are among the most stable features on bears, whereas weight, coat color, and markings can change seasonally. Focusing on these features also reduces the amount of data processing required for positive identification.
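One common use of eye and nose landmarks in face recognition pipelines is to normalize each face before comparison. The sketch below assumes that step: it takes three landmark points (the facial triangle) and computes the head roll angle, a scale factor, and the triangle's centroid. The function name, the `target_eye_dist` value, and the choice of normalization are illustrative assumptions, not a description of BearID's actual code.

```python
import math

def alignment_from_landmarks(left_eye, right_eye, nose, target_eye_dist=100.0):
    """Derive simple alignment parameters from the eye-eye-nose triangle.

    Points are (x, y) pixel coordinates. Returns (roll_deg, scale, centroid).
    target_eye_dist is a hypothetical normalized eye spacing in pixels.
    """
    dx = right_eye[0] - left_eye[0]
    dy = right_eye[1] - left_eye[1]
    eye_dist = math.hypot(dx, dy)
    if eye_dist == 0:
        raise ValueError("eye landmarks coincide")

    # Angle of the line between the eyes relative to the image x-axis.
    roll_deg = math.degrees(math.atan2(dy, dx))
    # Factor that would bring the eye spacing to the target distance.
    scale = target_eye_dist / eye_dist
    # Centroid of the triangle, usable as a crop center.
    centroid = (
        (left_eye[0] + right_eye[0] + nose[0]) / 3.0,
        (left_eye[1] + right_eye[1] + nose[1]) / 3.0,
    )
    return roll_deg, scale, centroid
```

Rotating by the negative of `roll_deg` and resizing by `scale` around `centroid` would give every face crop a comparable pose and size before it is passed to a recognizer.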

After reading about Google's FaceNet and reviewing work on dog-face recognition, the team built on the Dlib machine learning toolkit and related algorithms. Dogs have facial features similar to those of bears, so the dog-face work provided a useful starting point for training.
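FaceNet-style recognition maps each face to a numeric embedding and treats faces whose embeddings are close together as the same individual. The sketch below illustrates only that matching step, assuming the embeddings have already been produced by some network. The three-dimensional vectors, the bear labels, and the `threshold` value are made-up placeholders for illustration.

```python
import math

def euclidean(a, b):
    """Euclidean distance between two equal-length embedding vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def identify(query, gallery, threshold=0.6):
    """Return (name, distance) of the closest known bear.

    gallery maps a label to a reference embedding. If nothing is within
    the (arbitrary, illustrative) threshold, the name is None, meaning
    the face is treated as an unknown individual.
    """
    best_name, best_dist = None, float("inf")
    for name, embedding in gallery.items():
        dist = euclidean(query, embedding)
        if dist < best_dist:
            best_name, best_dist = name, dist
    if best_dist > threshold:
        return None, best_dist
    return best_name, best_dist

# Toy gallery with hypothetical labels; real embeddings are much longer.
gallery = {
    "bear_a": [0.1, 0.9, 0.2],
    "bear_b": [0.8, 0.1, 0.5],
}
name, dist = identify([0.12, 0.88, 0.21], gallery)
```

Returning `None` for distant queries matters in the field, where a camera trap may photograph a bear that is not yet in the reference set.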

Field Deployment

BearID deployed test camera traps disguised on trees. The project began in 2016, when Ed and Mary started exploring what deep learning could do. Melanie Clapham joined the following year and began using BearID in the field. The first test site was Katmai National Park, where explore.org broadcasts live summer footage of bears fishing at the falls.

The team’s dataset of 150 includes a second group of bears from the Knight Inlet area of British Columbia, where much of Clapham's research is based. Beyond counting wild bears and monitoring population trends, the work now also addresses habitat and land-management questions.

Clapham built a camera-trap network to monitor movements and observe how bears use different areas, since land use can shift between good and bad years. Some territories are on Indigenous lands, and Clapham has developed collaborative approaches with local communities to provide counts, locations, and other information that informs regulation and land management.

Ed and Mary are working to expand the application to cover all eight bear species. Many of these bears are in human care, such as in zoos or sanctuaries, and photographing them is often easier. The team has completed an initial full recognition test for the Andean bear and is beginning to extend the work to other bears.

While brown bears have limited contact with humans in remote areas of Alaska and British Columbia, in other regions interactions are closer. In South America, human-bear conflicts are a significant issue. For example, villages monitor Andean bear movements and maintain warning systems.

Technical Challenges

BearID runs in cloud services, primarily Microsoft Azure, thanks to funding and credits from Microsoft AI for Earth. Most machine learning training is done on large GPU-based machines, while field use relies on ARM-based systems. Initially the team used laptops and tablets, but Ed is developing a Raspberry Pi 4B version that can run on-site: it will read images from an inserted storage card, store them locally, perform analyses, and write results back to the card.
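The on-site workflow described above (read images from the card, keep a local copy, analyze, write results back) can be sketched as a small batch script. This is a minimal illustration under stated assumptions: `placeholder_identify` stands in for the real detector and recognizer, and the results filename `bearid_results.csv` and its columns are hypothetical.

```python
import csv
from pathlib import Path

IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png"}

def placeholder_identify(image_path):
    """Stand-in for the real model; returns a (label, score) pair."""
    return "unknown", 0.0

def process_card(card_dir, work_dir, identify=placeholder_identify):
    """Copy images off the card, analyze them, and write a CSV to the card."""
    card_dir, work_dir = Path(card_dir), Path(work_dir)
    work_dir.mkdir(parents=True, exist_ok=True)

    rows = []
    for src in sorted(card_dir.iterdir()):
        if not src.is_file() or src.suffix.lower() not in IMAGE_SUFFIXES:
            continue
        # Store a local copy so analysis does not depend on the card.
        local = work_dir / src.name
        local.write_bytes(src.read_bytes())
        label, score = identify(local)
        rows.append({"image": src.name, "label": label, "score": score})

    # Write the results back to the card next to the images.
    results = card_dir / "bearid_results.csv"
    with results.open("w", newline="") as fh:
        writer = csv.DictWriter(fh, fieldnames=["image", "label", "score"])
        writer.writeheader()
        writer.writerows(rows)
    return results
```

Passing the recognizer in as the `identify` argument keeps the file handling separate from the model, so the same loop could run on a laptop or on a Raspberry Pi with a different backend.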

Power is always a constraint, but Melanie wanted the capability to run and analyze data on-site rather than waiting to transport results back to a university. Ed is therefore exploring accelerators for the Raspberry Pi and alternative ARM platforms with machine learning acceleration.

In the long term, the goal is for the camera itself to detect and identify bears, then send alerts or movement and population data to a base station over a low-power transmission protocol, enabling near real-time movement information.
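Low-power radio links generally favor very small messages, so a camera that reports sightings would likely send a compact binary record instead of an image. The sketch below shows one way such a record could be packed. The field layout, field names, and 9-byte size are assumptions for illustration; the source does not specify a protocol or message format.

```python
import struct

# Hypothetical layout, little-endian, 9 bytes total:
#   camera id (uint16), bear id (uint16),
#   unix timestamp (uint32), confidence percent (uint8)
ALERT_FORMAT = "<HHIB"

def encode_alert(camera_id, bear_id, timestamp, confidence):
    """Pack one sighting into a fixed-size payload.

    confidence is a float in [0, 1]; it is stored as a whole percent.
    """
    pct = max(0, min(100, round(confidence * 100)))
    return struct.pack(ALERT_FORMAT, camera_id, bear_id, int(timestamp), pct)

def decode_alert(payload):
    """Unpack a payload produced by encode_alert."""
    camera_id, bear_id, timestamp, pct = struct.unpack(ALERT_FORMAT, payload)
    return {
        "camera_id": camera_id,
        "bear_id": bear_id,
        "timestamp": timestamp,
        "confidence_pct": pct,
    }

payload = encode_alert(camera_id=3, bear_id=42, timestamp=1700000000, confidence=0.87)
alert = decode_alert(payload)
```

A fixed-size record like this trades flexibility for predictability: the base station always knows how many bytes to expect, which simplifies parsing on constrained hardware.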

ALLPCB
