Overview of virtual reality technology

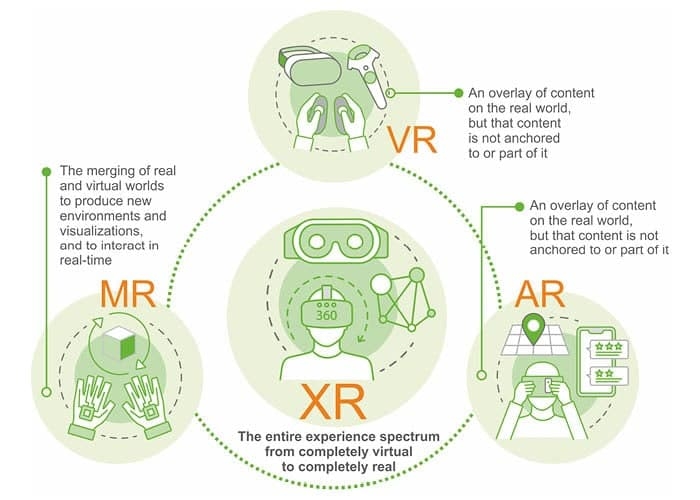

Virtual reality, also called immersive reality, began attracting attention from the scientific and engineering communities in the 1990s. Its emergence opened a new research area in human-machine interaction, provided new interface tools for intelligent engineering applications, and offered new ways to visualize large-scale data across many engineering fields. In a VR system, a computer generates an artificial environment, either by constructing a three-dimensional space with computer graphics or by encoding a real environment into the computer, and presents it realistically enough to give users a visual sense of immersion. These applications have changed how people use computers for multidisciplinary data processing, especially when handling large volumes of abstract data, and they can generate significant economic value in many fields.

An early startup's shift into motion capture

A few years ago, Nuoyiteng was an obscure, bootstrapped startup that held a number of carefully developed algorithms but was unsure how best to apply them. The company debated its future direction; one early flagship product was a golf swing trainer.

The company did not come from a long background in the computer graphics or film industries, so its entry into motion capture was likely an exploratory choice. One sign of this is the somewhat unconventional local coordinate orientations it initially defined for character models.

Despite its modest beginnings and work that seemed unrelated to everyday life or the VR industry, the company has attracted strong attention. Its influence in China and internationally, as well as its development prospects, has likely already outpaced that of many teams still focused primarily on VR headsets and panoramic content.

What is motion capture?

Motion capture is the process of recording a series of spatial key points from real-world motion, converting them into mathematical parameters, and ultimately reproducing the motion. In essence, it converts live performances into computer graphics animation. The key points typically correspond to the joint centers of a mechanism or to the connections in a biological skeleton. By placing sensors or markers at these points, motion data can be collected and then mapped onto virtual 3D characters.
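As a rough illustration of the "collect, then map" step, the sketch below treats each captured frame as a dictionary of key-point positions and copies them onto the joints of a virtual character. All names here (`retarget`, `joint_map`, the joint labels, the sample coordinates) are hypothetical and chosen for illustration only. Production systems usually also carry joint rotations and handle differing bone lengths, which this sketch leaves out.

```python
from typing import Dict, List, Tuple

Vec3 = Tuple[float, float, float]   # (x, y, z) position of one key point
Frame = Dict[str, Vec3]             # key-point name -> position at one instant


def retarget(frame: Frame, joint_map: Dict[str, str]) -> Frame:
    """Copy each captured key point onto the character joint it drives.

    Key points with no entry in joint_map are ignored.
    """
    return {
        joint_map[name]: position
        for name, position in frame.items()
        if name in joint_map
    }


# Two captured frames (made-up sample values, not real capture data).
captured: List[Frame] = [
    {"hip": (0.0, 1.0, 0.0), "head": (0.0, 1.7, 0.0)},
    {"hip": (0.0, 1.0, 0.1), "head": (0.0, 1.7, 0.1)},
]

# Hypothetical mapping from capture key points to a character rig's joints.
joint_map = {"hip": "Pelvis", "head": "Head"}

# The animation is the sequence of retargeted frames.
animation = [retarget(frame, joint_map) for frame in captured]
```

Keeping the mapping table separate from the captured data means one recording could, in principle, drive several characters whose joints are named differently.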

1) Areas motion capture can cover

A common distribution of key points for a human character is as follows. It does not precisely capture the motion of every small joint, let alone all of the body's more than 200 bones and its muscles, but it is sufficient for most film and game production needs.

The head, neck, spine, and hip belong to the body's central axis, while the shoulders, upper arms, forearms, hands, upper legs, lower legs, and feet are symmetrically distributed on the left and right. In total, about 18 key positions are typically recorded. More complex character rigs may include separate left and right pelvis points, additional spine locations, or extended capture for fingers and toes, though these are incremental changes rather than fundamental differences.
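The count of roughly 18 follows directly from the layout described above: 4 points on the central axis plus 7 body parts mirrored left and right. A minimal sketch of that bookkeeping is shown here. The identifier names are illustrative assumptions, not a standard naming scheme from any particular capture system.

```python
from typing import List

# Key points on the body's central axis.
CENTRAL: List[str] = ["head", "neck", "spine", "hip"]

# Body parts that appear once on each side of the body.
PAIRED: List[str] = [
    "shoulder", "upper_arm", "forearm", "hand",
    "upper_leg", "lower_leg", "foot",
]


def build_key_points(central: List[str], paired: List[str]) -> List[str]:
    """Return central points followed by left/right copies of each paired part."""
    names = list(central)
    for part in paired:
        names.append(f"left_{part}")
        names.append(f"right_{part}")
    return names


KEY_POINTS = build_key_points(CENTRAL, PAIRED)
print(len(KEY_POINTS))  # 4 central + 7 pairs * 2 = 18

# A more detailed rig only extends the same lists, for example with an
# extra spine location and separate left/right pelvis points.
EXTENDED = build_key_points(CENTRAL + ["upper_spine"], PAIRED + ["pelvis"])
```

The extended rig has the same structure with a few more entries, which matches the point that such additions are incremental changes.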

The principle is similar for other character types, although in many cases no suitable performer can be found to act out the desired motion on stage.

Recording motion data: a core challenge

How to record the motion information of these key positions is a central problem faced by many motion capture systems and engineering efforts.

ALLPCB
