Summary

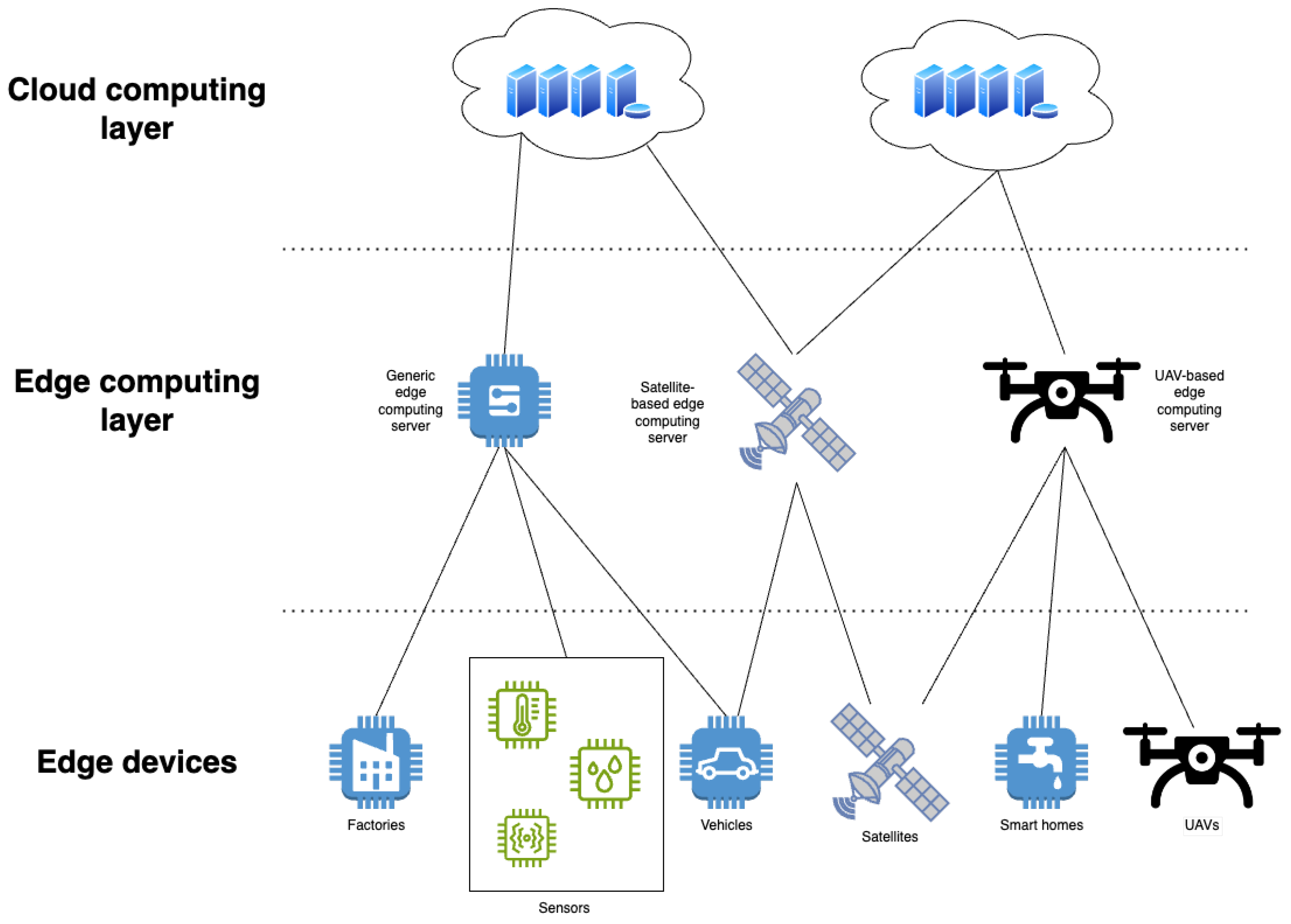

Existing cross-morphology robot control typically requires separate strategies for different hardware, resulting in high development cost and poor generalization. This project uses natural language as a unified interface so users can command different robot morphologies to perform the same task. A hierarchical reinforcement learning framework is proposed: a high-level vision-language model (VLM) parses tasks and generates intermediate instructions, and low-level reinforcement learning (RL) policies adapt those instructions to morphology-specific actions. Fast training of cross-morphology policies is performed in simulation, with final deployment on real hardware for validation.

Approach

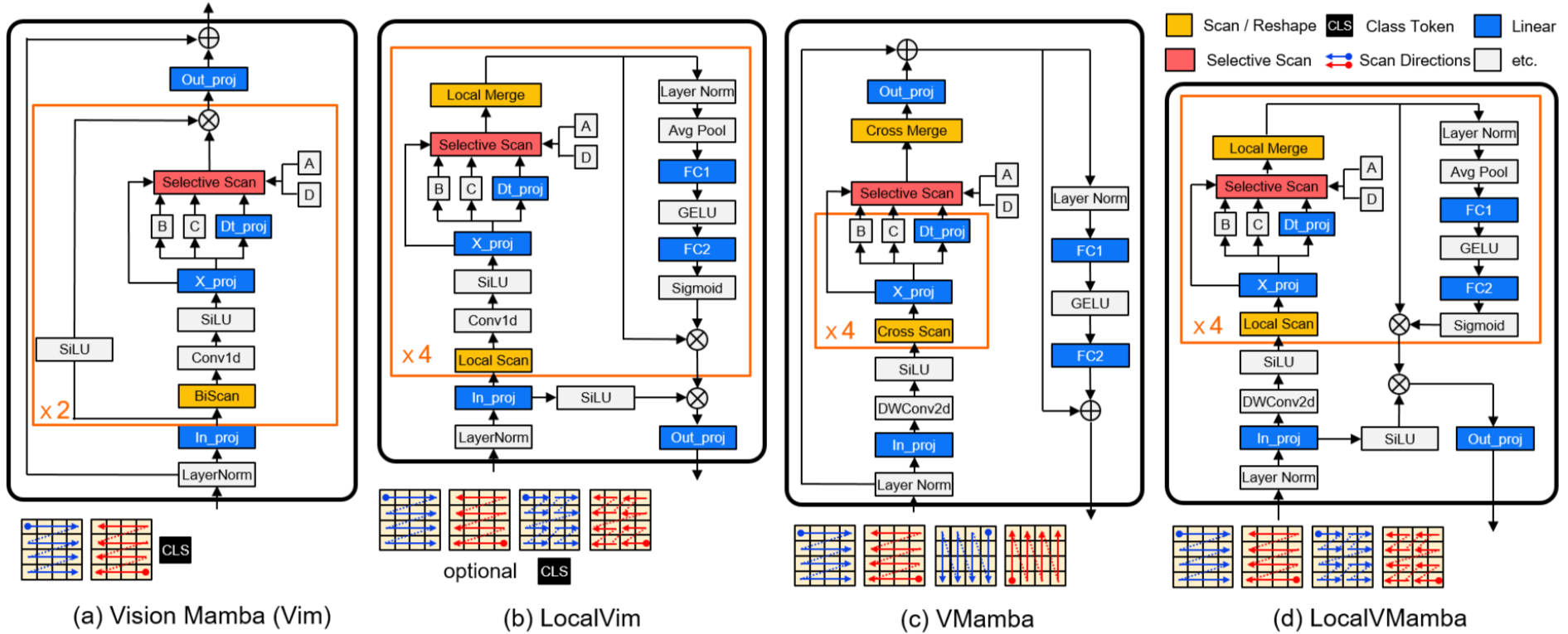

The proposed architecture uses a hierarchical RL structure. The high-level VLM converts visual and natural language inputs into standardized intermediate instructions (for example, "turn left 30 degrees"). The low-level RL policy, parameterized by robot morphology, maps those intermediate instructions to concrete low-level actions (for example, quadruped gait or wheeled steering). Simulation is used for rapid cross-morphology policy training. Real-world validation uses X5 RDK humanoid hardware and quadruped platforms to demonstrate the same language instruction executing efficiently on different morphologies. Experiments show advantages in dynamic obstacle avoidance, complex terrain adaptation, and task replanning, offering a lower-cost, higher-generalization solution for cross-morphology robot control.
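To make the idea of a standardized intermediate instruction concrete, the following is a minimal sketch of how such a command might be represented and routed to a morphology-specific skill. The grammar, the `IntermediateInstruction` fields, and the skill names in `SKILLS` are all hypothetical; the source does not specify the instruction format.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class IntermediateInstruction:
    """Morphology-independent command emitted by the high-level VLM."""
    action: str       # "turn" or "move"
    direction: str    # "left", "right", "forward", "backward"
    magnitude: float  # degrees for turns, meters for moves
    unit: str         # "degrees" or "meters"


# Hypothetical grammar: "<action> <direction> <number> <unit>"
_PATTERN = re.compile(
    r"^(turn|move)\s+(left|right|forward|backward)\s+"
    r"(\d+(?:\.\d+)?)\s*(degrees|meters)$"
)

# Hypothetical skill names; each would be backed by a low-level RL policy.
SKILLS = {
    "quadruped": {"turn": "gait_turn", "move": "gait_walk"},
    "wheeled": {"turn": "differential_steer", "move": "drive_straight"},
}


def parse_instruction(text: str) -> IntermediateInstruction:
    """Parse a text instruction such as 'turn left 30 degrees'."""
    match = _PATTERN.match(text.strip().lower())
    if match is None:
        raise ValueError(f"unrecognized instruction: {text!r}")
    action, direction, magnitude, unit = match.groups()
    return IntermediateInstruction(action, direction, float(magnitude), unit)


def select_skill(instr: IntermediateInstruction, morphology: str) -> str:
    """Pick the morphology-specific skill that realizes the instruction."""
    return SKILLS[morphology][instr.action]
```

With this layout, the same parsed instruction selects `gait_turn` on a quadruped and `differential_steer` on a wheeled base, which mirrors the split between the shared high level and the morphology-specific low level.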

Simulation and Real-World Deployment

The plan uses a MuJoCo-based simulation with multi-morphology robot models for verification, followed by attempts at cross-platform real-world deployment. In simulation, the same instruction, "bypass the obstacle and enter the room on the right", drives wheeled and quadruped robots to plan different paths and execute different motion patterns. Real-world demos use a custom wheeled platform and a Petoi Bittle. Later iterations will expand language interaction capabilities.

The X5 system's RGB camera and IMU data feed into the high-level VLM and the low-level policy. A ROS2 bridge receives low-level policy outputs of target joint angles and converts them into motor control commands.
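The conversion step inside the bridge could look like the sketch below. It leaves out the ROS2 node itself and only shows how target joint angles might be turned into servo pulse widths. The joint names, the ±90° travel, and the 500 to 2500 µs pulse range are assumptions for illustration, not values taken from the project hardware.

```python
import math
from typing import Dict


def angle_to_pulse_us(
    angle_rad: float,
    min_angle: float = -math.pi / 2,  # assumed servo travel limit
    max_angle: float = math.pi / 2,   # assumed servo travel limit
    min_pulse_us: int = 500,          # assumed pulse width at min_angle
    max_pulse_us: int = 2500,         # assumed pulse width at max_angle
) -> int:
    """Linearly map a joint angle to a PWM pulse width, clamping to limits."""
    clamped = max(min_angle, min(max_angle, angle_rad))
    fraction = (clamped - min_angle) / (max_angle - min_angle)
    return round(min_pulse_us + fraction * (max_pulse_us - min_pulse_us))


def joint_targets_to_commands(targets: Dict[str, float]) -> Dict[str, int]:
    """Convert a policy output {joint_name: angle_rad} to servo pulses."""
    return {name: angle_to_pulse_us(angle) for name, angle in targets.items()}
```

Clamping before the mapping keeps an out-of-range policy output from driving a servo past its mechanical limit.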

Training Strategy

After training the quadruped policy, the high-level VLM is frozen and only the low-level policy is fine-tuned to adapt to humanoid and wheeled robots. The simulation environment randomly generates obstacles, terrain variations, and lighting changes to validate policy robustness. The project compares the efficiency and required compute of the hierarchical approach with end-to-end RL strategies.
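The randomization of obstacles, terrain, and lighting can be sketched as a per-episode sampler. The `EpisodeConfig` fields and all numeric ranges below are illustrative assumptions; the source does not give the actual ranges, and applying the sampled values to the simulator is not shown.

```python
import random
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class EpisodeConfig:
    obstacles: List[Tuple[float, float, float]]  # (x, y, radius) in meters
    terrain_roughness: float  # max height perturbation in meters
    light_intensity: float    # relative scale, 1.0 = nominal


def sample_episode(rng: random.Random, max_obstacles: int = 5) -> EpisodeConfig:
    """Draw one randomized scene description for a training episode."""
    count = rng.randint(0, max_obstacles)
    obstacles = [
        (rng.uniform(-3.0, 3.0), rng.uniform(-3.0, 3.0), rng.uniform(0.05, 0.3))
        for _ in range(count)
    ]
    return EpisodeConfig(
        obstacles=obstacles,
        terrain_roughness=rng.uniform(0.0, 0.05),
        light_intensity=rng.uniform(0.3, 1.5),
    )
```

Passing an explicitly seeded `random.Random` makes each scene reproducible, which helps when comparing the hierarchical and end-to-end approaches on identical episodes.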

Example tasks include: a wheeled robot executing "move down the corridor, turn right at the second doorway" and a legged robot performing "avoid floor debris and place the specified object at the designated location".

Hardware and resources: one engineering machine for RL training (or equivalent cloud compute), 3D printing for custom parts, and upgraded servomotors as the drive motors (TBD).

VLM Role and Model Evaluation

The project treats the VLM role closer to a vision-language assistant (VLA) focused on scene understanding and interaction, rather than pure vision-and-language navigation (VLN) centered on path planning. The VLM is not required to output precise position estimates, especially when depth sensing is not available; a coarse distance estimate sufficient to output the next-step instruction is acceptable.

Open-source VLMs were evaluated:

- OpenVLA: produces end-to-end outputs that depend on a specific morphology and is therefore unsuitable for the cross-morphology goal.

- LLaVA: locally deployable 7B model; spatial perception was insufficient and runtime throughput did not meet requirements (about one response per minute).

- Qwen-72B (fine-tuned): data was collected by photographing 80+ scenes with corresponding prompt descriptions, and the model was fine-tuned via the official API. Deploying the fine-tuned model cost too much (about 160 RMB/hour), so this path was abandoned.

Revised plan: do not require the VLM to estimate exact positions. Instead, have it output coarse distance judgments and the next-step task instruction. The native Qwen-72B model can then satisfy this requirement without expensive fine-tuning.
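One way to consume such output is to ask the model for a small JSON object and validate it before passing it on. The sketch below assumes a reply schema with a `distance` label and an `instruction` string; the schema, the three distance labels, and the function name are hypothetical, and the call to the model itself is omitted.

```python
import json

# Assumed coarse distance vocabulary; not specified in the source.
ALLOWED_DISTANCES = {"near", "medium", "far"}


def parse_vlm_reply(reply: str) -> dict:
    """Extract and validate {'distance', 'instruction'} from a model reply.

    The reply may wrap the JSON object in extra prose, so only the span
    between the first '{' and the last '}' is decoded.
    """
    start = reply.find("{")
    end = reply.rfind("}")
    if start == -1 or end <= start:
        raise ValueError("no JSON object found in reply")
    data = json.loads(reply[start:end + 1])

    distance = str(data.get("distance", "")).lower()
    instruction = str(data.get("instruction", "")).strip()
    if distance not in ALLOWED_DISTANCES:
        raise ValueError(f"unexpected distance label: {distance!r}")
    if not instruction:
        raise ValueError("missing next-step instruction")
    return {"distance": distance, "instruction": instruction}
```

Restricting the distance to a few labels matches the relaxed requirement: the low-level controller only needs to know roughly how close an obstacle is, not its metric position.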

Low-Level Mapping and RL

The goal is to map intermediate action instructions to robot joint angles and torques and, where possible, to make this mapping cross-morphology. Because different morphologies have different numbers of joints, the policy output varies in length; this variable-length output problem must be addressed before cross-morphology low-level mapping can be considered verified.
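One common way to handle variable-length outputs is to pad every action vector to a fixed maximum size and carry a mask that marks the valid entries. The sketch below illustrates that option only; it is not confirmed as the approach used in this project, and `MAX_JOINTS` and the joint counts in the usage note are assumed values.

```python
from typing import List, Tuple

MAX_JOINTS = 12  # assumed upper bound across all supported morphologies


def pad_action(
    action: List[float], max_joints: int = MAX_JOINTS
) -> Tuple[List[float], List[int]]:
    """Pad an action to max_joints and return (padded_action, mask).

    mask[i] is 1 for a real joint and 0 for padding.
    """
    if len(action) > max_joints:
        raise ValueError("action has more joints than max_joints")
    padding = max_joints - len(action)
    return action + [0.0] * padding, [1] * len(action) + [0] * padding


def unpad_action(padded: List[float], mask: List[int]) -> List[float]:
    """Recover the morphology-specific action by dropping masked entries."""
    return [value for value, keep in zip(padded, mask) if keep == 1]
```

For example, a two-value wheeled command and an eight-value quadruped command would both become 12-element vectors, so a single network head can serve both while the mask tells the bridge which outputs to ignore.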

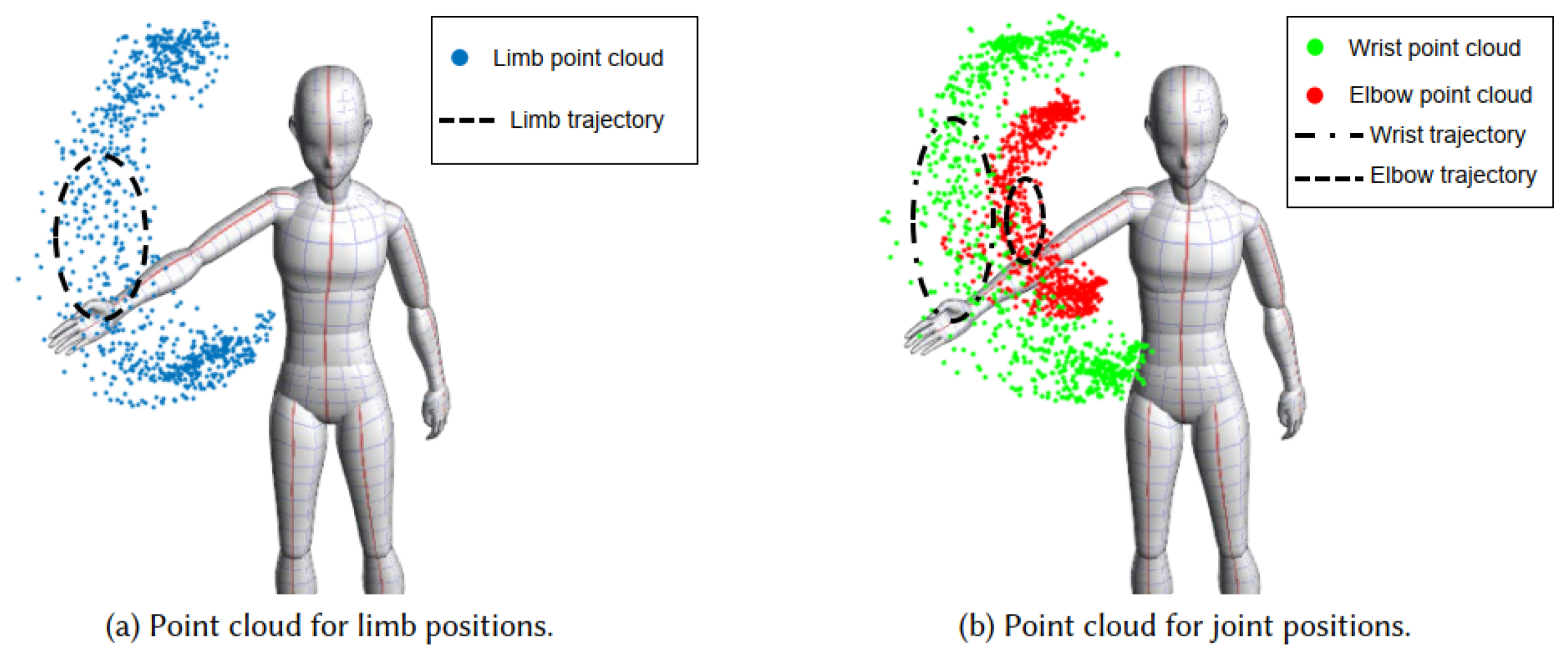

The RL effort focuses on the quadruped robot. A MuJoCo simulation environment was implemented, and policies are trained with Stable-Baselines3. Basic requirements include stable straight walking and turning. Because the VLM runs at a low rate (about one output per second), an additional velocity-tracking controller is required for real-time obstacle avoidance; this component is also implemented via RL.
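A velocity-tracking controller of this kind is often trained with a reward that peaks when the measured base velocity matches the commanded one. The sketch below uses an exponential of the squared error, a form widely used in legged-locomotion RL; it is an illustrative choice rather than the project's confirmed reward, and `sigma` and the 50 Hz control rate are assumptions.

```python
import math
from typing import Sequence


def velocity_tracking_reward(
    cmd_vel: Sequence[float],
    actual_vel: Sequence[float],
    sigma: float = 0.25,  # assumed tolerance; smaller is stricter
) -> float:
    """Return a reward in (0, 1] that is 1.0 when velocities match exactly."""
    error_sq = sum((c - a) ** 2 for c, a in zip(cmd_vel, actual_vel))
    return math.exp(-error_sq / sigma)


def control_ticks_per_command(vlm_hz: float = 1.0, control_hz: float = 50.0) -> int:
    """Number of control steps during which one VLM command is held."""
    return max(1, round(control_hz / vlm_hz))
```

Under these assumed rates, each VLM output is held for 50 control steps, and the tracking policy is responsible for everything that happens in between.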

Project Timeline

- Week 1: Build simulation scenes; model and control tests for legged and wheeled robots.

- Week 2: Build the high-level VLM; complete multimodal control input and reach intermediate-instruction generation accuracy above 80%; perform sim-to-real optimization and demo on the X5 RDK wheeled platform.

- Week 3: Deploy low-level quadruped PPO policy training in simulation; initial cross-morphology task tests.

- Week 4: Attempt high-precision simulation in 3D Gaussian Splatting (3DGS) scenes.

Current Status and Objectives

Simulation environment setup and model construction are complete. Next steps prioritize data acquisition, RL training, and real-world deployment. The objective is to complete the translation from natural language to intermediate action instructions and demonstrate morphology-agnostic behavior for simple tasks such as locomotion.

ALLPCB
