Introduction

When discussing augmented reality (AR) and its applications, one of the first things that comes to mind is the head-up display (HUD). HUDs are used in aviation and automotive applications to present relevant aircraft or vehicle information to users without requiring them to look down at an instrument panel.

Research shows humans process visual information much faster than written text. AR is similar to its close relative virtual reality (VR) in that it enhances a user’s perception of the surrounding environment. The key difference is that AR overlays virtual objects such as text or other visual elements on the real world, enriching or augmenting it. That enables users of AR systems to interact with their environment more safely and efficiently, unlike VR where users are immersed in a fully artificial environment. The combination of augmented and virtual elements is often described as mixed reality (MR). Many of us already use AR in everyday life, for example for mobile navigation or location-based games such as Pokémon GO.

VR, AR, and MR

HUDs represent one of the simplest practical AR applications. More advanced AR systems with wearable functionality are often called smart augmented reality. Market research firm Tractica predicted that the smart augmented reality market could reach $2.3 billion by 2020.

AR Applications and Use Cases

AR is advancing into many sectors, including industrial, military, manufacturing, medical, social, and commercial markets, and a wide range of use cases is driving adoption. In commercial contexts, AR is frequently integrated into social media experiences, for example by identifying the people you are talking with and surfacing background information about them. AR can also let consumers visualize products that are otherwise hard to view in person, such as cars, yachts, or buildings.

Many AR applications rely on smart glasses worn by the user. These devices can increase efficiency in manufacturing environments by replacing paper manuals and showing assembly instructions directly in the wearer’s field of view. In healthcare, smart glasses make it easier to share patient records and detailed views of injuries, giving first responders and emergency room staff the treatment information they need.

Example: Smart Glasses in Industrial Settings

A typical example is a large parcel logistics company that uses AR smart glasses to read barcodes on shipping labels. After a barcode is read, the smart glasses use the site WiFi infrastructure to communicate with backend servers and determine the package’s final destination. Once the destination is known, the glasses prompt the user where to place the package for continued dispatch.
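The scan, lookup, and prompt sequence described above can be sketched in a few lines of Python. This is only an illustration of the workflow: the `ROUTING_TABLE` dictionary is a hypothetical stand-in for the backend servers, and the barcode values, bay names, and function names are all invented for the example.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical stand-in for the backend servers. A real deployment would
# query them over the site WiFi rather than use a local dictionary.
ROUTING_TABLE = {
    "PKG-0001": "Bay 3",
    "PKG-0002": "Bay 7",
}


@dataclass
class Prompt:
    """Text the glasses would overlay for the wearer."""
    barcode: str
    message: str


def lookup_destination(barcode: str) -> Optional[str]:
    """Return the dispatch location for a barcode, or None if unknown."""
    return ROUTING_TABLE.get(barcode)


def handle_scan(barcode: str) -> Prompt:
    """Turn a decoded barcode into a placement prompt."""
    destination = lookup_destination(barcode)
    if destination is None:
        return Prompt(barcode, "Unknown package: set aside for manual handling")
    return Prompt(barcode, f"Place package in {destination}")


if __name__ == "__main__":
    print(handle_scan("PKG-0001").message)
```

Keeping the lookup behind its own function means the placeholder table could later be swapped for a network call without touching the prompt logic.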

Even without specific use cases, designing an AR system involves balancing conflicting requirements such as performance, security, power consumption, and future compatibility. These factors must all be considered to deliver an effective AR solution.

Implementing AR Systems

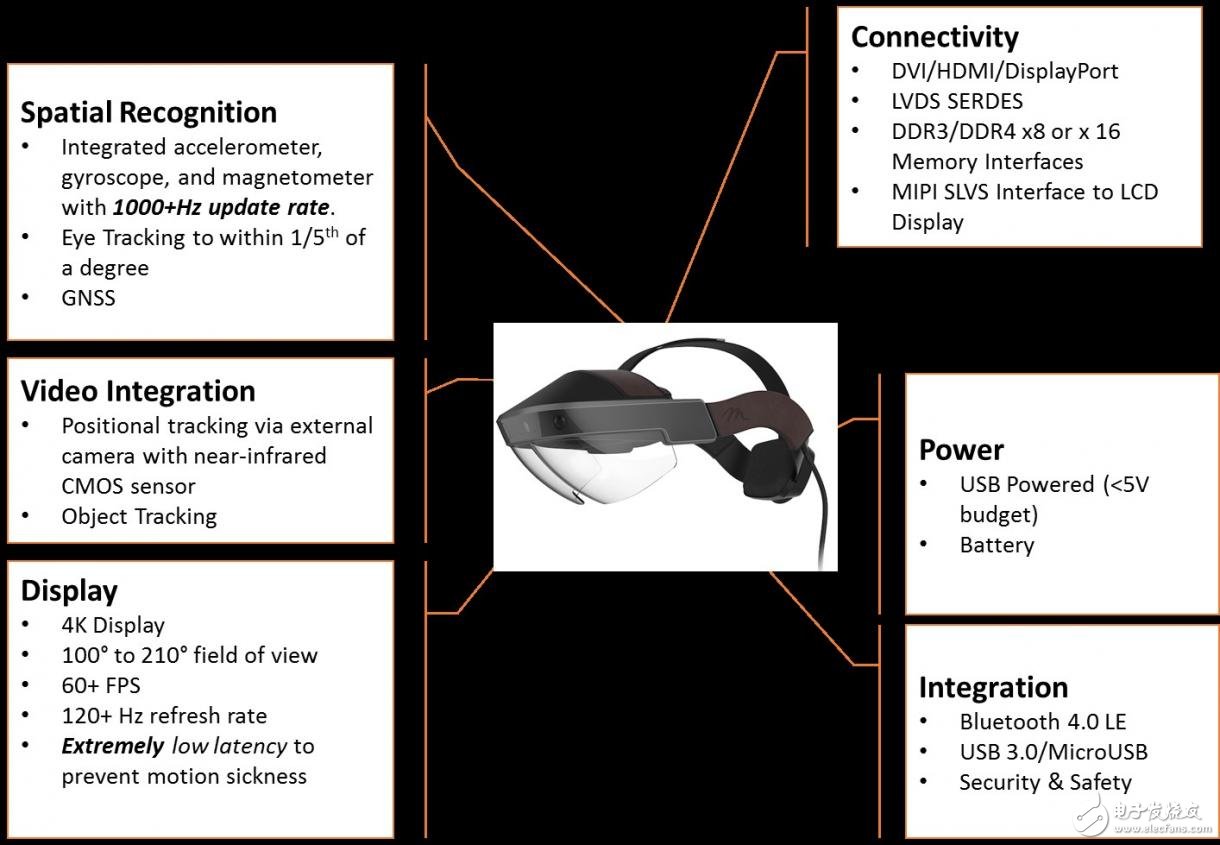

Complex AR systems must support multiple cameras and sensors and process the data from them so the system can understand its surroundings. Camera sensors may operate in different bands of the electromagnetic spectrum, such as infrared or near-infrared. Other sensors provide inputs from outside the electromagnetic spectrum, for example MEMS accelerometers and gyroscopes that detect motion and orientation, and the system may also take position fixes from global navigation satellite systems (GNSS). Embedded vision systems that fuse information from several different sensor types are generally referred to as heterogeneous sensor fusion systems. AR systems also demand high frame rates and real-time analysis, extracting and processing information from every frame. The processing capability required to meet these demands drives component selection.
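As a small illustration of fusing two motion sensors, the sketch below uses a complementary filter to combine a gyroscope rate with an accelerometer-derived angle. The article does not name a fusion algorithm, so this technique is an assumption chosen for its simplicity; the function names, the axis convention, and the `alpha` weighting of 0.98 are likewise illustrative.

```python
import math


def accel_pitch(ax: float, ay: float, az: float) -> float:
    """Estimate pitch (radians) from the gravity vector seen by the accelerometer."""
    return math.atan2(-ax, math.sqrt(ay * ay + az * az))


def complementary_filter(pitch: float, gyro_rate: float, accel_angle: float,
                         dt: float, alpha: float = 0.98) -> float:
    """Blend the integrated gyro rate (smooth but drifting) with the
    accelerometer angle (noisy but drift-free)."""
    return alpha * (pitch + gyro_rate * dt) + (1.0 - alpha) * accel_angle


if __name__ == "__main__":
    # Device at rest and level, but the initial estimate is wrong by 0.5 rad.
    pitch = 0.5
    for _ in range(500):
        pitch = complementary_filter(pitch, gyro_rate=0.0,
                                     accel_angle=accel_pitch(0.0, 0.0, 9.81),
                                     dt=0.01)
    print(f"pitch after 500 updates: {pitch:.6f} rad")
```

The accelerometer term slowly pulls the estimate back toward the true angle, which is why the initial error decays even though the gyroscope reports no rotation.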

AR System Breakdown

Xilinx All Programmable Zynq-7000 SoCs or Zynq UltraScale+ MPSoCs are commonly used as the processing core for AR systems. These devices are heterogeneous processing systems that combine ARM processors with high-performance programmable logic. Zynq UltraScale+ MPSoCs are the next-generation family and also include an ARM Mali-400 GPU. Some members of the family add a hardened video codec that supports the H.264 (AVC) and H.265 (HEVC) standards.

These devices let designers partition system architecture between processors and programmable logic to implement real-time analysis while offloading traditional processing tasks to the software ecosystem. The programmable logic can be used to implement sensor interfaces and processing, providing several benefits, including:

- Parallel implementation of N image-processing pipelines to meet application requirements.

- Flexible interconnects that allow designers to define and connect arbitrary sensors, communication protocols, or display standards, providing flexibility and an upgrade path.
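The idea of running N independent image-processing pipelines side by side can be mimicked in software. The sketch below is only an analogy: programmable logic runs the pipelines as truly concurrent hardware, whereas this example uses a Python thread pool, and the `threshold` and `invert` stages are placeholder operations invented for illustration.

```python
from concurrent.futures import ThreadPoolExecutor


def threshold(frame, level=128):
    """Binarise a grayscale frame given as a list of pixel rows."""
    return [[255 if p >= level else 0 for p in row] for row in frame]


def invert(frame):
    """Invert 8-bit pixel values."""
    return [[255 - p for p in row] for row in frame]


def run_pipeline(frame, stages):
    """Pass one frame through each stage in order."""
    for stage in stages:
        frame = stage(frame)
    return frame


def process_sensors(frames, stages):
    """Run one pipeline per sensor frame, all submitted concurrently."""
    with ThreadPoolExecutor(max_workers=max(1, len(frames))) as pool:
        return list(pool.map(lambda f: run_pipeline(f, stages), frames))


if __name__ == "__main__":
    frames = [[[0, 200]], [[130, 10]]]  # two tiny one-row "sensor" frames
    print(process_sensors(frames, [threshold, invert]))
```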

To implement image-processing pipelines and sensor-fusion algorithms, developers can leverage high-level synthesis capabilities in tools such as Vivado HLS and SDSoC. These tools include specialist libraries, including OpenCV support. To shorten time to market, many teams also use third-party IP developed specifically for AR and embedded systems. IP providers include Xylon, which supplies LogiBRICKS IP cores that integrate quickly into the Vivado design flow, and Omnitek, which provides a range of IP building blocks for AR requirements such as real-time stitching and 3D processing modules.

Designers must also account for AR-specific features. Systems must connect to cameras and sensors observing the user’s environment while running the algorithms required by the target application. They typically need to track the user’s eyes and estimate gaze direction to determine what content should be displayed. Eye tracking is implemented by adding cameras that observe the user’s face and running eye-tracking algorithms. Once implemented, the system can determine the user’s gaze and selectively render content for the AR display to optimize bandwidth and processing. However, detection and tracking themselves are computationally intensive tasks.
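Selective rendering based on gaze can be illustrated with a simple tile test: only tiles whose centre lies near the gaze point are rendered at full detail. This is a minimal sketch under assumed parameters; the tile size, radius, and the `tiles_to_render` function are hypothetical and are not taken from any particular AR framework.

```python
def tiles_to_render(gaze_x, gaze_y, width, height, tile=64, radius=128):
    """Return (column, row) indices of tiles whose centre lies within
    `radius` pixels of the gaze point."""
    selected = []
    for top in range(0, height, tile):
        for left in range(0, width, tile):
            cx, cy = left + tile / 2, top + tile / 2
            if (cx - gaze_x) ** 2 + (cy - gaze_y) ** 2 <= radius ** 2:
                selected.append((left // tile, top // tile))
    return selected


if __name__ == "__main__":
    # 256x256 display split into 16 tiles, user looking at the centre.
    near_gaze = tiles_to_render(128, 128, 256, 256, tile=64, radius=50)
    print(f"{len(near_gaze)} of 16 tiles rendered at full detail: {near_gaze}")
```

In this toy case only the four central tiles qualify, which shows how knowing the gaze direction can cut the amount of content the display pipeline has to process.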

Power and Energy Efficiency

Most AR systems are portable and untethered, often worn as smart glasses. Delivering the required processing capability in such a power-constrained environment presents unique challenges. Zynq SoC and Zynq UltraScale+ MPSoC devices offer competitive performance per watt and support several options for reducing operating power. At the extreme, the processors can enter a standby mode and the programmable logic, which accounts for a significant portion of device resources, can be powered down until a wake-up event occurs. When an AR system detects that it is idle, these options can extend battery life. During active operation, unused processor units can be clock-gated. In the programmable logic, following design rules such as efficient use of hardened blocks, careful planning of control signals, and intelligent clock gating of unused regions helps achieve high power efficiency.
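The effect of idle-time power saving on battery life can be estimated with a duty-cycle calculation. The sketch below is a back-of-the-envelope model only: the power figures and battery capacity are made-up example values, not measurements of any Zynq device.

```python
def average_power_mw(active_mw: float, standby_mw: float,
                     active_fraction: float) -> float:
    """Time-weighted average power for a device that alternates
    between an active state and a standby state."""
    if not 0.0 <= active_fraction <= 1.0:
        raise ValueError("active_fraction must be between 0 and 1")
    return active_fraction * active_mw + (1.0 - active_fraction) * standby_mw


def battery_life_hours(capacity_mwh: float, avg_mw: float) -> float:
    """Ideal runtime, ignoring converter losses and battery ageing."""
    return capacity_mwh / avg_mw


if __name__ == "__main__":
    # Hypothetical numbers: 2 W active, 0.1 W standby, 5.75 Wh battery.
    always_on = battery_life_hours(5750, average_power_mw(2000, 100, 1.0))
    duty_cycled = battery_life_hours(5750, average_power_mw(2000, 100, 0.25))
    print(f"always active: {always_on:.2f} h, 25% active: {duty_cycled:.2f} h")
```

With these example values, dropping into standby for three quarters of the time lowers the average draw from 2000 mW to 575 mW, which is where most of the runtime gain comes from.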

Security and Tamper Protection

Some AR applications, such as sharing medical records or production data, require high levels of information assurance (IA) and threat prevention (TP), particularly because AR systems are mobile and may be misplaced. Information assurance requires confidence in the data stored on the system and in the data it transmits and receives. To meet IA requirements, designers can use the Zynq secure boot capabilities, which support AES encryption of the boot image together with HMAC and RSA authentication. Once the device is securely configured, developers can use ARM TrustZone and a hypervisor to create secure, isolated environments that are inaccessible to untrusted code.
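To show what HMAC-based authentication provides, the sketch below signs a payload and then verifies it using Python's standard `hmac` and `hashlib` modules. It illustrates the general technique only and is not the Zynq secure boot flow; the key and payload are placeholder values, and real key storage and provisioning are outside the scope of this example.

```python
import hashlib
import hmac


def sign(key: bytes, payload: bytes) -> bytes:
    """Compute an HMAC-SHA256 tag over the payload."""
    return hmac.new(key, payload, hashlib.sha256).digest()


def verify(key: bytes, payload: bytes, tag: bytes) -> bool:
    """Check a tag in constant time to avoid timing side channels."""
    return hmac.compare_digest(sign(key, payload), tag)


if __name__ == "__main__":
    key = b"example-key-not-for-production"   # placeholder secret
    record = b"patient-id=123;status=stable"  # placeholder payload
    tag = sign(key, record)
    print("original accepted:", verify(key, record, tag))
    print("tampered accepted:", verify(key, record + b"!", tag))
```

Any change to the payload or use of the wrong key produces a different tag, so the receiver can reject data that was altered in storage or in transit.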

For threat prevention, these devices include on-chip XADC modules that can monitor supply voltage, current, and temperature to detect attempts to tamper with the AR system. If tampering is detected, Zynq devices provide options such as logging the event, erasing secure data, or preventing the device from reconnecting to supporting infrastructure.
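The monitoring logic described above amounts to comparing readings against allowed windows and reacting when one falls outside. The sketch below models that in Python under stated assumptions: the channel names, limit values, and response strings are hypothetical, and the readings are passed in as plain numbers rather than read from real XADC hardware.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Limits:
    low: float
    high: float


# Hypothetical operating windows, for illustration only.
LIMITS = {
    "supply_v": Limits(0.95, 1.05),
    "temp_c": Limits(-20.0, 85.0),
}


def check_readings(readings: dict) -> list:
    """Return the names of monitored channels that are out of range."""
    return [name for name, value in readings.items()
            if name in LIMITS
            and not LIMITS[name].low <= value <= LIMITS[name].high]


def respond(violations: list) -> list:
    """Map detected violations to the kinds of responses the text lists."""
    if not violations:
        return []
    return ["log_event", "erase_secure_data", "block_reconnect"]


if __name__ == "__main__":
    sample = {"supply_v": 0.80, "temp_c": 25.0}  # supply pulled low
    bad = check_readings(sample)
    print("violations:", bad, "actions:", respond(bad))
```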

Conclusion

AR systems are being adopted across commercial, industrial, and military sectors. They introduce a set of conflicting requirements: high performance, system-level security, and energy efficiency. Using Zynq SoCs or Zynq UltraScale+ MPSoCs as the processing core can address these challenges by combining programmable logic and processors, enabling real-time processing, flexible sensor interfaces, strong security features, and efficient power management.

ALLPCB
