1. Current state of AR/VR products

All AR/VR products require accurate spatial tracking and positioning to enable full head and hand interaction and to provide a convincing virtual experience.

Cardboard-style devices

These are the simplest VR devices, originating from Google's Cardboard. They use two convex lenses to display split-screen content from a phone and rely on the phone's built-in IMU to infer head motion and update the view. From 2015, many white-label units began shipping in China. Because VR quality depends on the phone's performance and the lens optics, these devices provide only an entry-level VR experience.

Some early VR offerings from video and game companies took this form, typically paired with a simple Bluetooth controller for menu navigation. Google later introduced Daydream to improve on the Cardboard experience, releasing the Pixel and Pixel XL phones and the Daydream View headset, whose controller includes an IMU. Daydream requires phones above a certain hardware specification to reduce motion sickness. As more phones have supported Daydream since 2016, the user experience for this class of device has improved.

All-in-one headsets with head-only control

All-in-one headsets run without a phone or PC. Most products released in 2016 adopted 1080p or 2K displays (some use dual 2K panels) with optimized aspheric lenses, improving image quality and reducing distortion. However, many of these units support only simple head motion control: users remain stationary and use head movements for input, with no hand interaction. External accessories with spatial tracking functions, such as IMU-equipped controllers, motion backpacks, or tracking spheres, are used to improve interaction.

All-in-one headsets with spatial tracking

Spatial tracking and positioning generally follow two approaches: inside-out and outside-in. Inside-out uses sensors built into the headset to perceive external space and perform localization. Outside-in uses external sensors to observe the headset and controllers and provide positioning data. For all-in-one headsets, inside-out solutions are favored because they avoid pre-installation of external sensors, allowing users to operate the device anywhere. Several major manufacturers' second-generation all-in-one headsets released around 2017 adopted spatial tracking to enhance user experience, especially for VR games.

PC VR

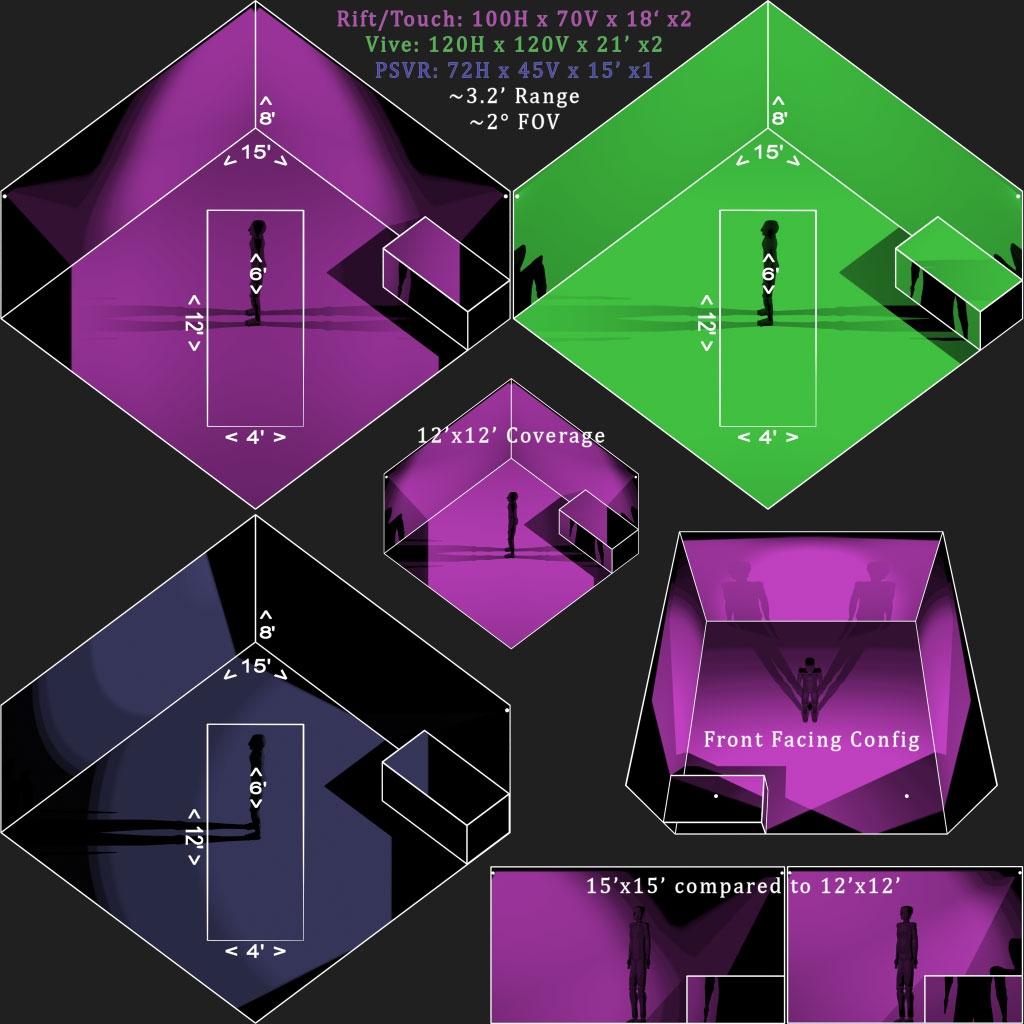

Representative PC VR systems include HTC Vive, Sony PSVR, and Oculus Rift. After their 2016 launches, HTC Vive became common in VR arcades and was used for many industry customizations. Sony sold about one million PSVR units within six months of launch, helping raise overall VR experience levels. These systems typically use external spatial tracking. For example, Vive uses external base stations that emit infrared laser scans; the headset and controllers sense the emitter signals to compute precise position and movement. PSVR and Oculus use external sensors to detect visible or infrared light emitted or reflected by the headset and controllers, allowing precise tracking of relative positions and trajectories and enabling more realistic VR interactions.

In contrast, many AR devices only project virtual content without integrating it with the real environment. Some commercially known AR headsets, for example, provide 6DOF head tracking but lack full spatial mapping, so they cannot fuse virtual objects with the physical environment.

Some AR companies focus on first-person live streaming and only present captured information and video in the headset, so spatial mapping is not required. Other AR devices under development include an integrated TOF depth camera to enable 6DOF tracking plus spatial mapping.

Currently, devices with the best spatial tracking and environment matching include Lenovo's Phab2 Pro, based on Google Tango, and Microsoft's HoloLens. Both combine 6DOF tracking with SLAM to achieve spatial localization and motion tracking, enabling virtual objects to blend with the real environment so they appear fixed from any viewpoint. HoloLens generally provides higher SLAM accuracy and stability than Tango, a difference that comes down to the hardware platform, the choice and number of sensors, and the core algorithms.
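The idea that a virtual object "appears fixed from any viewpoint" can be illustrated with a small coordinate transform. The sketch below is a simplified top-down 2D view and is not taken from Tango or HoloLens: the function names, the yaw-only pose, and the convention that the headset's x axis points forward are all assumptions made for illustration. Given the tracked headset pose, the world-anchored point is re-expressed in the headset frame on every frame, so the object stays put in the room while the user moves.

```python
import math
from typing import Tuple

Vec2 = Tuple[float, float]

def world_to_head(p_world: Vec2, head_pos: Vec2, head_yaw: float) -> Vec2:
    """Express a world-fixed point in the headset frame (top-down 2D view).

    Assumed convention: headset x axis points forward, yaw is measured
    counter-clockwise from the world x axis, in radians.
    """
    dx = p_world[0] - head_pos[0]
    dy = p_world[1] - head_pos[1]
    c, s = math.cos(head_yaw), math.sin(head_yaw)
    # Inverse of the head-to-world rotation: R(yaw)^T applied to (dx, dy).
    return (c * dx + s * dy, -s * dx + c * dy)

def head_to_world(p_head: Vec2, head_pos: Vec2, head_yaw: float) -> Vec2:
    """Inverse mapping: a point in the headset frame back to world coordinates."""
    c, s = math.cos(head_yaw), math.sin(head_yaw)
    return (c * p_head[0] - s * p_head[1] + head_pos[0],
            s * p_head[0] + c * p_head[1] + head_pos[1])

# A hologram anchored 2 m along the world x axis.
anchor = (2.0, 0.0)
view_a = world_to_head(anchor, head_pos=(0.0, 0.0), head_yaw=0.0)
view_b = world_to_head(anchor, head_pos=(2.0, -2.0), head_yaw=math.pi / 2)
```

In both poses the anchor ends up about 2 m straight ahead of the user, even though the headset has moved and turned. Without accurate 6DOF tracking, `head_pos` and `head_yaw` would be wrong and the object would appear to drift.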

Additionally, many companies in China are developing AR products. One notable device resembles HoloLens in both form and functionality and reportedly achieves comparable 6DOF+SLAM performance.

2. Categories of spatial positioning technologies

Spatial tracking and positioning can be broadly divided into two main categories: inside-out and outside-in.

1. Inside-out spatial tracking

Inside-out tracking uses onboard sensors to perceive the external environment, analogous to how human vision perceives surroundings. One major inside-out approach is SLAM. SLAM stands for simultaneous localization and mapping. It enables a device to start in an unknown position, estimate its trajectory while building an incremental map of the environment, and thereby achieve self-localization and navigation. Most AR products today use inside-out positioning.

Representative SLAM-based products include the Lenovo Phab2 Pro (Tango) and Microsoft HoloLens. Both combine depth sensing, fisheye cameras, and IMU fusion for motion tracking; they differ in depth sensing method and the number of fisheye cameras and tracked feature points. Tango uses TOF depth sensing, while HoloLens uses structured light. HoloLens also uses more fisheye cameras and additional structured-light emitters, which improves accuracy and stability, especially in low light or through glass. Tango implementations are evolving to support multiple fisheye cameras.

Lenovo Phab2 Pro uses a TOF depth camera, a 155-degree FOV fisheye motion camera, and an RGB camera. Images from the fisheye camera are fused with IMU data and feature-matched to estimate the motion trajectory, while the TOF point-cloud data provides depth. Tango's area learning allows quick relocalization when previously seen features are recognized, so startup is fast in familiar spaces. The Tango working principle is not detailed here.

Microsoft HoloLens uses a distinctive architecture that includes a dedicated HPU (holographic processing unit) alongside CPU and GPU. It achieves standalone spatial tracking without a PC. HoloLens uses two structured-light depth cameras and four structured-light emitters, plus two fisheye cameras (one per eye side), enabling detection of more environmental feature points and providing higher localization accuracy and stability than Tango.

Another inside-out method is marker-based tracking, which places visual markers such as QR-like patterns, colored shapes, or light points in the environment. The device detects these markers and infers its position and motion. HTC Vive is an example of a marker-based approach in which the external base stations act as the reference and the sensors sit on the headset; its working principle is described below.

Vive's two fixed base stations emit rotating infrared laser scans. Each base station contains an infrared LED array and two rotating emitters oriented along orthogonal axes, producing X and Y scans with a fixed phase relationship. The headset shell has 32 photodiodes arranged on its surface facing different directions. These sensors record when the X and Y scans from each base station arrive. Because the emitters rotate at a known rate, each arrival time corresponds to an angle from the base station, and combining the angles measured at many photodiodes lets the system determine headset position and motion trajectory precisely. Vive controllers use a similar design with multiple photodiodes.
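The timing-to-angle step can be sketched as follows. This is an illustrative model only, not Valve's or HTC's implementation. It assumes a rotor speed of 60 revolutions per second, a synchronization flash from the LED array that marks time zero, and a sweep that crosses the station's forward axis a quarter turn after that flash; none of these values comes from the text above, and all function names are hypothetical.

```python
import math
from typing import Tuple

ROTOR_HZ = 60.0               # assumed rotor speed, revolutions per second
CENTER_OFFSET = math.pi / 2   # assumed: sweep crosses the forward axis a quarter turn after sync

def sweep_angle(t_sync: float, t_hit: float, rotor_hz: float = ROTOR_HZ) -> float:
    """Angle (radians, relative to the forward axis) at which the sweep hit a photodiode."""
    dt = t_hit - t_sync
    if dt < 0:
        raise ValueError("the laser hit must come after the sync flash")
    return 2.0 * math.pi * rotor_hz * dt - CENTER_OFFSET

def ray_from_angles(angle_x: float, angle_y: float) -> Tuple[float, float, float]:
    """Unit direction from the base station toward the photodiode.

    Each sweep constrains the sensor to a plane; intersecting the X and Y
    planes gives a ray whose direction is proportional to (tan ax, tan ay, 1).
    """
    x, y = math.tan(angle_x), math.tan(angle_y)
    n = math.sqrt(x * x + y * y + 1.0)
    return (x / n, y / n, 1.0 / n)

# A photodiode hit exactly a quarter turn after sync on both axes lies
# straight ahead of the base station.
ax = sweep_angle(0.0, 1.0 / 240.0)
ay = sweep_angle(0.0, 1.0 / 240.0)
ray = ray_from_angles(ax, ay)
```

One ray per photodiode is not enough to fix a position on its own. With the known layout of the photodiodes on the headset shell, several such rays constrain the full pose, which is why the sensors face many directions.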

An alternative add-on package from a China-based company provides Vive-like spatial tracking for headsets without native tracking. The package includes a dual-camera module and two controllers with tracking light spheres. The module mounts on the outside of the headset and captures the controllers' light spheres to determine controller positions. The controllers report their six-DOF information via Bluetooth, and the module fuses this pose data with the light-sphere positions to provide full tracking. The approach is reported to work under any ambient lighting with high precision, but it does not map the overall room geometry; it supplies controller positional data so that headsets without native spatial tracking can be upgraded.

2. Outside-in spatial tracking

Outside-in tracking is a mature approach for VR. Sony PSVR and Oculus Rift use similar outside-in schemes. Oculus combines external cameras with active infrared markers for higher precision and faster response. PSVR uses a system derived from PlayStation Move and Kinect-like principles, with external stereo depth cameras for motion recognition and tracking. The headset and controllers carry light indicators or colored spheres, and the external cameras monitor their motion paths. The PlayStation console also receives IMU data from the headset and controllers via Bluetooth and fuses the visual and IMU data to compute full poses and trajectories.
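A common way to fuse a fast but drifting IMU signal with a slower, drift-free camera measurement is a complementary filter. The sketch below shows the idea for a single yaw angle. It is not Sony's or Oculus's algorithm, and the function name, the blend factor `alpha`, and the simulated gyro bias are assumptions chosen for illustration.

```python
from typing import Optional

def fuse_yaw(yaw_est: float, gyro_rate: float, cam_yaw: Optional[float],
             dt: float, alpha: float = 0.98) -> float:
    """One complementary-filter step for a single yaw angle (radians).

    gyro_rate: angular velocity from the IMU (rad/s), fast but biased.
    cam_yaw:   yaw observed by the external camera, or None if the markers
               are not visible in this frame.
    alpha:     hypothetical blend factor; closer to 1 trusts the IMU more.
    """
    # Predict by integrating the gyro over the time step.
    yaw_pred = yaw_est + gyro_rate * dt
    if cam_yaw is None:
        # No visual fix: carry the IMU prediction forward (it will drift).
        return yaw_pred
    # Pull the prediction toward the drift-free camera measurement.
    return alpha * yaw_pred + (1.0 - alpha) * cam_yaw

# Simulate a stationary headset whose gyro has a constant 0.1 rad/s bias.
dt, bias, steps = 0.01, 0.1, 1000
fused = 0.0
imu_only = 0.0
for _ in range(steps):
    fused = fuse_yaw(fused, bias, cam_yaw=0.0, dt=dt)
    imu_only = fuse_yaw(imu_only, bias, cam_yaw=None, dt=dt)
```

After ten simulated seconds the IMU-only estimate has drifted by about one radian, while the fused estimate stays within a few hundredths of a radian of the true value. Production systems typically use more elaborate estimators, but the division of labor between the two sensors is the same.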

Compared with Vive, PSVR's spatial tracking accuracy is lower. Although PSVR offers comfortable ergonomics, lower tracking precision can affect game experience. Sony filed patents indicating a potential next-generation approach similar to fixed emitter methods, which could improve precision and provide full 360-degree tracking without blind spots.

Oculus uses active infrared tracking: IR LEDs on the headset and controllers are captured by external IR cameras to calculate motion trajectories and spatial positions.

One limitation of camera-based outside-in tracking is occlusion: if the user faces away from the external cameras, the cameras may not detect the light points on the headset or controllers, and tracking can be lost. Systems that use rotating base station emitters, such as Vive, are much less affected, because the two base stations cover the play area from different sides.
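The occlusion problem can be made concrete with a simple visibility test. The sketch below is a geometric illustration only: it treats each light point as visible when the angle between its outward normal and the direction to the camera is below a threshold, and it ignores blocking by the user's body. The function names and the 80-degree threshold are assumptions, not values from any product.

```python
import math
from typing import Sequence, Tuple

Vec3 = Tuple[float, float, float]

def led_visible(led_pos: Vec3, led_normal: Vec3, cam_pos: Vec3,
                max_angle_deg: float = 80.0) -> bool:
    """True if the light point faces the camera closely enough to be detected."""
    to_cam = tuple(c - p for c, p in zip(cam_pos, led_pos))
    dist = math.sqrt(sum(v * v for v in to_cam))
    norm = math.sqrt(sum(v * v for v in led_normal))
    if dist == 0.0 or norm == 0.0:
        return False
    cos_angle = sum(a * b for a, b in zip(to_cam, led_normal)) / (dist * norm)
    return cos_angle >= math.cos(math.radians(max_angle_deg))

def count_visible(leds: Sequence[Tuple[Vec3, Vec3]], cam_pos: Vec3) -> int:
    """Number of (position, normal) light points the camera can see."""
    return sum(1 for pos, normal in leds if led_visible(pos, normal, cam_pos))

# Headset with light points only on its front face, camera 2 m in front (+z).
camera = (0.0, 0.0, 2.0)
facing_camera = [((0.0, 0.0, 0.0), (0.0, 0.0, 1.0)),
                 ((0.1, 0.0, 0.0), (0.0, 0.0, 1.0))]
# The same light points after the user turns around: normals now point away.
facing_away = [(pos, (0.0, 0.0, -1.0)) for pos, _ in facing_camera]
```

With the user facing the camera both light points are counted; after the turn none are, which is the tracking-loss case described above. Adding markers to the back of the headset or a second camera on the opposite side are the usual ways to cover that case.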

Another outside-in marker-based approach is to fix dual cameras in the environment and place tracking light spheres on the headset and controllers. The cameras record 3D positions and motion data and send it to the headset via Bluetooth or Wi-Fi for processing and display, enabling free movement and interaction within a virtual environment.
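How a fixed pair of cameras recovers the 3D position of a light sphere can be sketched with standard stereo triangulation. The example assumes an idealized, rectified stereo pair (parallel optical axes, identical focal length, no lens distortion); the function name, parameter names, and the numbers in the usage example are all hypothetical.

```python
from typing import Tuple

def triangulate(u_left: float, u_right: float, v: float,
                focal_px: float, baseline_m: float,
                cx: float, cy: float) -> Tuple[float, float, float]:
    """3D position (meters, left-camera frame) of a point seen by both cameras.

    u_left, u_right: horizontal pixel coordinate of the sphere center in each image.
    v:               vertical pixel coordinate (equal in both images when rectified).
    focal_px:        focal length in pixels; baseline_m: distance between the cameras.
    cx, cy:          principal point (image center) in pixels.
    """
    disparity = u_left - u_right
    if disparity <= 0:
        raise ValueError("disparity must be positive for a point in front of the cameras")
    z = focal_px * baseline_m / disparity   # depth from similar triangles
    x = (u_left - cx) * z / focal_px        # back-project the pixel at depth z
    y = (v - cy) * z / focal_px
    return (x, y, z)

# Sphere seen at column 390 in the left image and 320 in the right image.
pos = triangulate(u_left=390.0, u_right=320.0, v=240.0,
                  focal_px=700.0, baseline_m=0.1, cx=320.0, cy=240.0)
```

With these numbers the sphere sits about 1 m in front of the cameras and 0.1 m to the right of the left camera. Depth is inversely proportional to disparity, so precision degrades as the user moves farther from the cameras, which is one reason such systems have a limited tracking volume.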

ALLPCB
