Introduction

With rapid advances in artificial intelligence, neural networks have shown significant potential and wide applicability across many fields. However, the complexity and scale of neural network models continue to grow, challenging traditional serial computation approaches, such as slow computation and long training times. Therefore, parallel computing and acceleration techniques have become essential to improve performance and efficiency, meeting demands for fast responses and large-scale data processing.

Fundamentals of Parallel Computing for Neural Networks

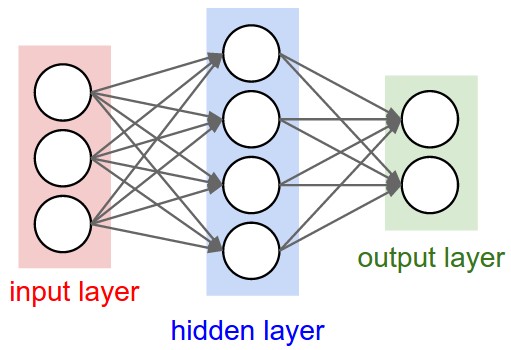

Parallel computing for neural networks refers to dividing the network's computation tasks into multiple subtasks that run concurrently on multiple processing units, thereby increasing overall processing speed. The idea is rooted in the network structure: connections and computations among neurons have a degree of independence and parallelism. For example, in feedforward networks, computations of neurons within a layer can often be executed in parallel because a layer's outputs depend only on the previous layer's outputs and the current layer's weights.

Main Parallelization Approaches

Data parallelism

Data parallelism splits the training dataset into multiple subsets, with each processing unit, such as a GPU or CPU core, handling one subset. Each subset independently performs forward and backward propagation to compute local gradients, which are then aggregated to update the network weights. This approach suits large datasets and makes effective use of hardware parallelism.

Model parallelism

For very large models that cannot fit into a single processing unit, model parallelism assigns different parts of the model to different processing units. For example, different layers or groups of neurons can be placed on separate GPUs. During computation, processing units must communicate to exchange intermediate results and complete forward and backward passes. Model parallelism addresses hardware memory limits but introduces significant communication overhead, requiring careful design and optimization.

Acceleration Techniques for Neural Networks

Hardware acceleration

GPU (graphics processing unit) acceleration: GPUs provide many parallel compute cores suitable for the heavy matrix and vector operations in neural networks. Compared with traditional CPUs, GPUs can handle more computations in the same time frame, substantially accelerating training and inference.

Dedicated accelerators: Examples include Google's TPU (Tensor Processing Unit), which is designed specifically for neural network workloads and offers higher energy efficiency and compute performance. TPUs perform well on large-scale training and inference tasks and can integrate with existing frameworks such as TensorFlow.

Software acceleration

Algorithm optimization: Improving network architectures and computation methods reduces computational complexity and redundant operations. Examples include using more efficient activation functions and optimizing the steps in backpropagation to increase speed without degrading model performance.

Mixed precision computing: Using lower-precision formats for parts of the computation, such as 16-bit floating point instead of 32-bit, can improve computational and storage efficiency with minimal impact on accuracy. Combined with hardware support for mixed precision, this can further accelerate training and inference.

Advantages of Parallel Computing and Acceleration

Faster computation

Parallel computing and hardware acceleration can significantly reduce training time, helping models converge faster and speeding up research and development. In deployment, faster inference can meet real-time requirements in scenarios such as autonomous driving and intelligent surveillance.

Handling large-scale data and models

Parallel computing enables neural networks to process larger datasets and more complex model structures, improving generalization and performance for real-world problems.

Energy and cost savings

Hardware acceleration can increase computational energy efficiency, reducing energy consumption and operating costs for the same workload. Parallel computing also improves hardware utilization, minimizing resource waste.

Challenges and Research Directions

Communication overhead

In parallel and distributed setups, especially model parallelism, communication between processing units can become a performance bottleneck. Designing efficient communication strategies and algorithms to reduce latency and data transfer volume is a key research area. Techniques such as asynchronous communication and compressed data transfers can help optimize communication.

Hardware-software co-optimization

Maximizing hardware acceleration requires tight coordination at the software level. With a wide range of hardware accelerators and programming models, achieving efficient hardware-software collaboration and developing general, user-friendly parallel computing tools remains an open challenge.

Automatic parallelization and optimization

Manual design of parallel strategies and optimizations often demands extensive expertise and must be tailored to specific models and hardware. Research into automatic parallelization techniques and intelligent optimization algorithms that generate efficient parallel plans based on the model and hardware environment can reduce development effort and improve system performance.

Parallel computing and acceleration techniques play a critical role in advancing and deploying artificial intelligence. By selecting appropriate parallelization strategies and acceleration technologies, practitioners can improve computational efficiency and performance to handle growing data volumes and increasing task complexity. The field still faces several challenges that require ongoing collaboration between academia and industry to drive further innovation and capability improvements.

ALLPCB

ALLPCB