The combination of artificial intelligence (AI) and sensors has broad and rapidly developing applications across many fields, particularly in automation, the Internet of Things (IoT), healthcare, smart cities, and Industry 4.0. Sensors collect physical data from the environment, such as temperature, pressure, light, sound, and acceleration, while AI analyzes and processes that data to support intelligent decisions or automatic responses.

Edge AI combined with sensor technologies is advancing quickly and driving innovation in intelligent monitoring and predictive maintenance, smart-city edge computing, autonomous driving and advanced driver-assistance systems (ADAS), smart medical devices, and precision agriculture, supported by Edge AI accelerators and embedded platforms. As hardware and AI algorithms continue to advance, these applications will become more capable and efficient.

Sensors provide the real-world data that AI uses to perceive its environment. There are many sensor types, each offering different physical measurements, and AI can analyze, learn from, and make decisions based on those measurements. Common sensor types that are often combined with Edge AI include image sensors, radar sensors, acoustic sensors, inertial sensors, pressure and strain sensors, environmental sensors, biomedical sensors, LiDAR, and infrared sensors. Combined with sensor fusion and AI techniques, these sensors enable more efficient data analysis and decision-making.

Vision Sensors Help Machine Learning See the World

Vision sensors with embedded machine learning capabilities are demonstrating strong potential in fields such as automation, industrial inspection, smart cities, and healthcare. These sensors run ML models on the device itself, so inference happens locally. By implementing machine learning at the edge, sensors can perform real-time image classification, object recognition, and behavior monitoring without transferring large volumes of data to the cloud.
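To give a rough sense of how much data on-device inference can keep off the network, the sketch below compares an uncompressed video stream with a stream of detection results. All values (resolution, frame rate, detections per frame, bytes per detection) are illustrative assumptions rather than figures for any particular sensor, and real systems usually compress video before sending it.

```python
# Illustrative numbers only: an uncompressed 1080p RGB stream versus
# a compact list of detections produced on the sensor.
WIDTH, HEIGHT, BYTES_PER_PIXEL, FPS = 1920, 1080, 3, 30
DETECTIONS_PER_FRAME = 10   # hypothetical average
BYTES_PER_DETECTION = 16    # hypothetical: class id, score, bounding box

# Bytes per second if every raw frame were sent upstream.
raw_bytes_per_s = WIDTH * HEIGHT * BYTES_PER_PIXEL * FPS

# Bytes per second if only the inference results were sent.
meta_bytes_per_s = DETECTIONS_PER_FRAME * BYTES_PER_DETECTION * FPS

reduction = raw_bytes_per_s / meta_bytes_per_s

print(f"raw video:  {raw_bytes_per_s / 1e6:.1f} MB/s")
print(f"detections: {meta_bytes_per_s / 1e3:.1f} kB/s")
print(f"reduction:  about {reduction:,.0f}x")
```

Under these assumptions the raw stream is about 187 MB/s while the detection stream is about 4.8 kB/s, which is why sending results instead of pixels reduces both bandwidth and the amount of personal imagery leaving the device.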

Many vision sensors include hardware accelerators such as digital signal processors (DSPs), field-programmable gate arrays (FPGAs), or dedicated neural processing units (NPUs) to increase processing speed and reduce power consumption for ML tasks. Some high-end vision sensors can update their models as new data is collected to improve accuracy. For example, in industrial inspection, sensors can continuously learn new defect patterns to optimize product inspection.

Vision sensors with embedded machine learning are used in industrial inspection and automation, public safety and smart cities, smart retail, medical applications, and autonomous driving and ADAS. The deep integration of Edge AI and vision sensors reduces reliance on cloud computing, lowers latency, and improves data privacy. As the technology evolves, sensors may support automated data labeling and model tuning, reducing the need for human intervention and improving deployment efficiency. These sensors are already deployed in many intelligent scenarios and are likely to see further development in the industrial, urban management, medical, and retail domains.

AI Vision Sensors with Embedded ML and Edge Computing

Commercial AI vision sensors commonly provide embedded machine learning and edge computing capabilities and are used across a range of applications. Several representative AI vision sensor platforms are described below.

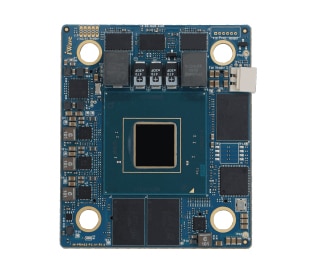

1. NVIDIA Jetson series

The NVIDIA Jetson series of embedded modules integrates GPUs and AI accelerators for machine learning workloads. Variants such as Jetson Nano, Jetson Xavier NX, Jetson AGX Xavier, Jetson Orin, and Jetson TX2 address different application requirements, including autonomous systems, smart cities, robotics, drones, and Edge AI computing. With connected cameras, Jetson modules can be used for object detection, pedestrian recognition, and behavior analysis.

The Jetson series delivers efficient inference performance. On the Xavier and Orin modules, the GPU includes Tensor Cores that provide substantial compute for visual processing tasks, while Jetson Nano and Jetson TX2 rely on CUDA cores alone. Used as an Edge AI platform, a Jetson module can process data from multiple cameras and sensors in real time, reducing latency and cloud dependence for applications that need fast, high-precision computation.

Jetson also has a rich development ecosystem and provides the JetPack SDK, which includes tools for deep learning, computer vision, and GPU acceleration, enabling developers to rapidly develop and deploy AI vision applications.

2. Intel RealSense D400 series

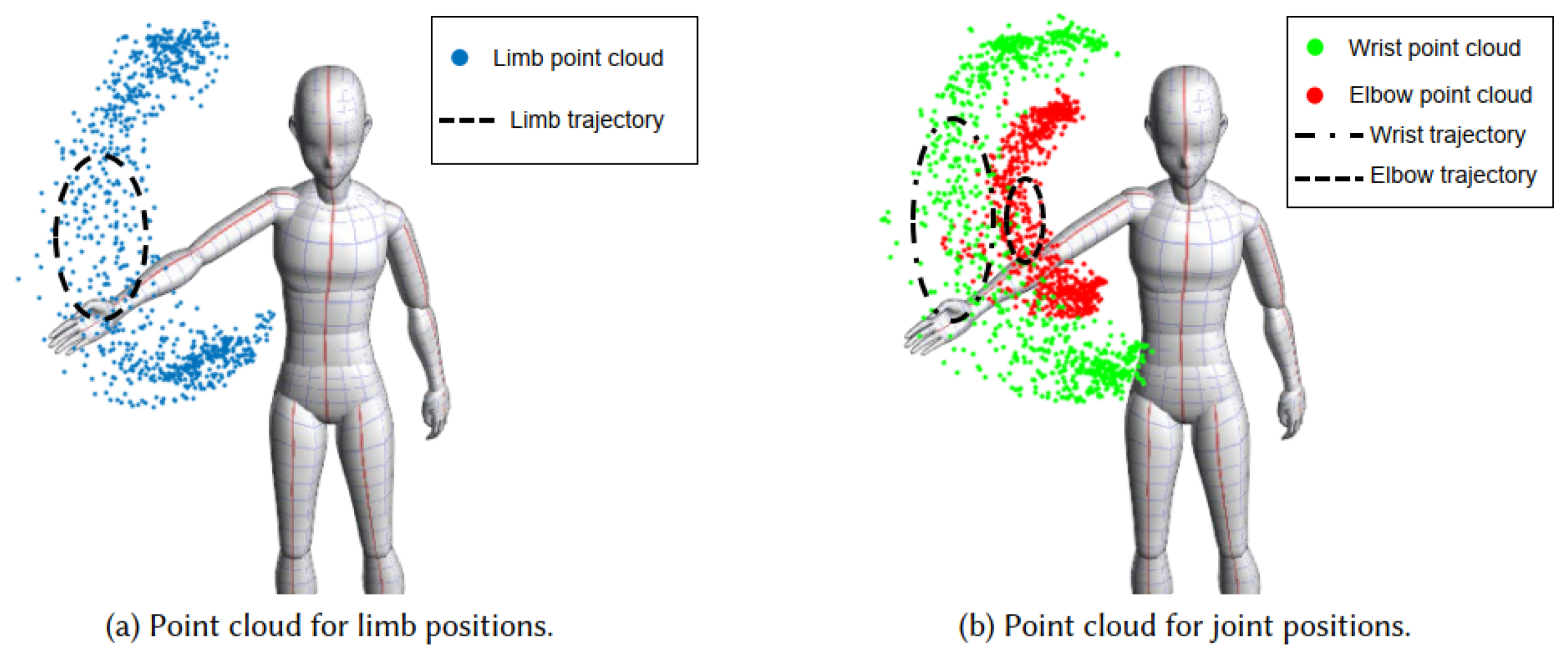

The Intel RealSense D400 series consists of depth cameras that compute depth images in real time. Models such as the D415, D435, and D455 use infrared cameras and stereo vision to support 3D depth detection, and are commonly used for robot navigation, industrial automation, virtual reality, object tracking, and human pose analysis. They support real-time 3D reconstruction and depth perception.

Based on binocular stereo vision, the D400 series uses two cameras and triangulation to generate depth maps, allowing the sensors to perceive object shape and distance for 3D reconstruction and object recognition. They include a dedicated RealSense processing chip for real-time depth computation and are designed for embedded and Edge AI applications, with low power consumption for extended operation. The D400 series performs reliably across varying lighting and environmental conditions and can provide usable depth data at longer ranges, although, as with any stereo system, depth error increases with distance.
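The triangulation step can be illustrated with the standard pinhole stereo relation Z = f · B / d, where f is the focal length in pixels, B is the baseline between the two cameras, and d is the disparity in pixels. The following is a minimal sketch of that relation only. The function name, focal length, and baseline are hypothetical values chosen for easy arithmetic, not RealSense specifications or the RealSense SDK API.

```python
def depth_from_disparity(disparity_px: float,
                         focal_length_px: float,
                         baseline_m: float) -> float:
    """Return depth in metres for one pixel using Z = f * B / d."""
    if disparity_px <= 0:
        # Zero disparity means no stereo match or a point at infinity.
        raise ValueError("disparity must be positive")
    return focal_length_px * baseline_m / disparity_px


# Illustrative values only, not taken from any datasheet.
FOCAL_LENGTH_PX = 640.0
BASELINE_M = 0.05

depth_near = depth_from_disparity(32.0, FOCAL_LENGTH_PX, BASELINE_M)
depth_far = depth_from_disparity(8.0, FOCAL_LENGTH_PX, BASELINE_M)

print(f"disparity 32 px -> {depth_near:.2f} m")
print(f"disparity  8 px -> {depth_far:.2f} m")
```

Because depth is inversely proportional to disparity, a one-pixel disparity error matters far more for distant objects than for near ones, which is one reason a wider baseline helps at longer range.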

In the depth sensing domain, the RealSense D400 series plays an important role. As machine learning and AI models advance, depth sensors will be capable of running more complex models at the edge to enhance real-time perception and analysis.

3. Luxonis OAK-D series

The Luxonis OAK-D series is a family of AI cameras based on the Myriad X VPU that support embedded deep learning models and Edge AI processing. Equipped with stereo cameras and on-board AI accelerators, OAK-D cameras can perform object detection and tracking for applications such as autonomous navigation, industrial robotics, and intelligent monitoring, providing real-time 3D depth perception and AI inference.

OAK-D integrates computer vision and depth sensing for embedded scenarios and emphasizes efficient on-device inference. The cameras combine an AI accelerator, stereo vision, depth sensing, and an RGB camera, and provide open-source support through tools like OpenCV and DepthAI, offering developers a flexible programming environment to develop, test, and deploy AI applications.

The OAK-D product line includes OAK-D, OAK-D Pro, and OAK-D Lite variants that perform real-time AI inference, 3D depth perception, and environmental sensing. With embedded AI acceleration and depth sensing, these cameras are commonly used in embedded AI applications.

4. Himax WiseEye series

The Himax WiseEye series targets low-power AI vision applications and includes models that support tasks such as face recognition, gesture recognition, and object detection while consuming minimal energy. These sensors are suitable for battery-powered devices and are used in IoT endpoints, smart home products, access control systems, and portable devices.

WiseEye devices incorporate low-power image sensing, on-device AI capabilities, and power management techniques to run complex AI tasks at low energy cost. They support real-time on-device inference to avoid data transmission delays, making them suitable for scenarios requiring immediate responses. WiseEye supports flexible hardware interfaces and an open software environment for developer integration.

5. Sony IMX500 series

The Sony IMX500 is a CMOS image sensor that integrates on-sensor AI processing. By embedding an AI processing unit within the image sensor, the IMX500 can perform image analysis and inference locally without relying on external processors or cloud services, reducing latency and preserving data privacy. It is used in smart cameras, retail monitoring, autonomous systems, and smart city applications where reducing data transfer and enabling real-time analysis are important.

The IMX500 includes Sony DSP and NPU components designed for AI inference and image processing, enabling on-device object detection, recognition, and classification without transferring large image datasets to a central processor or the cloud. This local inference capability reduces network bandwidth use and improves processing efficiency.

In addition to AI inference, the IMX500 retains Sony's image processing capabilities to capture high-quality images in low-light and high-contrast environments. The sensor supports multiple deep learning frameworks and models, allowing developers to optimize models for specific applications.

6. Useful Sensors Person Sensor

The Useful Sensors Person Sensor is a compact, low-power AI vision sensor for person detection and basic facial recognition and tracking. Designed for lightweight Edge AI computing, it can detect faces and perform basic face recognition on-device, suitable for embedded systems and IoT applications. The Person Sensor offers a low-power, plug-and-play option for applications such as access control, automation, and smart devices, although its capabilities are limited compared with more advanced vision platforms.

The sensor supports on-device inference to avoid cloud-based processing delays and privacy risks, with built-in models optimized for face detection and recognition. Due to its simplified design, the Person Sensor is focused on basic facial tasks and has limited detection range and field of view, making it unsuitable for large-scale scene coverage.

7. OpenMV Cam H7 Plus

The OpenMV Cam H7 Plus is a low-cost AI vision sensor targeted at embedded machine vision development. It uses an ARM Cortex-M7 microcontroller and supports TensorFlow Lite for model inference, making it suitable for education, IoT, robotics, and DIY projects for tasks such as object recognition, color tracking, and optical character recognition.

The H7 Plus pairs a camera module with a microcontroller to provide local image processing and basic deep learning capabilities. With a 480 MHz STM32H743 ARM Cortex-M7 microcontroller, it can run lightweight ML models such as convolutional neural networks (CNNs) for real-time vision tasks. It supports MicroPython and offers expansion interfaces for integration with other sensors and devices, enabling construction of complex IoT systems. While it provides good value for basic AI tasks, its compute performance and image quality are limited compared to higher-end platforms and may not meet the requirements of applications that demand very high image fidelity.

8. Nicla Vision

Arduino's Nicla Vision is an AI vision sensor board designed for embedded applications to provide real-time object detection, classification, and tracking. It combines AI inference capabilities with a low-power design, making it suitable for edge environments such as IoT devices, smart home systems, automation, and industrial use.

Nicla Vision includes an embedded AI inference engine for efficient edge computation and supports a range of vision tasks from object recognition to scene analysis. Its low-power characteristics make it suitable for battery-powered devices and long-running intelligent equipment. Relative to high-end AI platforms, Nicla Vision is optimized for lightweight vision tasks and may require custom model development and optimization, which assumes developers have some experience with deep learning.

See reference [ABX00051] for additional technical information on Nicla Vision.

Sensor Fusion Enables Efficient AI Data Analysis and Decisions

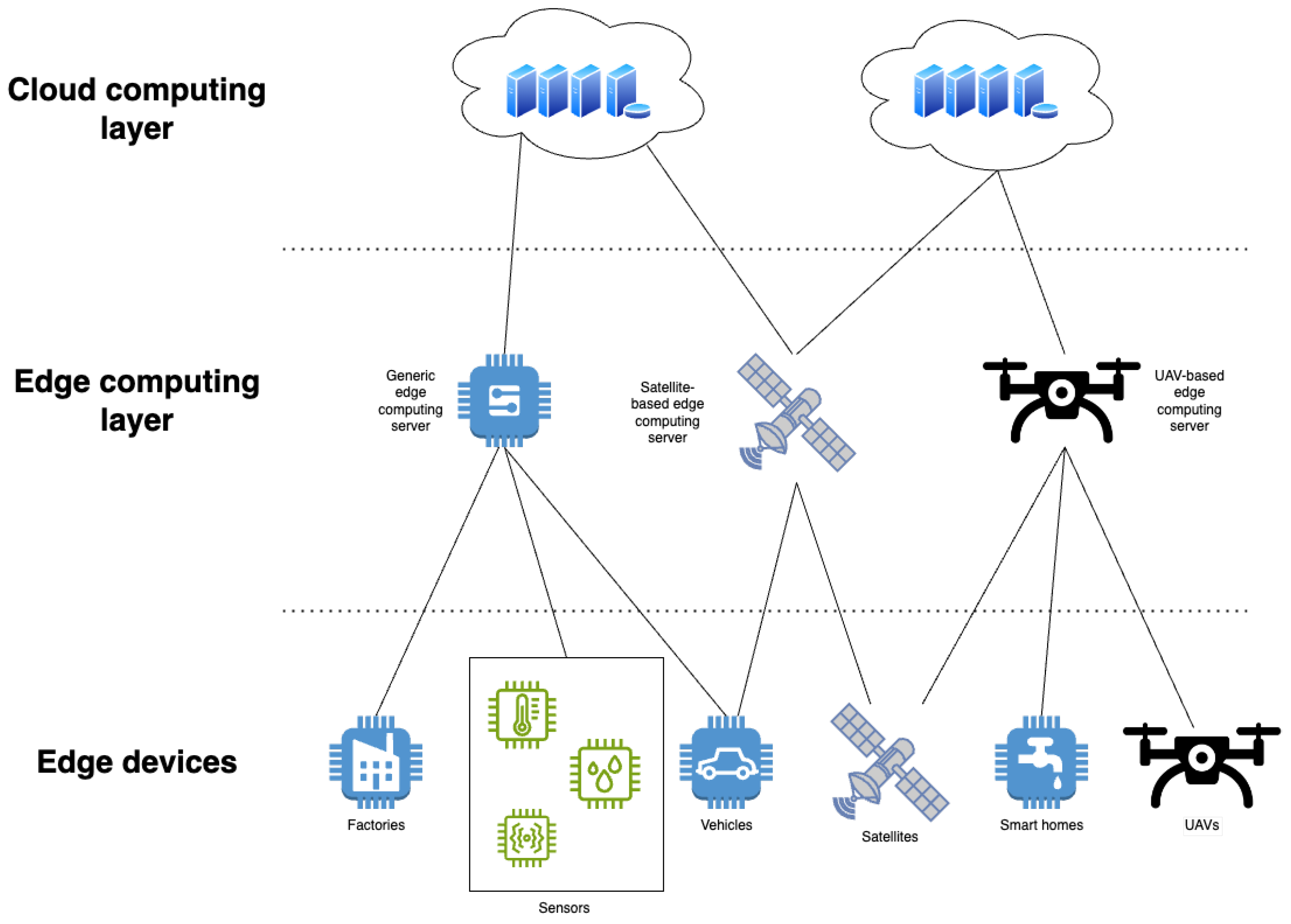

Sensor fusion refers to integrating data from multiple sensor types to improve perception accuracy and reliability. In AI applications, combining sensor fusion with AI techniques enables more efficient data analysis and decision-making. This approach is increasingly important across domains from autonomous driving to smart city systems.

Sensor fusion improves data accuracy and stability because each sensor type has specific strengths and limitations. For example, image sensors provide high-resolution visual detail in sufficient light but perform poorly in low light, while radar can better penetrate fog or rain. Combining data from multiple sensors compensates for individual shortcomings. AI algorithms can process and integrate these multi-source data streams to produce more reliable and accurate results.
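One common way to combine overlapping measurements, shown here as an illustration rather than as the method any particular product uses, is inverse-variance weighting: each sensor's reading is weighted by how much it can be trusted. The sketch assumes independent, roughly Gaussian noise with known variances. The camera and radar values are made up for the example.

```python
def fuse_inverse_variance(measurements):
    """Fuse (value, variance) pairs into one (value, variance) estimate.

    Each reading is weighted by 1 / variance, so a noisier sensor
    contributes less to the fused value.
    """
    if not measurements:
        raise ValueError("need at least one measurement")
    weights = [1.0 / variance for _, variance in measurements]
    total_weight = sum(weights)
    fused_value = sum(
        w * value for w, (value, _) in zip(weights, measurements)
    ) / total_weight
    fused_variance = 1.0 / total_weight
    return fused_value, fused_variance


# Hypothetical range-to-obstacle readings in metres: (value, variance).
camera_range = (10.4, 0.25)  # noisier, e.g. in poor light
radar_range = (10.0, 0.04)   # less affected by fog or rain

fused_range, fused_var = fuse_inverse_variance([camera_range, radar_range])
print(f"fused range: {fused_range:.2f} m, variance: {fused_var:.3f}")
```

The fused estimate lands closer to the radar reading because radar is assumed to be more reliable here, and the fused variance is lower than either input, which is the sense in which fusion compensates for individual shortcomings. If lighting improves, lowering the camera variance shifts the weight back toward the camera.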

Sensor fusion enables multidimensional perception because different sensors capture complementary information: image sensors provide visible-light images, acoustic sensors capture sound, and infrared sensors measure thermal radiation. By fusing these data, AI systems obtain richer environmental representations and can perform more precise analysis. For example, autonomous vehicles fuse camera, radar, and LiDAR data to achieve accurate localization and obstacle avoidance in complex environments.

Sensor fusion can also improve decision and response speed. AI systems that analyze fused, multi-modal data can perform comprehensive analysis in short timeframes and deliver faster responses. In healthcare, combining biomedical sensor data such as heart rate, blood oxygen, and blood pressure with environmental sensors allows AI to assess patient status quickly and provide real-time alerts.

Combined with AI advances, sensor fusion supports more intelligent applications such as smart homes, intelligent transportation, and urban monitoring. For example, fusing pressure and strain sensor data with image and environmental sensor data can enable automatic recognition of object shape, size, and environmental changes, improving the responsiveness of robots and automation systems.

Sensor fusion is an important driver for AI application development and can accelerate progress in autonomous driving, healthcare monitoring, smart cities, Industry 4.0, and intelligent robotics. Challenges remain, including data synchronization, processing complexity, algorithm optimization, and low-power design. As edge computing grows, on-device processing of sensor data combined with AI will further enhance real-time analysis capabilities and enable additional real-time applications.
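The data synchronization challenge can be made concrete with a small example. Sensors typically sample at different rates, so readings must be aligned in time before they are fused. The sketch below pairs each camera frame with the nearest radar sample and drops pairs whose timestamps differ by more than a tolerance. The function name, timestamps, and tolerance are hypothetical, and real systems often add hardware triggering or interpolation on top of this.

```python
from bisect import bisect_left


def pair_by_timestamp(camera_ts, radar_ts, max_skew_s):
    """Pair each camera timestamp with the nearest radar timestamp.

    Both lists must be sorted in ascending order. Pairs whose time
    difference exceeds max_skew_s are discarded.
    """
    pairs = []
    for t in camera_ts:
        i = bisect_left(radar_ts, t)
        # Only the samples just before and just after t can be nearest.
        candidates = radar_ts[max(i - 1, 0): i + 1]
        if not candidates:
            continue
        nearest = min(candidates, key=lambda r: abs(r - t))
        if abs(nearest - t) <= max_skew_s:
            pairs.append((t, nearest))
    return pairs


# Hypothetical timestamps in seconds: camera near 30 Hz, radar near 20 Hz.
camera_ts = [0.000, 0.033, 0.066, 0.100]
radar_ts = [0.005, 0.055, 0.105]

pairs = pair_by_timestamp(camera_ts, radar_ts, max_skew_s=0.010)
for cam_t, radar_t in pairs:
    print(f"camera {cam_t:.3f} s <-> radar {radar_t:.3f} s")
```

With a 10 ms tolerance only the first and last camera frames find a radar partner. Loosening the tolerance yields more pairs but fuses data that describe slightly different moments, which is the trade-off behind this challenge.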

Conclusion

Sensors combined with AI are evolving rapidly. These sensors not only collect data but also enable real-time processing, analysis, and prediction through AI, improving automation and intelligence across industries. With advances in edge computing, 5G networks, and AI algorithms, the integration of sensors and AI will play an increasingly significant role across more application areas.

The combination of sensor fusion and AI is a key trend for intelligent applications. By fusing data from different sensor types, AI systems can obtain more accurate and comprehensive information and make more effective decisions. This technology has broad prospects in autonomous driving, healthcare, smart cities, and industrial automation, and will continue to support technological development across these fields.

ALLPCB