Overview

Multitouch interfaces are part of everyday use on smartphones and tablet computers. When you swipe a screen or pinch to zoom an image, the device senses those finger movements in real time. This article explains the basic concepts and common implementation methods of multitouch technology.

What is multitouch

Multitouch is an input technique that allows a user to control graphical applications with multiple fingers. It combines human-computer interaction methods with hardware sensors so users can interact without traditional peripherals such as a mouse or keyboard.

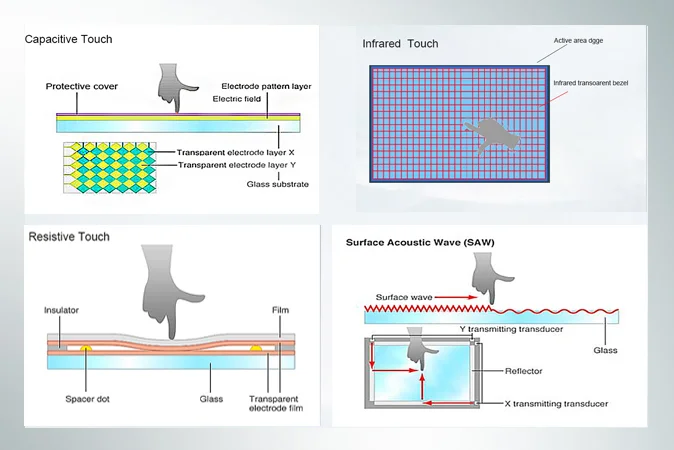

Physical sensing mechanisms

Technologies that let a system detect physical touch include temperature sensing, force sensing, high-speed cameras, infrared sensing, optical sensing, resistance changes, ultrasonic receivers, microphones, laser amplitude sensors, and shadow sensing.

Common multitouch implementations

LLP (laser light plane). Introduced by Microsoft in the LaserTouch project and later developed by community groups, this approach projects a thin plane of infrared laser light across the screen, just above its surface. When a finger interrupts the plane, it scatters infrared light, which a camera below the screen captures. The captured image is then analyzed to determine touch events.

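FTIR (frustrated total internal reflection). LEDs shine light into a transparent layer in the screen, where it stays trapped by total internal reflection. When a user presses the surface, the contact disturbs ("frustrates") that reflection and light escapes at the point of contact. Sensors, typically a camera, detect the change in light and localize the contact point.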

TouchLight. Developed by Microsoft, TouchLight uses a projection-based method to illuminate the interaction area with infrared. When the projected field is interrupted, cameras capture the reflection and the system processes the data to respond.
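The camera-based approaches above share a common processing step: finding bright regions in an infrared image and reducing each one to a touch coordinate. The following is a minimal sketch of that step, not the method used by any of the named systems. It assumes the camera frame is already available as a 2D list of brightness values (0 to 255); the function name `find_touch_centroids` and the parameters `threshold` and `min_pixels` are hypothetical and chosen for illustration.

```python
from collections import deque


def find_touch_centroids(frame, threshold=128, min_pixels=2):
    """Return (x, y) centroids of bright blobs in a grayscale frame.

    frame      -- 2D list of brightness values, frame[row][col]
    threshold  -- pixels at or above this value count as "lit" (assumed value)
    min_pixels -- blobs smaller than this are treated as noise (assumed value)
    """
    rows, cols = len(frame), len(frame[0])
    seen = [[False] * cols for _ in range(rows)]
    centroids = []

    for r in range(rows):
        for c in range(cols):
            if seen[r][c] or frame[r][c] < threshold:
                continue

            # Flood-fill one connected blob of lit pixels (4-connectivity).
            queue = deque([(r, c)])
            seen[r][c] = True
            pixels = []
            while queue:
                pr, pc = queue.popleft()
                pixels.append((pr, pc))
                for nr, nc in ((pr + 1, pc), (pr - 1, pc), (pr, pc + 1), (pr, pc - 1)):
                    if (0 <= nr < rows and 0 <= nc < cols
                            and not seen[nr][nc] and frame[nr][nc] >= threshold):
                        seen[nr][nc] = True
                        queue.append((nr, nc))

            # Keep the blob only if it is large enough to be a finger.
            if len(pixels) >= min_pixels:
                cy = sum(p[0] for p in pixels) / len(pixels)
                cx = sum(p[1] for p in pixels) / len(pixels)
                centroids.append((cx, cy))

    return centroids


if __name__ == "__main__":
    frame = [
        [0, 0, 0, 0, 0, 0],
        [0, 200, 200, 0, 0, 0],
        [0, 200, 200, 0, 0, 0],
        [0, 0, 0, 0, 0, 0],
        [0, 0, 0, 0, 220, 220],
        [0, 0, 0, 0, 0, 0],
    ]
    print(find_touch_centroids(frame))  # [(1.5, 1.5), (4.5, 4.0)]
```

A real pipeline would typically also subtract a background image and track blobs from frame to frame; both are left out here to keep the sketch short.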

Optical touch. Cameras or optical sensors mounted at the top edges of the display capture gestures and touch locations. The captured information is converted into coordinates for further processing.
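One common way to convert optical sensor readings into coordinates is triangulation. The sketch below assumes a specific layout that the article does not specify: two sensors at the top-left and top-right corners of the display, each reporting the angle between the top edge and its line of sight to the touch. The function name `triangulate` and its parameters are illustrative only.

```python
import math


def triangulate(width, angle_left, angle_right):
    """Locate a touch from two corner sensors (assumed layout).

    The left sensor sits at (0, 0) and the right sensor at (width, 0).
    Each angle is measured in radians from the top edge down toward the
    touch, so tan(angle_left) = y / x and tan(angle_right) = y / (width - x).
    Returns (x, y) with y increasing downward from the top edge.
    """
    ta = math.tan(angle_left)
    tb = math.tan(angle_right)
    if ta + tb == 0:
        raise ValueError("touch lies on the top edge; angles give no depth")
    x = width * tb / (ta + tb)
    y = x * ta
    return x, y


if __name__ == "__main__":
    # A touch at (30, 40) on a display 100 units wide.
    a_left = math.atan2(40, 30)
    a_right = math.atan2(40, 100 - 30)
    print(triangulate(100, a_left, a_right))
```

With two simultaneous touches, each sensor sees two angles, and pairing them up correctly raises the same kind of ambiguity that affects axis-based sensing.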

Simple multitouch gestures and the XY-axis method

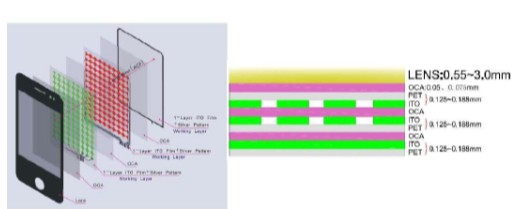

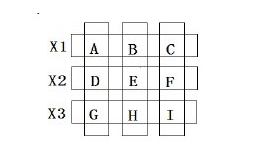

Basic multitouch gestures, such as a two-finger pinch or rotate, can be recognized from the directions in which two contact points move, even if their exact positions are not fully resolved. A simple implementation uses separate X and Y sensing channels. For example, dividing the conductive ITO (indium tin oxide) layer into X and Y axes lets the system sense that two touches are present. Sensing a touch and precisely localizing it, however, are distinct problems.
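As an illustration of how little information a pinch or rotate needs, the sketch below classifies a two-finger gesture from only the separation and orientation of the two contacts, ignoring where on the screen they are. It assumes the controller reports two points per frame; the function name `classify_two_finger_gesture`, the labels it returns, and the tolerance values are hypothetical choices, not part of any specific touch controller.

```python
import math


def classify_two_finger_gesture(start, end,
                                scale_tol=0.15,
                                angle_tol=math.radians(10)):
    """Classify a gesture from two frames of two contact points.

    start, end -- ((x1, y1), (x2, y2)) at the beginning and end of the motion
    scale_tol  -- minimum relative change in finger separation (assumed value)
    angle_tol  -- minimum change in orientation, in radians (assumed value)
    Returns "pinch", "spread", "rotate", or "none".
    """
    (a0, b0), (a1, b1) = start, end

    d0 = math.dist(a0, b0)
    d1 = math.dist(a1, b1)
    scale = d1 / d0 if d0 > 0 else 1.0

    # Orientation of the line joining the two fingers, before and after.
    ang0 = math.atan2(b0[1] - a0[1], b0[0] - a0[0])
    ang1 = math.atan2(b1[1] - a1[1], b1[0] - a1[0])
    # Wrap the difference into [-pi, pi) so small rotations stay small.
    dang = (ang1 - ang0 + math.pi) % (2 * math.pi) - math.pi

    # Express both changes as multiples of their tolerance and compare.
    scale_score = abs(scale - 1.0) / scale_tol
    angle_score = abs(dang) / angle_tol

    if max(scale_score, angle_score) < 1.0:
        return "none"
    if angle_score > scale_score:
        return "rotate"
    return "spread" if scale > 1.0 else "pinch"


if __name__ == "__main__":
    print(classify_two_finger_gesture(((0, 0), (10, 0)), ((0, 0), (20, 0))))  # spread
    print(classify_two_finger_gesture(((0, 0), (10, 0)), ((0, 0), (5, 0))))   # pinch
    print(classify_two_finger_gesture(((0, 0), (10, 0)), ((0, 0), (0, 10))))  # rotate
    print(classify_two_finger_gesture(((0, 0), (10, 0)), ((1, 1), (11, 1))))  # none
```

Because the result depends only on relative quantities, moving both fingers together by the same amount (the last call) is not reported as a pinch or a rotation.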

With single-touch input, each axis typically shows one distinct peak, and the two peaks together identify the touch coordinate. With two simultaneous touches, each axis may show two peaks. Two X peaks and two Y peaks are consistent with two different pairs of touch points, the real pair and a "ghost" pair on the opposite diagonal, so the axis data alone cannot tell them apart. Some systems attempt to resolve this by using timing information, assuming touches are not truly simultaneous. However, simultaneous touches still present ambiguity for purely axis-based systems.
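The ambiguity can be shown in a few lines. The sketch below models each axis as simply the set of coordinates where a peak appears, which is an idealization of real sensor data. It shows that two different touch pairs produce identical axis readings, and how knowing which touch landed first removes the ambiguity. The function names `axis_peaks`, `candidate_points`, and `resolve_with_first_touch` are hypothetical.

```python
from itertools import product


def axis_peaks(touches):
    """Idealized XY-axis readout: the peak positions seen on each axis."""
    xs = sorted({x for x, _ in touches})
    ys = sorted({y for _, y in touches})
    return xs, ys


def candidate_points(xs, ys):
    """Every (x, y) intersection that is consistent with the axis peaks."""
    return list(product(xs, ys))


def resolve_with_first_touch(first, xs, ys):
    """Use timing: if the first touch is already known, the second touch
    takes the remaining peak on each axis (or shares the peak if there is
    only one on that axis)."""
    other_x = [x for x in xs if x != first[0]] or [first[0]]
    other_y = [y for y in ys if y != first[1]] or [first[1]]
    return (other_x[0], other_y[0])


if __name__ == "__main__":
    real_pair = [(2, 7), (5, 3)]
    ghost_pair = [(2, 3), (5, 7)]

    # Both pairs produce exactly the same peaks on each axis.
    print(axis_peaks(real_pair) == axis_peaks(ghost_pair))  # True

    xs, ys = axis_peaks(real_pair)
    print(candidate_points(xs, ys))  # [(2, 3), (2, 7), (5, 3), (5, 7)]

    # If (2, 7) was seen alone before the second finger landed:
    print(resolve_with_first_touch((2, 7), xs, ys))  # (5, 3)
```

Note that the distance between the two touches is the same for the real pair and the ghost pair, which is one reason a pinch can still be recognized on such hardware even when the exact positions cannot.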

Applications and considerations

Multitouch interfaces are being explored in new application areas. Examples include using automobile windshields as information displays and gesture-controlled interfaces for vehicle systems. Such approaches could enable new interaction models, but safety, distraction, and usability implications need careful evaluation before deployment.

Other research applies touch sensing to athlete training, using predefined motion patterns to monitor performance. Compared with camera-based methods, direct touch sensing can offer simpler and more structured measurement in some scenarios. As research continues, additional practical applications are likely to emerge.

ALLPCB
