Overview

Microprocessors are used ever more widely in artificial intelligence (AI) and are an important driver of the technology's development. This article examines their core roles in AI, representative application cases, technical challenges and mitigation approaches, and future trends, with the goal of providing a clear technical overview of how they are applied and why they matter.

1. Core roles of microprocessors in AI

1.1 Providing computing power

The microprocessor is the central component of a computing system, and its computing capability directly affects the processing speed and efficiency of any AI system built on it. As AI technologies advance, demand for computing power grows, especially for compute-heavy workloads such as deep learning. Modern microprocessors raise their computing capability through advanced process nodes, multicore architectures, large caches, and other techniques to meet the high-performance computing needs of AI systems.

1.2 Optimizing algorithm execution

AI systems are built on algorithms, and microprocessors execute them. Through specialized instruction sets and hardware accelerators, a microprocessor can be optimized for specific classes of AI algorithms to improve execution efficiency. For example, compute-intensive deep learning operations such as matrix multiplication and convolution can be offloaded to integrated vector processing units (VPUs), tensor processing units (TPUs), and similar accelerators, which significantly increases execution speed.
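One reason matrix-multiply hardware helps with convolution is that a convolution can be rewritten as a single matrix multiplication. The sketch below illustrates that idea in plain Python using the widely used "im2col" rearrangement. It is a teaching example, not how any particular accelerator is programmed; the function names and the sample `image` and `kernel` values are assumptions made for illustration.

```python
def matmul(a, b):
    """Multiply matrix a (m x k) by matrix b (k x n), both given as nested lists."""
    rows, inner, cols = len(a), len(b), len(b[0])
    return [[sum(a[i][k] * b[k][j] for k in range(inner)) for j in range(cols)]
            for i in range(rows)]


def conv2d_direct(image, kernel):
    """Valid-mode 2D cross-correlation (the 'convolution' used in deep learning)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [[sum(image[r + i][c + j] * kernel[i][j]
                 for i in range(kh) for j in range(kw))
             for c in range(out_w)]
            for r in range(out_h)]


def conv2d_as_matmul(image, kernel):
    """Same result as conv2d_direct, expressed as one matrix multiplication."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    # Each row of `patches` is one flattened kh x kw window of the image.
    patches = [[image[r + i][c + j] for i in range(kh) for j in range(kw)]
               for r in range(out_h) for c in range(out_w)]
    # The kernel becomes a (kh*kw) x 1 column vector.
    weights = [[kernel[i][j]] for i in range(kh) for j in range(kw)]
    flat = matmul(patches, weights)  # shape: (out_h*out_w) x 1
    return [[flat[r * out_w + c][0] for c in range(out_w)] for r in range(out_h)]


# Illustrative data only (not taken from the article).
image = [[1, 2, 3, 0],
         [4, 5, 6, 1],
         [7, 8, 9, 2]]
kernel = [[1, 0],
          [0, -1]]

direct_result = conv2d_direct(image, kernel)
matmul_result = conv2d_as_matmul(image, kernel)
```

Both functions return the same output, so hardware that is fast at the `matmul` step can speed up convolution as well, at the cost of the extra memory used by `patches`.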

1.3 Enabling low-power design

Many AI devices must operate for extended periods, so low-power design is critical. Microprocessors reduce power consumption through techniques such as dynamic voltage and frequency scaling (DVFS) and low-power standby modes, and by integrating power management units (PMUs). These measures lower device power draw and extend operating time, which is especially important for portable AI devices such as smartphones and wearables.
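To see why scaling voltage and frequency together saves so much energy, it helps to use the standard first-order model of CMOS dynamic power, P ≈ α·C·V²·f. The sketch below applies that model to a small table of operating points. The capacitance value, the frequency/voltage pairs, and the selection policy are all hypothetical and chosen only for illustration; real processors expose their own operating points and governors.

```python
def dynamic_power(capacitance_f, voltage_v, frequency_hz, activity=1.0):
    """First-order CMOS dynamic power model: P = activity * C * V^2 * f (watts)."""
    return activity * capacitance_f * voltage_v ** 2 * frequency_hz


# Hypothetical (frequency in Hz, core voltage in V) operating points.
OPERATING_POINTS = [(0.6e9, 0.70), (1.2e9, 0.85), (2.0e9, 1.00)]


def pick_operating_point(required_hz, points=OPERATING_POINTS):
    """Return the slowest point that still meets the demand, else the fastest one."""
    for freq, volt in sorted(points):
        if freq >= required_hz:
            return freq, volt
    return max(points)


# Assumed switched capacitance of 1 nF, for illustration only.
C_EFF = 1e-9
low_power = dynamic_power(C_EFF, 0.70, 0.6e9)
high_power = dynamic_power(C_EFF, 1.00, 2.0e9)
power_ratio = low_power / high_power  # fraction of peak power used at the lowest point
```

Under these assumed numbers the lowest operating point draws roughly 15% of the peak dynamic power while still delivering 30% of the peak clock rate, because power falls with the square of the voltage as well as linearly with frequency.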

2. Application examples

2.1 Smart home

The smart home is a major application area for AI. Microprocessors act as the central controllers in smart home systems, receiving data from various sensors and processing it with AI algorithms to enable automation and intelligent management. For example, a smart lock can implement fingerprint unlocking with an integrated microprocessor and fingerprint sensor, and a smart speaker can provide voice interaction by pairing a microprocessor with speech recognition algorithms.

2.2 Autonomous driving

Autonomous vehicles are another key AI application. They must process real-time data from cameras, radar, lidar, and other sensors, and perform complex decision-making and control. Microprocessors are crucial in these systems, handling sensor data and running onboard AI for path planning, object detection, and collision avoidance. Microprocessors also need to communicate and exchange data in real time with other vehicle control systems to ensure safety and stability.

2.3 Smart manufacturing

Smart manufacturing, a component of Industry 4.0, is an important industrial application of AI. Microprocessors are widely used in intelligent equipment and production lines, collecting and processing production data in real time to enable automated control and intelligent management. For example, industrial robots use embedded microprocessors and sensors for precise control and autonomous navigation; production lines leverage microprocessors and IoT technologies for real-time data acquisition and analysis to optimize workflows and improve efficiency.

3. Technical challenges and solutions

3.1 Explosive data volumes

The growth of AI brings rapidly increasing data volumes, which place higher demands on the data-processing capabilities of microprocessors. To address this, manufacturers introduce products with higher performance and larger memory capacity, and optimize algorithms and architectures to improve data-processing efficiency. Distributed computing and cloud computing also provide ways to handle large datasets that exceed what a single device can process.

3.2 Power consumption

Because many AI devices must run for long periods, often on battery power, energy consumption is a persistent constraint. To reduce it, microprocessor designers employ various low-power techniques and optimize algorithms and architectures to lower processor power draw. Energy-efficient power management solutions also help reduce overall system power consumption.

3.3 Security and privacy

Widespread AI deployment raises security and privacy concerns. Because the microprocessor is a core component of an AI system, its security and reliability directly affect the safety of the whole system. To protect system integrity, manufacturers incorporate security measures during design and production, and use built-in security chips and cryptographic algorithms to secure data transmission and storage.
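As one small illustration of using cryptographic algorithms to protect transmitted data, the sketch below authenticates a sensor message with HMAC-SHA256 from Python's standard library. This protects integrity and authenticity only, not confidentiality, and it is an assumed example rather than a scheme described in this article. In a real device the key would typically be provisioned into and held by secure hardware; here it is generated in-process, and the message contents are made up.

```python
import hashlib
import hmac
import secrets


def sign(key: bytes, message: bytes) -> bytes:
    """Return an HMAC-SHA256 tag that binds the message to the shared key."""
    return hmac.new(key, message, hashlib.sha256).digest()


def verify(key: bytes, message: bytes, tag: bytes) -> bool:
    """Recompute the tag and compare in constant time to resist timing attacks."""
    return hmac.compare_digest(sign(key, message), tag)


# Assumption: a 256-bit key shared between the device and the receiver.
device_key = secrets.token_bytes(32)

# Hypothetical sensor payload.
reading = b'{"sensor": "door", "state": "locked"}'
tag = sign(device_key, reading)

accepted = verify(device_key, reading, tag)
tampered = verify(device_key, reading.replace(b"locked", b"open"), tag)
```

A receiver holding the same key accepts the original message and rejects the modified one, so tampering in transit or in storage can be detected.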

4. Future trends

4.1 Proliferation of heterogeneous computing

As AI application scenarios grow in scope and complexity, single-purpose microprocessors struggle to meet all requirements. Heterogeneous computing architectures will become a mainstream approach, combining different processor types such as CPUs, GPUs, FPGAs, and TPUs into a unified platform to handle diverse workloads. This approach leverages the strengths of each processor type to improve overall performance and efficiency.
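The idea of routing each workload to the processor type that suits it can be sketched as a simple lookup with a fallback. Everything in this example is hypothetical: the task categories, the preference table, and the rule of falling back to the CPU are assumptions for illustration, and real heterogeneous runtimes make this decision using far more information, such as data location, queue depth, and power state.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    name: str
    kind: str  # one of: "control", "parallel", "streaming", "tensor"


# Assumed mapping from workload type to the processor that usually suits it.
PREFERRED_DEVICE = {
    "control": "CPU",     # branch-heavy, latency-sensitive logic
    "parallel": "GPU",    # wide data-parallel arithmetic
    "streaming": "FPGA",  # fixed-function pipelines on streaming data
    "tensor": "TPU",      # dense matrix and tensor operations
}


def assign(tasks, available):
    """Map each task name to its preferred device, falling back to the CPU."""
    plan = {}
    for task in tasks:
        device = PREFERRED_DEVICE.get(task.kind, "CPU")
        plan[task.name] = device if device in available else "CPU"
    return plan


tasks = [
    Task("path_planning", "control"),
    Task("image_filter", "parallel"),
    Task("sensor_pipeline", "streaming"),
    Task("object_detection", "tensor"),
]

full_platform = assign(tasks, {"CPU", "GPU", "FPGA", "TPU"})
small_platform = assign(tasks, {"CPU", "GPU"})
```

On the smaller platform, the streaming and tensor tasks fall back to the CPU, which keeps the system working but gives up the efficiency a specialized unit would provide.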

4.2 Rise of customized chips

With increasingly diverse and specialized AI application requirements, customized chips will gain importance. Application-specific chips can be designed and optimized for particular use cases to achieve higher performance and lower power consumption, making them well suited for domains such as healthcare, finance, and education.

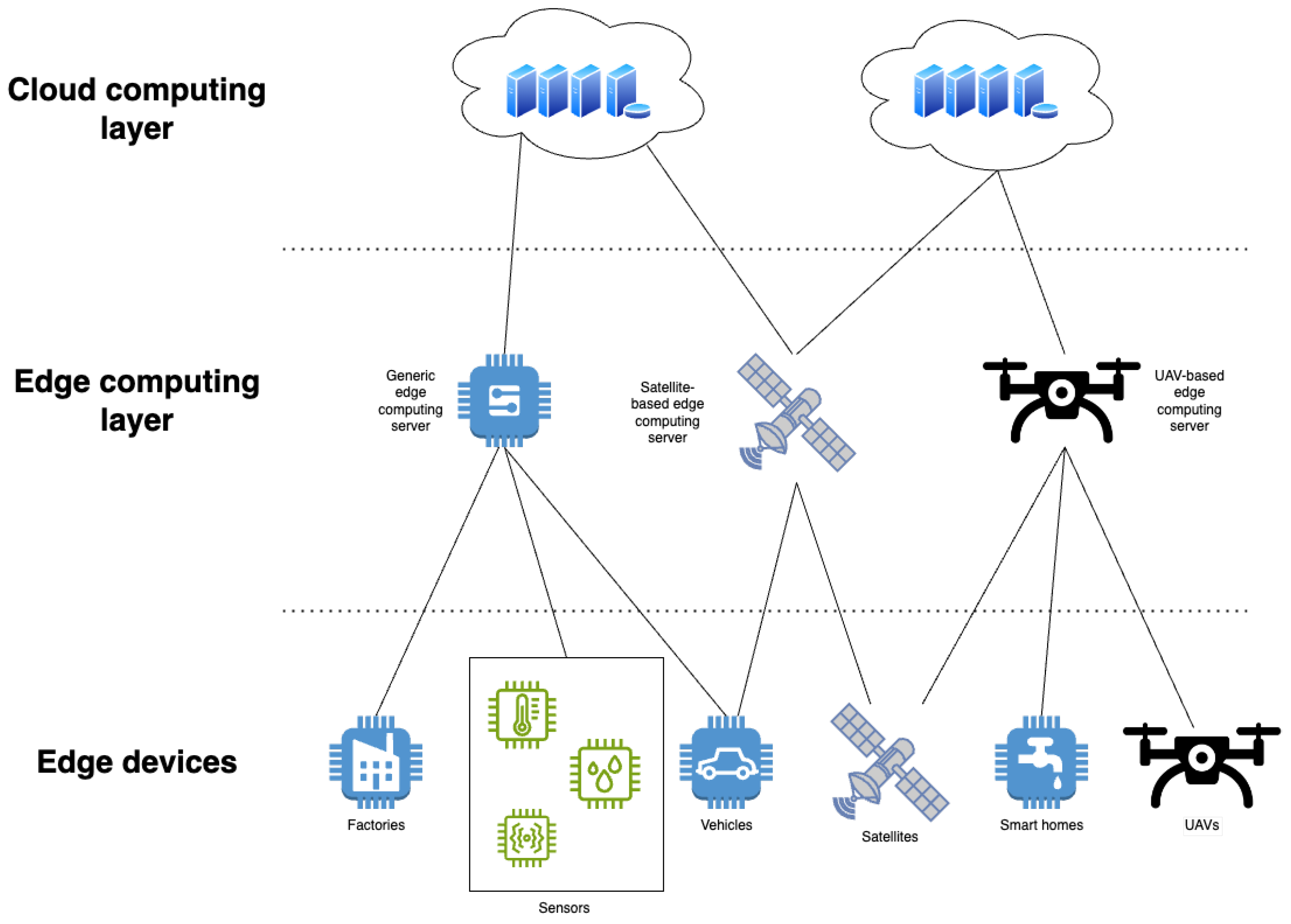

4.3 Growth of edge computing

As IoT technologies expand, edge computing will become a key trend. Edge computing moves computation and data processing toward the network edge, namely devices or local data centers, to reduce latency, increase responsiveness, and protect data privacy. Microprocessors for edge devices must provide high efficiency, low power consumption, and robust data-processing capabilities to run complex AI algorithms locally.

4.4 Exploration of neuromorphic computing

Neuromorphic computing, which models the brain's neurons and synaptic connections, offers a potential path to more efficient and intelligent computation. Applications of microprocessors in neuromorphic computing are still nascent, but research institutions and companies are exploring this area. Neuromorphic microprocessors designed with biologically inspired computing units and architectures may achieve lower power use, higher parallelism, and improved learning capabilities.
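A common starting point for biologically inspired computing units is the leaky integrate-and-fire (LIF) neuron, which accumulates input, slowly loses charge, and emits a spike when a threshold is crossed. The sketch below is a minimal discrete-time version. The article does not name a specific neuron model, so the choice of LIF and the parameter values (`threshold`, `leak`, `reset`) are assumptions for illustration.

```python
def simulate_lif(input_current, threshold=1.0, leak=0.9, reset=0.0):
    """Simulate one leaky integrate-and-fire neuron over discrete time steps.

    Returns a list with 1 where the neuron spiked and 0 elsewhere.
    """
    potential = reset
    spikes = []
    for current in input_current:
        # Leak a fraction of the stored potential, then integrate the new input.
        potential = leak * potential + current
        if potential >= threshold:
            spikes.append(1)
            potential = reset  # fire and reset the membrane potential
        else:
            spikes.append(0)
    return spikes


# Constant illustrative input: the neuron charges for two steps, then fires.
steady_spikes = simulate_lif([0.5] * 6)

# With no input the neuron stays silent, so no spikes are produced.
silent_spikes = simulate_lif([0.0] * 6)
```

Because the neuron only produces output when it spikes, hardware built from such units can stay idle when inputs are quiet, which is one source of the low power use described above.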

4.5 Integration with quantum computing

Quantum computing remains experimental, but its potential for vast computational power has attracted significant interest. As quantum technologies mature, integration between traditional microprocessors and quantum processors may become feasible. Quantum microprocessors would exploit qubits' superposition and entanglement to perform computations beyond the capabilities of classical processors, requiring new design paradigms and engineering approaches.
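Superposition and entanglement can be illustrated on a classical machine by simulating a two-qubit state vector. The sketch below prepares a Bell state with a Hadamard gate followed by a CNOT gate. It is a textbook simulation written for this overview, not a quantum programming API, and the function names are assumptions.

```python
import math


def apply_hadamard_q0(state):
    """Apply a Hadamard gate to qubit 0 of a 2-qubit state [a00, a01, a10, a11].

    Qubit 0 is the left bit of each basis label.
    """
    s = 1 / math.sqrt(2)
    a00, a01, a10, a11 = state
    return [s * (a00 + a10), s * (a01 + a11), s * (a00 - a10), s * (a01 - a11)]


def apply_cnot_q0_q1(state):
    """Apply CNOT with qubit 0 as control and qubit 1 as target.

    This swaps the amplitudes of |10> and |11>.
    """
    a00, a01, a10, a11 = state
    return [a00, a01, a11, a10]


def probabilities(state):
    """Measurement probabilities for the outcomes 00, 01, 10, 11."""
    return [abs(amplitude) ** 2 for amplitude in state]


# Start in |00>, put qubit 0 in superposition, then entangle it with qubit 1.
initial = [1, 0, 0, 0]
bell = apply_cnot_q0_q1(apply_hadamard_q0(initial))
bell_probs = probabilities(bell)
```

The resulting state yields only the outcomes 00 and 11, each with probability about one half, so measuring one qubit fixes the other. Note that the classical state vector doubles in size with every added qubit, which is why such simulations do not scale and why dedicated quantum hardware is of interest.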

5. Conclusion

Microprocessors, as the central components of computing systems, play an increasingly broad role in artificial intelligence. They provide computing power, optimize algorithm execution, and enable low-power designs, all of which support the advancement of AI technologies. As technologies and application scenarios evolve, microprocessor applications in AI will become more diverse and specialized. Continued innovation in design and cross-disciplinary collaboration will be necessary to address challenges such as growing data volumes, energy efficiency, and security, and to fully realize the technical advantages of microprocessors in AI systems.

ALLPCB
