Introduction

Since its introduction, the Transformer has reshaped the NLP field because of its superior ability to model complex dependencies in sequences. Although pretrained language models based on the Transformer have achieved strong results across nearly all NLP tasks, they have a preset sequence length limit. This limit makes it difficult to extend their success to sequences longer than those seen during training, a problem known as length extrapolation. To improve Transformers' length extrapolation, many extrapolatable positional encodings have been proposed.

Motivation

Humans can understand discourse of potentially unbounded length by recognizing its components and structure, even with limited exposure. In NLP, this ability is called length extrapolation: training on short context windows and performing inference on longer ones. Despite the impressive progress of neural networks across tasks, length extrapolation remains a major challenge.

The Transformer's capacity comes at the cost of quadratic computation and memory complexity with respect to input length, which leads to predefined context-length limits for Transformer-based models, typically 512 or 1024 tokens. Processing long sequences with a Transformer is therefore difficult. Fine-tuning existing models with longer context windows is generally either harmful or expensive. Moreover, training Transformers directly on long sequences is infeasible because high-quality long-text data are scarce and the quadratic cost is prohibitive. Consequently, length extrapolation appears to be a practical approach to relax context-length limits while reducing training overhead.

Recent large language models based on the Transformer, such as Llama and GPT-4, have attracted significant attention in industry and research. Even these capable models still enforce strict context-length limits and can fail at length extrapolation, which constrains their broader adoption. Although GPT-4 supports a context window of up to 32k tokens, this is still insufficient in practice. As model capabilities increase, expectations for context use also grow, and existing methods that rely on long context windows place even higher demands on context length.

Background

The Transformer was originally introduced as an encoder-decoder architecture, where the encoder and the decoder are each composed of a stack of identical layers. Each encoder layer consists of two sublayers: a self-attention layer and a position-wise feedforward network. Each decoder layer contains an additional cross-attention sublayer that attends to the encoder outputs. What follows is a concise description of an encoder layer operating on an input sequence of embeddings.

In the attention sublayer, the query, key, and value are computed as linear projections of the input. Compatibility scores are the dot products between queries and keys, scaled by 1/sqrt(d_k), where d_k is the key dimension. A row-wise softmax converts the scores into attention weights, and the output of the sublayer is the corresponding weighted sum of values. The feedforward network consists of two linear transformations separated by a ReLU activation. A residual connection followed by layer normalization is applied around each sublayer for stability.
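The encoder layer described above can be sketched in NumPy as follows. This is a minimal single-head illustration, not a reference implementation: the function names, the parameter dictionary, the random initialization, and the choice to omit the learned gain and bias of layer normalization are all assumptions made for brevity.

```python
import numpy as np

def softmax(x, axis=-1):
    # Subtract the row maximum before exponentiating for numerical stability.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def layer_norm(x, eps=1e-5):
    # Normalize each token vector; learned gain and bias are omitted (assumption).
    mean = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mean) / np.sqrt(var + eps)

def self_attention(x, w_q, w_k, w_v):
    # Query, key, and value are linear projections of the input.
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    # Dot-product compatibility scores, scaled by 1/sqrt(d_k).
    scores = q @ k.T / np.sqrt(k.shape[-1])
    # Row-wise softmax turns scores into attention weights.
    weights = softmax(scores, axis=-1)
    return weights @ v, weights

def feed_forward(x, w1, b1, w2, b2):
    # Two linear transformations separated by a ReLU.
    return np.maximum(0.0, x @ w1 + b1) @ w2 + b2

def encoder_layer(x, p):
    # Residual connection followed by layer normalization around each sublayer.
    attn_out, _ = self_attention(x, p["w_q"], p["w_k"], p["w_v"])
    x = layer_norm(x + attn_out)
    ffn_out = feed_forward(x, p["w1"], p["b1"], p["w2"], p["b2"])
    return layer_norm(x + ffn_out)

def init_params(d_model, d_ff, seed=0):
    # Hypothetical random initialization, for demonstration only.
    rng = np.random.default_rng(seed)
    scale = 1.0 / np.sqrt(d_model)
    return {
        "w_q": rng.normal(size=(d_model, d_model)) * scale,
        "w_k": rng.normal(size=(d_model, d_model)) * scale,
        "w_v": rng.normal(size=(d_model, d_model)) * scale,
        "w1": rng.normal(size=(d_model, d_ff)) * scale,
        "b1": np.zeros(d_ff),
        "w2": rng.normal(size=(d_ff, d_model)) / np.sqrt(d_ff),
        "b2": np.zeros(d_model),
    }

# Five tokens with eight-dimensional embeddings.
x = np.random.default_rng(1).normal(size=(5, 8))
params = init_params(d_model=8, d_ff=32)
y = encoder_layer(x, params)
_, attn_weights = self_attention(x, params["w_q"], params["w_k"], params["w_v"])
```

The output `y` has the same shape as the input, and each row of `attn_weights` sums to one. Nothing in this computation depends on where a token sits in the sequence.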

To allow the model to jointly attend to information from different representation subspaces at different positions, multi-head attention is typically used. In short, H heads mean computing self-attention H times using different projection matrices, then concatenating the outputs along the feature dimension to obtain the final output. From this description, the encoder layer is permutation-equivariant, since both the attention and feedforward sublayers are permutation-equivariant. That is, for any permutation of the input sequence, the layer output is permuted in the same way, so the layer has no built-in notion of token order. This property does not align with the inherently ordered nature of human language, and it is addressed by injecting positional information into the Transformer.
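Permutation equivariance can be checked numerically. The sketch below assumes a simplified multi-head self-attention that concatenates the heads as described here and omits the output projection that the original architecture applies afterwards. The function names, shapes, and random weights are illustrative assumptions.

```python
import numpy as np

def softmax(x, axis=-1):
    # Subtract the row maximum before exponentiating for numerical stability.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def multi_head_attention(x, w_q, w_k, w_v, num_heads):
    n, d_model = x.shape
    d_head = d_model // num_heads

    def split_heads(t):
        # (n, d_model) -> (num_heads, n, d_head)
        return t.reshape(n, num_heads, d_head).transpose(1, 0, 2)

    q, k, v = split_heads(x @ w_q), split_heads(x @ w_k), split_heads(x @ w_v)
    # Per-head scaled dot-product scores, shape (num_heads, n, n).
    scores = q @ k.transpose(0, 2, 1) / np.sqrt(d_head)
    heads = softmax(scores, axis=-1) @ v
    # Concatenate the heads along the feature dimension: (n, d_model).
    return heads.transpose(1, 0, 2).reshape(n, d_model)

rng = np.random.default_rng(0)
n, d_model, num_heads = 6, 8, 2
x = rng.normal(size=(n, d_model))
w_q, w_k, w_v = (rng.normal(size=(d_model, d_model)) / np.sqrt(d_model)
                 for _ in range(3))

perm = rng.permutation(n)
out = multi_head_attention(x, w_q, w_k, w_v, num_heads)
out_perm = multi_head_attention(x[perm], w_q, w_k, w_v, num_heads)

# Equivariance: permuting the input rows permutes the output rows identically.
is_equivariant = np.allclose(out[perm], out_perm)
```

Because shuffling the tokens only shuffles the outputs, a model built from such layers cannot distinguish one word order from another unless position information is supplied separately.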

Positional Encoding for Length Extrapolation

Intuitively, length extrapolation is strongly correlated with absolute sequence length and token positions. When the Transformer was introduced, its authors proposed sinusoidal positional embeddings and suggested that they might extrapolate to sequences longer than those used in training. The idea that simply changing the way positions are represented can enable length extrapolation has since been widely supported and demonstrated. Therefore, developing better positional encoding methods has become a primary approach to enhancing Transformer length extrapolation.
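As an illustration, the sinusoidal embedding from the original Transformer can be sketched as below. The function name, the example lengths 512 and 2048, and the embedding width 64 are assumptions chosen for the demonstration. The base of 10000 follows the original formulation.

```python
import numpy as np

def sinusoidal_positions(num_positions, d_model, base=10000.0):
    # Even feature indices hold sines, odd indices hold cosines.
    if d_model % 2 != 0:
        raise ValueError("d_model must be even")
    positions = np.arange(num_positions)[:, None]           # (n, 1)
    freqs = base ** (-np.arange(0, d_model, 2) / d_model)   # (d_model / 2,)
    angles = positions * freqs                              # (n, d_model / 2)
    pe = np.zeros((num_positions, d_model))
    pe[:, 0::2] = np.sin(angles)
    pe[:, 1::2] = np.cos(angles)
    return pe

pe_train = sinusoidal_positions(512, 64)    # hypothetical training length
pe_long = sinusoidal_positions(2048, 64)    # hypothetical longer inference length

# The embedding is a fixed function of the position index, so the first 512
# rows of the longer table coincide with the training table.
same_prefix = np.allclose(pe_long[:512], pe_train)

# The dot product of two embeddings depends only on the position offset.
near = pe_long[10] @ pe_long[15]
far = pe_long[1000] @ pe_long[1005]
offset_only = np.allclose(near, far)
```

Since the table is computed rather than learned, it can be generated for any length, which is the basis of the early expectation of extrapolation. Being able to generate embeddings for unseen positions does not by itself guarantee that a trained model handles them well.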

There are various methods to integrate position information into Transformers, collectively referred to as positional encodings (PEs). Table 1 summarizes characteristics of different extrapolatable PEs. We categorize PEs as absolute positional encodings (APE) or relative positional encodings (RPE). With APE, each position is mapped to a unique representation. RPE represents positions based on the relative distance between two tokens.

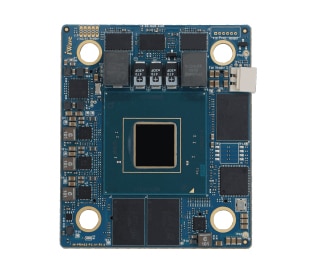
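The difference between the two categories can be made concrete with a small sketch. The function names are hypothetical, and the optional clipping of distances is an illustrative assumption used in some relative schemes, not something stated in the text above.

```python
import numpy as np

def absolute_positions(n):
    # APE view: every token gets its own index 0..n-1, which is then mapped
    # to a unique representation.
    return np.arange(n)

def relative_positions(n, max_distance=None):
    # RPE view: entry [i, j] is the signed distance j - i between two tokens.
    idx = np.arange(n)
    rel = idx[None, :] - idx[:, None]
    if max_distance is not None:
        # Optional clipping (assumption): distances beyond the limit share a value.
        rel = np.clip(rel, -max_distance, max_distance)
    return rel

abs_short = absolute_positions(4)
abs_long = absolute_positions(8)
rel_short = relative_positions(4)
rel_long = relative_positions(8)
rel_clipped = relative_positions(8, max_distance=2)

# Absolute indices 4..7 never occur in the short sequence.
unseen_absolute = sorted(set(abs_long.tolist()) - set(abs_short.tolist()))

# The relative pattern for the first four tokens is unchanged in the longer
# sequence, and with clipping no new distance values appear at all.
same_local_pattern = np.array_equal(rel_long[:4, :4], rel_short)
```

A longer input introduces absolute indices the model has never seen, whereas the relative distances between nearby tokens stay the same regardless of total length.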
