Overview

The red imported fire ant is a major agricultural pest that bites and stings, chews electrical wiring, and can cause fires. Current control methods lack precision and efficiency and cannot keep pace with the species' spread. This project implements a robot that combines AI vision and autonomous navigation to continuously locate fire ant nests and deliver bait to them precisely, reducing the impact of infestations on farmland and communities.

Innovation and key features

Product highlights

- Vision system: High-performance camera with AI-based vision for accurate and rapid detection of fire ant nests.

- Autonomous navigation: Route planning with LIDAR and IMU sensors for traversal over fields, grass, and rough terrain.

- Robotic arm bait deployment: Multi-degree-of-freedom arm with high-precision servos for targeted bait placement.

- Tracked chassis: Shock-mitigating, lunar-rover-style tracked design for stable operation on uneven terrain.

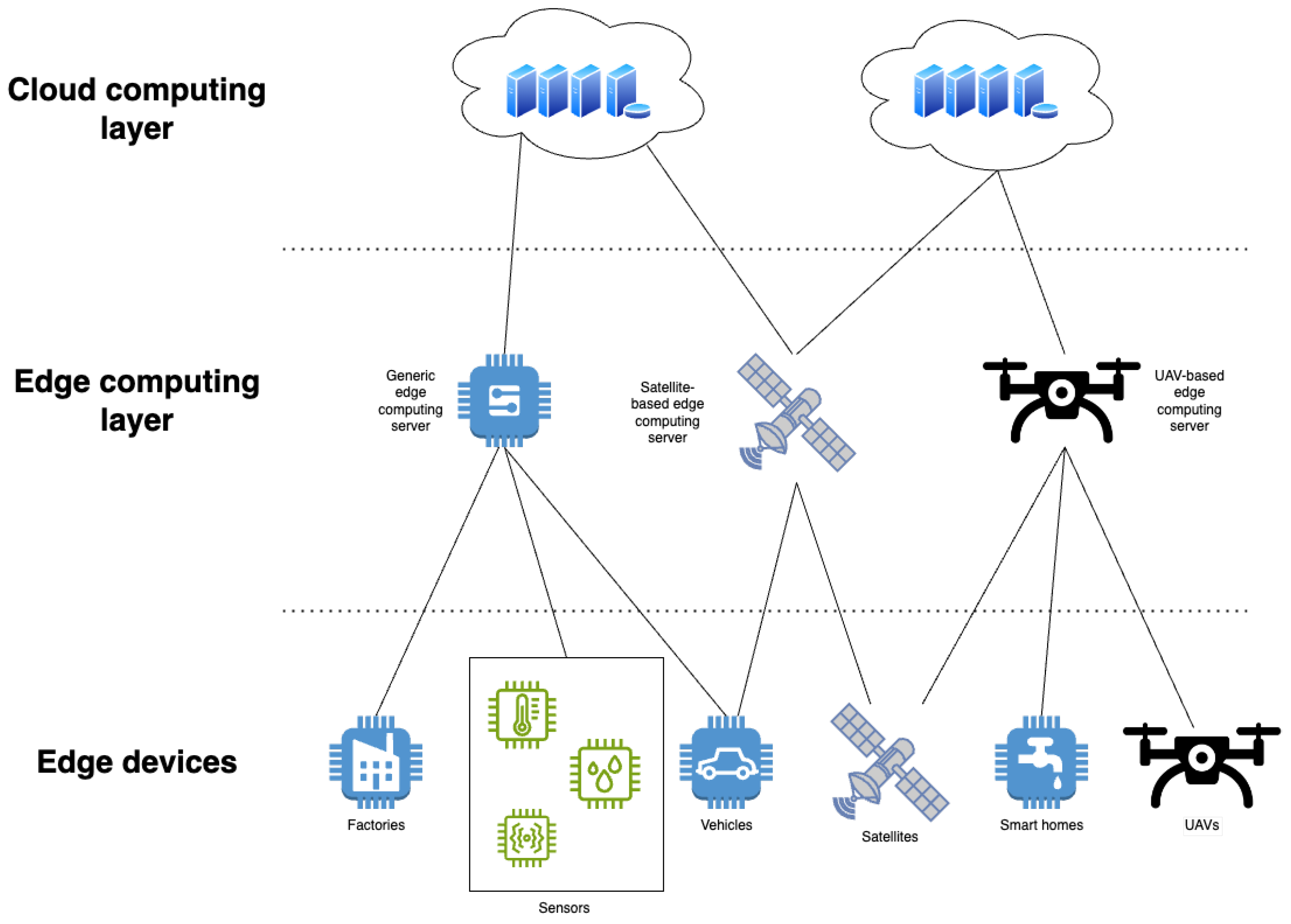

- Cloud analytics: Real-time data upload and analysis to study nest distribution and optimize treatment strategies.

Technical innovations

- Suspension and shock absorption: Eight dampers to stabilize mobility and protect onboard equipment.

- Lunar-rover-style chassis: Passive rocker-arm suspension at the front to maintain contact on rough surfaces and improve obstacle traversal.

- Robotic arm with negative-pressure pump: Closed-loop control for precise bait pickup and release.

- Coordinated tracked control: Tight hardware-software integration for precise patrol and automatic course adjustment toward nests.

- YOLO-SRC algorithm: Modified detection algorithm optimized for small, mobile targets like ant nests.

- Precise bait metering: Intelligent control of bait dosage to maximize effectiveness while minimizing ecological impact.

Capabilities

Functional features

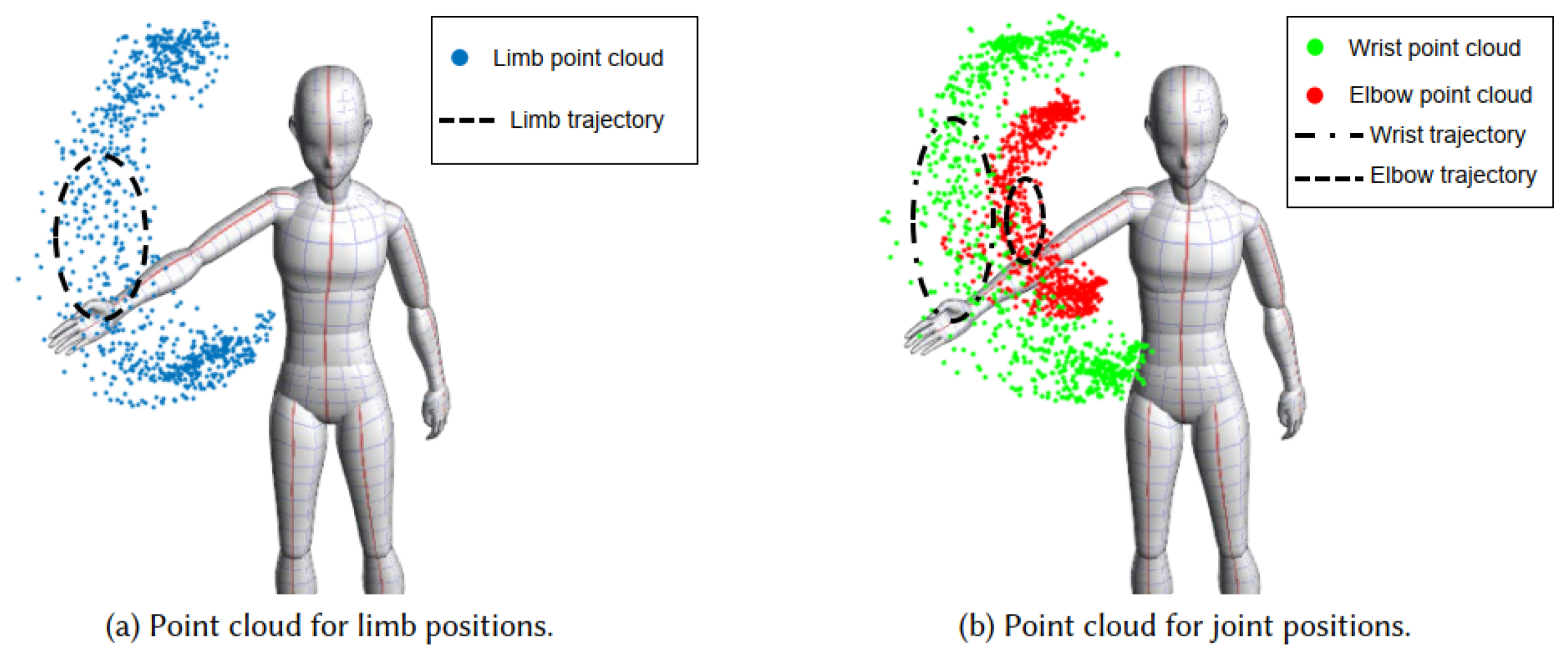

- Intelligent patrol: Based on ROS, the robot follows predefined global waypoints, constructs 3D point-cloud maps in real time, explores autonomously, avoids obstacles, and detects fire ant nests.

- Targeted treatment: When approaching a nest, the robotic arm and negative-pressure pump retrieve bait and, guided by vision correction, place bait precisely to avoid wastage or non-target impacts.

- Ecological control: Bait dosage is controlled to improve kill rate while reducing environmental pollution and avoiding harm to non-target ant species.

- Remote monitoring: Patrol routes and treatment records are visualized on web and mobile interfaces for remote oversight.

- Predictive search: Cloud models predict nest distribution in unsurveyed areas and generate search routes to guide the robot for efficient coverage.

Design process

The design process began with problem definition and requirements analysis, identifying limitations of manual inspection and existing market products. The proposed solution integrates a tracked chassis, robotic arm, and electronic control system. The chassis uses dampers and lunar-rover-inspired mechanics for improved off-road performance. The arm uses high-precision servos and vision for accurate bait deployment. Autonomous navigation combined with cloud data integration enables efficient detection and treatment of fire ant nests, meeting the objective of an automated control system.

System implementation

System overview

The robot follows planned patrol routes for nest detection and can prioritize GPS-designated high-risk zones for search and treatment. During treatment, the chassis positions the robot near the nest. The arm, working with a negative-pressure pump, picks up bait, aligns over the nest under vision guidance, and deposits the bait, which keeps treatment effective while limiting environmental impact.

Patrol paths and treatment data are visualized on web and mobile interfaces. Cloud prediction models use known nest data to infer likely distributions in unsearched areas and guide route optimization. The system applies a "biocontrol" concept through sustained detection, efficient search, and cloud-assisted planning to control fire ant populations and reduce ecological and economic harm.

Hardware system

Overall hardware

The electronic control system manages the tracked chassis motors and the robotic arm. An upper-level i5 host computer sends velocity commands to a lower-level controller, which drives the chassis toward the nest and controls the arm and the negative-pressure pump to pick up bait and place it accurately under vision guidance.
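The host-to-controller velocity interface can be illustrated with a minimal sketch of differential (skid-steer) track kinematics. The function name, the `TrackCommand` type, and the 0.5 m track width are assumptions made for illustration; only the 1 m/s speed cap comes from the performance summary.

```python
from dataclasses import dataclass


@dataclass
class TrackCommand:
    left_mps: float   # left track speed in m/s
    right_mps: float  # right track speed in m/s


def twist_to_tracks(linear_mps: float, angular_radps: float,
                    track_width_m: float = 0.5,      # assumed, not from the source
                    max_speed_mps: float = 1.0) -> TrackCommand:
    """Convert a body velocity (v, omega) into left/right track speeds."""
    half = track_width_m / 2.0
    left = linear_mps - angular_radps * half
    right = linear_mps + angular_radps * half
    # If either track would exceed the limit, scale both by the same factor
    # so the commanded turning radius is preserved.
    peak = max(abs(left), abs(right))
    if peak > max_speed_mps:
        scale = max_speed_mps / peak
        left *= scale
        right *= scale
    return TrackCommand(left, right)
```

Scaling both tracks together, rather than clipping each one independently, keeps the robot on the intended arc when a command saturates.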

Mechanical design

A. Chassis

The system uses a tracked, lunar-rover-inspired drive structure, with motors driving the tracks for movement.

Shock absorber system

Eight shock absorbers enhance off-road performance and protect onboard equipment from vibration. On uneven ground, idler wheels move upward under load and the dampers provide downward force to maintain track-to-ground contact, absorbing terrain-induced vibration and reducing body oscillation for a more stable ride.

Lunar-rover-style suspension

The front section uses a passive rocker-arm suspension between two idler wheels. The rocker-arm is mounted on a linkage-based suspension to improve articulation and allow the front assembly to adapt to rocky terrain by adjusting track contact area, increasing the vehicle's ability to overcome obstacles.

Bait hopper and layout

The bait hopper forms the main body of the chassis, with the transformer and battery mounted beneath it to save space and protect components. The hopper is higher at the sides and lower in the center, which channels powdered bait toward the center for easier collection. Secure mounting mechanisms fix the large batteries and motors in place to prevent displacement under vibration.

Tracked design for off-road use

The tracks are optimized for field conditions by increasing width and thickness to improve stability and traction, reduce rollover risk, and enhance climbing capability.

B. Robotic arm

The arm is a three-degree-of-freedom assembly driven by bus servos and linkages. This configuration reduces load at the arm tip and concentrates weight on the chassis to lower the system center of gravity. A clamp-like fixture secures the soft hose that transports solid bait, and a depth camera plus a negative-pressure pump handle bait pickup and release. When a nest is detected, the host sends positioning commands via serial to the servo controller, which converts them to PWM signals to drive servos to specific angles. Once aligned, the pump is activated to draw bait, and then a gate opens to release bait onto the nest.

The arm comprises three modules: base rotation module, upper-arm motion module, and forearm motion module.

Base motion module

The arm mounts to a base plate that carries the gearing and a rotating disk. The disk has three threaded holes arranged in a triangle, each fitted with a screw-type universal ball joint, which keeps the arm from tilting when its center of gravity shifts. Servos drive the gearing for smooth rotation, and a bearing reduces friction.

Upper-arm module

A paired upper-arm assembly includes a central cylindrical hole to route the bait delivery tube and protect it from exposure and damage. Servos control the gross positioning of the arm.

Forearm module

The paired forearm uses a crank-and-link mechanism driven by servos to translate motion precisely to the arm tip, enabling the end effector to reach target points accurately.
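As a rough illustration of how a target point could be turned into angles for the three modules, the sketch below solves inverse kinematics for a base yaw joint plus a two-link arm moving in a vertical plane. This simplified geometry, the link lengths, and the function names are assumptions; the crank-and-link transmission of the real forearm is not modeled.

```python
import math


def arm_ik(x, y, z, l1=0.25, l2=0.25):
    """Return (yaw, shoulder, elbow) in radians for a target in the arm base frame.

    l1 and l2 are hypothetical upper-arm and forearm lengths in meters.
    """
    yaw = math.atan2(y, x)
    r = math.hypot(x, y)                 # horizontal reach in the arm plane
    d2 = r * r + z * z
    d = math.sqrt(d2)
    if d > l1 + l2 or d < abs(l1 - l2):
        raise ValueError("target out of reach")
    cos_elbow = (d2 - l1 * l1 - l2 * l2) / (2 * l1 * l2)
    elbow = math.acos(max(-1.0, min(1.0, cos_elbow)))   # 0 means fully extended
    # Elbow-up solution: lift the shoulder above the line to the target.
    shoulder = math.atan2(z, r) + math.atan2(l2 * math.sin(elbow),
                                             l1 + l2 * math.cos(elbow))
    return yaw, shoulder, elbow


def arm_fk(yaw, shoulder, elbow, l1=0.25, l2=0.25):
    """Forward kinematics matching arm_ik, used to check a solution."""
    r = l1 * math.cos(shoulder) + l2 * math.cos(shoulder - elbow)
    z = l1 * math.sin(shoulder) + l2 * math.sin(shoulder - elbow)
    return r * math.cos(yaw), r * math.sin(yaw), z
```

Feeding the result of `arm_ik` back through `arm_fk` should reproduce the target, which is a convenient sanity check before any angles are sent to the servos.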

Electronic modules

The lower-level controller is built around an STM32F407VET6 core board with an expansion board that integrates relay modules. The expansion board handles wire management and terminations according to the connectors available, primarily 2.54 mm pitch headers.

Navigation and perception system

Overview

Navigation and perception fuse local autonomous exploration, manually planned waypoint patrols, and risk-driven navigation within designated areas.

Key modules

A. SLAM

Simultaneous localization and mapping (SLAM) lets the robot localize itself while building a map, using sensors such as cameras, LIDAR, and an IMU. LOAM is a classic LIDAR-based SLAM approach with modules for point-cloud feature extraction, lidar odometry, and map construction. LeGO-LOAM reduces computation via ground-point segmentation and adds loop closure to reduce drift. Because LIDAR-only systems can drift over long runs, multi-sensor fusion is preferred: an IMU provides high-rate angular velocity and acceleration data, and fusing GPS improves robustness.

Fusion strategies include loose coupling, where IMU and LIDAR are processed separately before fusion, and tight coupling, where raw LIDAR features and IMU data are fused directly. LIO-SAM uses tight coupling with IMU pre-integration to correct LIDAR distortion and integrates GPS and loop closure to provide robust, low-drift localization and mapping.

A comparative evaluation of A-LOAM, LeGO-LOAM, and LIO-SAM showed that LIO-SAM produced richer detail and larger mapping coverage. A-LOAM fit well on the X axis but had larger errors on Y and Z. LeGO-LOAM performed well on X and Y but deviated on Z. LIO-SAM showed the best overall fit across axes, despite some variability that is likely due to IMU calibration. LIO-SAM was therefore selected as the robot's SLAM algorithm.
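One way to quantify a per-axis comparison like this is root-mean-square error against a reference trajectory. The source does not state which metric or reference was used, so the sketch below is an assumed approach, and `per_axis_rmse` is a hypothetical helper that expects time-aligned poses.

```python
import math


def per_axis_rmse(estimated, reference):
    """Return (rmse_x, rmse_y, rmse_z) for two time-aligned lists of (x, y, z)."""
    if not estimated or len(estimated) != len(reference):
        raise ValueError("trajectories must be non-empty and equally long")
    sums = [0.0, 0.0, 0.0]
    for est, ref in zip(estimated, reference):
        for axis in range(3):
            diff = est[axis] - ref[axis]
            sums[axis] += diff * diff
    n = len(estimated)
    return tuple(math.sqrt(s / n) for s in sums)
```

Running this once per algorithm gives three numbers per candidate, which makes statements such as "fits well on X but deviates on Z" directly comparable.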

B. FAR Planner autonomous navigation framework

FAR Planner is a real-time path planning algorithm that extracts environment geometry from sensor data to construct a visibility graph for navigation. It does not require a prebuilt map and can perform global path planning and dynamic replanning over a 300 m range within 1–2 ms.

Algorithm steps:

- Environment modeling: Convert point clouds into binary images and apply average filtering to create a smoothed image.

- Feature extraction: Extract polygonal contours from the smoothed image and match them to sensor data.

- Dynamic obstacle avoidance: Detect dynamic obstacles like people or vehicles across multiple frames and update the visibility graph in real time. When obstacles clear, original connections are restored.

- Unknown environment exploration: In unmapped areas, FAR Planner generates multiple feasible paths from current information and continuously optimizes navigation routes.

This framework enables efficient autonomous dynamic navigation in complex environments.
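The core idea of planning over a visibility graph can be sketched in a few dozen lines. This is not FAR Planner's implementation: it assumes obstacles are already available as convex polygons, runs a plain Dijkstra search, and ignores degenerate cases such as a path passing exactly through a vertex. All function names are illustrative.

```python
import heapq
import math


def _orient(a, b, c):
    """Signed area of triangle abc; the sign gives the turn direction."""
    return (b[0] - a[0]) * (c[1] - a[1]) - (b[1] - a[1]) * (c[0] - a[0])


def _crosses(p, q, a, b):
    """True if segments pq and ab cross at an interior point of both."""
    return (_orient(p, q, a) * _orient(p, q, b) < 0
            and _orient(a, b, p) * _orient(a, b, q) < 0)


def shortest_path(start, goal, polygons):
    """Dijkstra over a visibility graph of start, goal, and polygon vertices."""
    nodes, owner, edges = [start, goal], [None, None], []
    for p_idx, poly in enumerate(polygons):
        for v_idx, vertex in enumerate(poly):
            nodes.append(vertex)
            owner.append((p_idx, v_idx))
            edges.append((vertex, poly[(v_idx + 1) % len(poly)]))

    def visible(i, j):
        oi, oj = owner[i], owner[j]
        if oi is not None and oj is not None and oi[0] == oj[0]:
            # Same convex polygon: only neighbouring vertices see each other.
            return abs(oi[1] - oj[1]) in (1, len(polygons[oi[0]]) - 1)
        return not any(_crosses(nodes[i], nodes[j], a, b) for a, b in edges)

    dist, prev, queue = {0: 0.0}, {}, [(0.0, 0)]
    while queue:
        d, i = heapq.heappop(queue)
        if i == 1:                      # node 1 is the goal
            break
        if d > dist[i]:
            continue
        for j in range(len(nodes)):
            if j == i or not visible(i, j):
                continue
            nd = d + math.dist(nodes[i], nodes[j])
            if nd < dist.get(j, math.inf):
                dist[j], prev[j] = nd, i
                heapq.heappush(queue, (nd, j))
    if 1 not in dist:
        return None, math.inf
    path, i = [nodes[1]], 1
    while i != 0:
        i = prev[i]
        path.append(nodes[i])
    return path[::-1], dist[1]
```

Because visibility is tested on demand against the current obstacle edges, changing the polygon list and calling `shortest_path` again gives a crude form of replanning when obstacles appear or clear.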

C. GIS-based waypoint optimization

To improve treatment efficiency, the system uses GIS-based path planning for waypoint optimization. The cloud integrates patrol paths, known nest locations, and historical infestation data. Predictive models estimate nest distribution probabilities in unsurveyed areas.

The waypoint planning algorithm considers known patrol paths, nest distribution, risk level, and user priorities, and dynamically adjusts optimal waypoints based on real-time data. Generated waypoints are transmitted to the robot via IoT, and the robot executes autonomous patrols while uploading path and nest data. The cloud system analyzes incoming data and updates waypoints to adapt to environmental changes and maintain optimal patrol strategies.
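A very simple version of risk-aware waypoint ordering is a greedy selection that trades predicted risk against travel distance. The scoring formula, the `Candidate` fields, and the `min_risk` threshold below are assumptions chosen for illustration, not the system's actual planner.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    name: str
    x: float               # local map coordinates in meters (assumed)
    y: float
    risk: float            # predicted nest probability in [0, 1]
    priority: float = 1.0  # user-assigned weight


def plan_patrol(start, candidates, min_risk=0.2):
    """Greedily order waypoints by risk * priority / (1 + distance)."""
    remaining = [c for c in candidates if c.risk >= min_risk]
    route, pos = [], start
    while remaining:
        best = max(
            remaining,
            key=lambda c: c.risk * c.priority
            / (1.0 + math.hypot(c.x - pos[0], c.y - pos[1])),
        )
        route.append(best)
        remaining.remove(best)
        pos = (best.x, best.y)
    return route
```

Re-running `plan_patrol` whenever the cloud updates the risk values is one way to approximate the dynamic waypoint adjustment described above.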

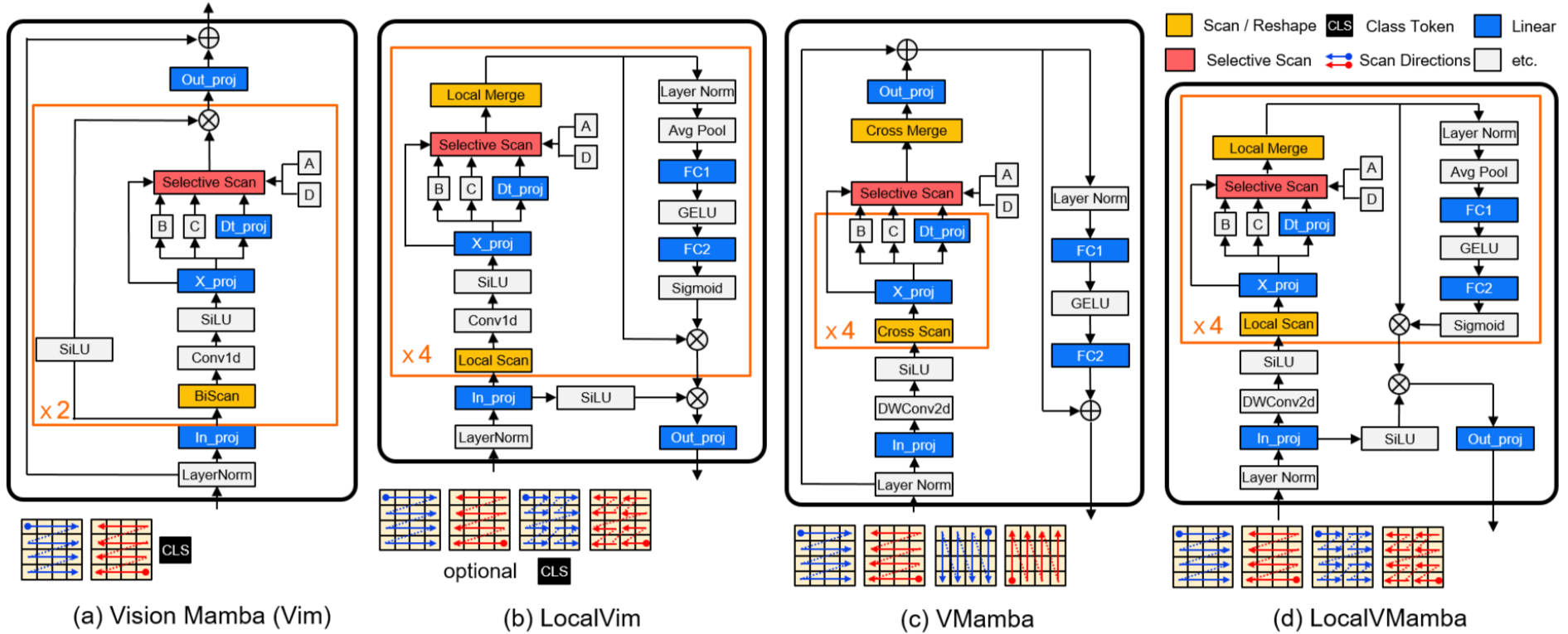

D. YOLO-SRC visual detection and localization

Outdoor detection faces challenges such as overexposure, occlusion, and motion blur. YOLO-SRC is based on YOLOv4 with improvements including synthetic noise augmentation and contrastive consistency alignment during training to improve robustness and accuracy for nest detection.

Core optimizations:

- Feature extraction enhancements to capture micro-target features for better small-target detection.

- Multi-scale feature fusion to improve detection performance across scales.

- Data augmentation and alignment training using Jensen-Shannon divergence loss to align predictions across different noise domains and strengthen anti-interference capability.
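For reference, the Jensen-Shannon divergence between class-probability vectors can be computed as below. This is a plain-Python sketch of the quantity itself; how YOLO-SRC weights it or combines it with the detection loss is not described in the source, and the function names are illustrative.

```python
import math


def kl_divergence(p, q):
    """KL(p || q) for discrete distributions; terms with p_i == 0 contribute 0."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def js_divergence(*dists):
    """Jensen-Shannon divergence of two or more distributions over the same classes."""
    n = len(dists)
    # The mixture is strictly positive wherever any input is, so KL stays finite.
    mixture = [sum(d[i] for d in dists) / n for i in range(len(dists[0]))]
    return sum(kl_divergence(d, mixture) for d in dists) / n
```

Passing the prediction on a clean image together with predictions on its noise-augmented copies yields a single value that is zero only when all of them agree, which is the property a consistency loss relies on.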

Localization workflow:

- An RGB-D stereo camera captures images, and YOLO-SRC outputs detection bounding boxes.

- Depth maps are aligned and denoised with mean filtering to obtain the target center depth.

- Pixel coordinates are transformed to the robot coordinate frame to estimate the spatial pose of the nest.
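The workflow above can be sketched with a pinhole camera model. The intrinsics (`fx`, `fy`, `cx`, `cy`), the camera-to-robot rotation and translation, and the function names are placeholders; real values would come from camera calibration and the robot's mounting geometry.

```python
def mean_depth(depth, u, v, half_window=2):
    """Average the valid (non-zero) depths in a window centered on pixel (u, v)."""
    rows, cols = len(depth), len(depth[0])
    values = [
        depth[r][c]
        for r in range(max(0, v - half_window), min(rows, v + half_window + 1))
        for c in range(max(0, u - half_window), min(cols, u + half_window + 1))
        if depth[r][c] > 0
    ]
    if not values:
        raise ValueError("no valid depth near the target pixel")
    return sum(values) / len(values)


def pixel_to_camera(u, v, z, fx, fy, cx, cy):
    """Back-project pixel (u, v) at depth z into the camera frame."""
    return ((u - cx) * z / fx, (v - cy) * z / fy, z)


def camera_to_robot(point, rotation, translation):
    """Apply a rigid transform: rotation (3x3 nested list) then translation."""
    return tuple(
        sum(rotation[i][j] * point[j] for j in range(3)) + translation[i]
        for i in range(3)
    )


def locate_nest(bbox, depth, intrinsics, rotation, translation):
    """Estimate the nest position in the robot frame from a detection box."""
    x_min, y_min, x_max, y_max = bbox
    u, v = (x_min + x_max) // 2, (y_min + y_max) // 2   # box center pixel
    z = mean_depth(depth, u, v)
    return camera_to_robot(pixel_to_camera(u, v, z, *intrinsics),
                           rotation, translation)
```

Skipping zero-valued pixels in the averaging window matters because depth cameras commonly report 0 where no measurement is available, and including those would pull the estimate toward the camera.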

Software architecture

Backend

The backend uses the Tornado framework to handle high concurrency and long-lived connections, and it exposes API endpoints. Key modules include:

- Trajectory handler: Reads robot trajectory data (latitude and longitude) for front-end mapping.

- Marker handler: Manages nest marker operations including add, update, and retrieval.

- Region query handler: Returns nest counts within user-selected areas.

- Heatmap generator: Provides nest location data for front-end heatmap rendering.

- Visualization processor: Produces charts for weather, robot status, temperature and humidity, and nest statistics.

- Authentication and session management: Handles user authentication, session control, and access permissions.
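The logic behind a region query can be illustrated with a standard ray-casting point-in-polygon test. The sketch assumes the selected area arrives as a list of (latitude, longitude) vertices and treats coordinates as planar, which is reasonable for field-sized regions; the function names are hypothetical, and the Tornado request handling around it is omitted.

```python
def point_in_polygon(lat, lon, polygon):
    """Ray-casting test: True if (lat, lon) lies inside the polygon."""
    inside = False
    n = len(polygon)
    for i in range(n):
        lat1, lon1 = polygon[i]
        lat2, lon2 = polygon[(i + 1) % n]
        # Consider only edges that straddle the point's longitude.
        if (lon1 > lon) != (lon2 > lon):
            lat_cross = lat1 + (lon - lon1) * (lat2 - lat1) / (lon2 - lon1)
            if lat < lat_cross:
                inside = not inside
    return inside


def count_nests_in_region(nests, polygon):
    """Count nest markers, given as (lat, lon) pairs, inside the polygon."""
    return sum(1 for lat, lon in nests if point_in_polygon(lat, lon, polygon))
```

A handler built on this would only need to parse the polygon from the request and return the count as JSON.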

Frontend

The frontend uses a mapping API to visualize robot trajectories, nest markers, and heatmaps. Features include:

- Trajectory display: Plot robot trajectories by mapping latitude and longitude to polylines with show/hide controls.

- Marker display: Mark nest locations and present details such as photos, coordinates, and discovery time on click.

- Region selection: Query and display all nests within a user-selected area.

- Heatmap: Render nest density and distribution, with higher density shown in deeper colors.

- Charts: Display weather forecasts, robot operating status, temperature and humidity, and nest statistics.

Performance summary

- Some mechanical components are 3D printed in PLA and others in PLA-CF, with infill densities above 80%.

- Bait payload capacity up to 40 kg.

- Operational endurance up to 4 hours per charge.

- Maximum speed 1 m/s.

- Visual detection accuracy exceeding 92%.

ALLPCB
