Human keypoint detection uses a deep learning model to detect people, locate their body keypoints, and estimate their pose. It is widely used in sports analysis, animal behavior monitoring, and robotics to help machines interpret physical actions in real time. This algorithm is designed for high runtime efficiency and strong real-time performance.

Benchmark Results

Performance on the dataset:

| Algorithm | mAP pose@0.5 |
|---|---|
| Person Pose-S | 86.3 |
| Person Pose-M | 89.3 |

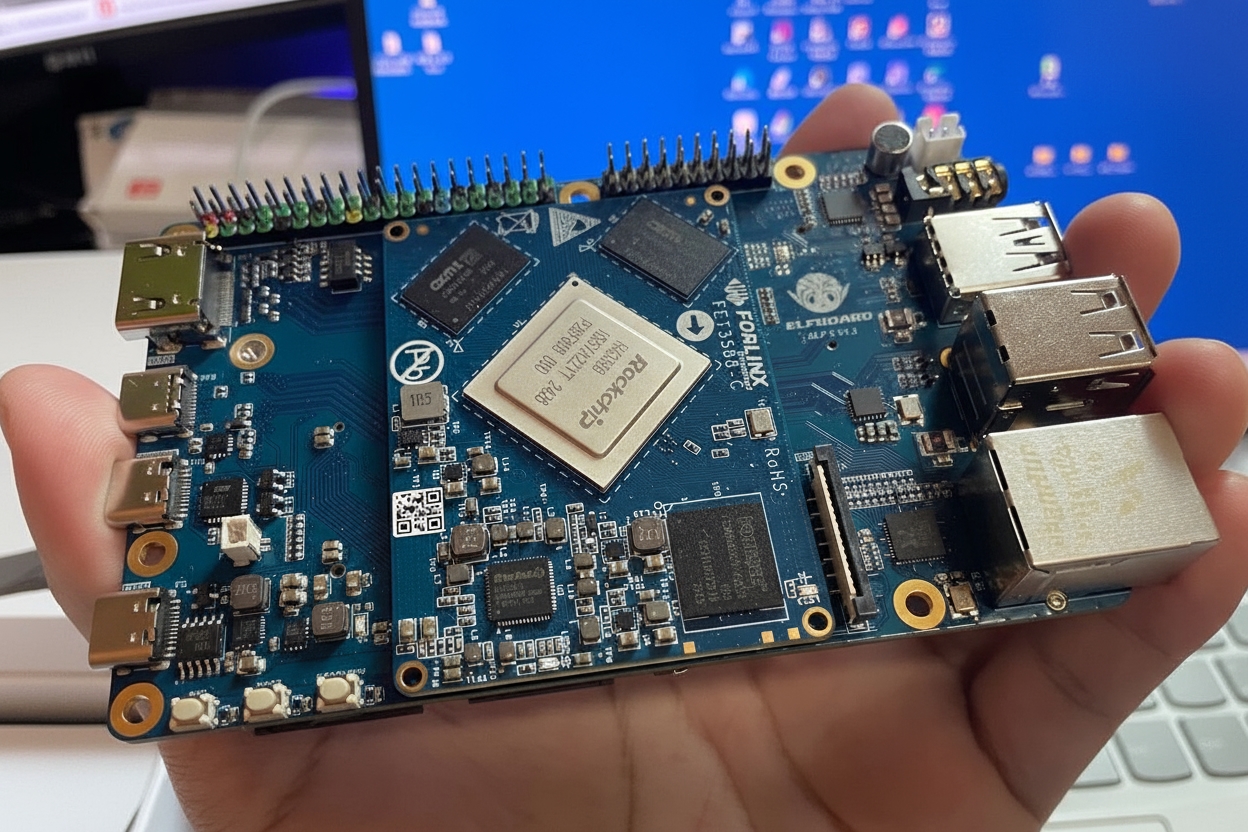

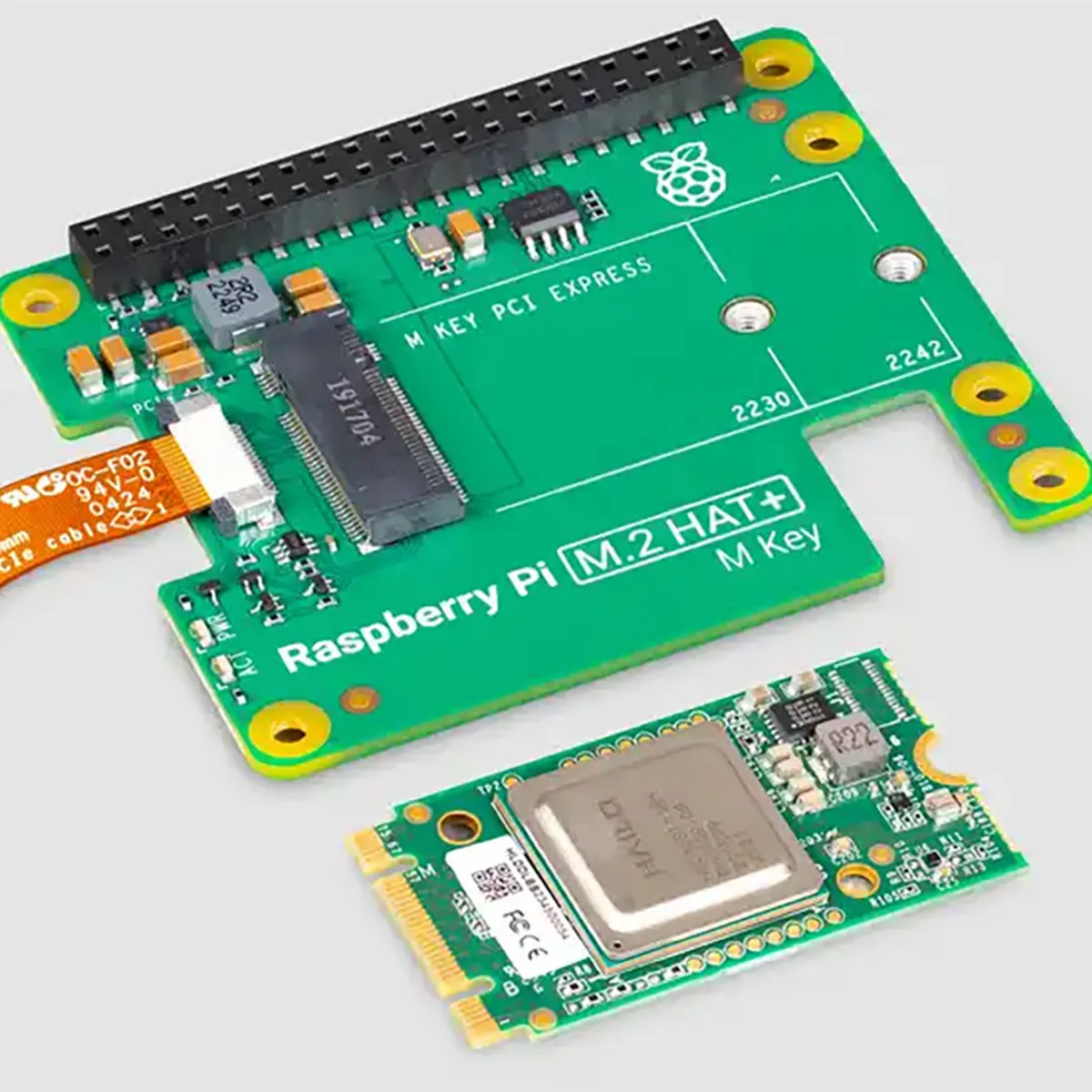

Runtime on the EASY-EAI Orin-Nano (RK3576) hardware board:

| Algorithm | Runtime |
|---|---|
| Person Pose-S | 53 ms |
| Person Pose-M | 93 ms |
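To relate these runtimes to a frame rate, the per-frame latency can be converted to frames per second. The sketch below assumes frames are processed one at a time and that the listed runtime is the dominant per-frame cost; the table does not say whether image decoding or drawing is included. The function names are illustrative and not part of the EASY-EAI API.

```cpp
#include <cstdio>

// Convert a per-frame latency in milliseconds to frames per second.
// Returns 0 for non-positive input instead of dividing by zero.
constexpr double fps_from_latency_ms(double latency_ms) {
    return latency_ms > 0.0 ? 1000.0 / latency_ms : 0.0;
}

// Print the approximate single-stream frame rate for one model variant.
void print_throughput(const char *model_name, double latency_ms) {
    std::printf("%s: %.0f ms per frame, about %.1f FPS\n",
                model_name, latency_ms, fps_from_latency_ms(latency_ms));
}
```

Under these assumptions, 53 ms corresponds to roughly 19 frames per second and 93 ms to roughly 11 frames per second.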

Keypoint Index Mapping (17 Points)

| Index | Definition |
|---|---|
| 0 | Nose |
| 1 | Left eye |
| 2 | Right eye |
| 3 | Left ear |
| 4 | Right ear |
| 5 | Left shoulder |
| 6 | Right shoulder |
| 7 | Left elbow |
| 8 | Right elbow |
| 9 | Left wrist |
| 10 | Right wrist |
| 11 | Left hip |
| 12 | Right hip |
| 13 | Left knee |
| 14 | Right knee |
| 15 | Left ankle |
| 16 | Right ankle |
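In application code, named constants are easier to read than raw indices. The following is a minimal sketch of the mapping table as a C++ enum plus a name lookup. The identifiers (`KeypointIndex`, `kKeypointNames`, `keypoint_name`) are hypothetical and are not defined by the EASY-EAI library; only the index values and names come from the table above.

```cpp
#include <array>
#include <cstddef>

// Number of keypoints per person, as listed in the mapping table.
constexpr std::size_t kNumKeypoints = 17;

// Hypothetical enum: the names are illustrative, the values follow the table.
enum KeypointIndex : int {
    kNose = 0, kLeftEye = 1, kRightEye = 2, kLeftEar = 3, kRightEar = 4,
    kLeftShoulder = 5, kRightShoulder = 6, kLeftElbow = 7, kRightElbow = 8,
    kLeftWrist = 9, kRightWrist = 10, kLeftHip = 11, kRightHip = 12,
    kLeftKnee = 13, kRightKnee = 14, kLeftAnkle = 15, kRightAnkle = 16
};

// Human-readable names in index order.
constexpr std::array<const char *, kNumKeypoints> kKeypointNames = {
    "Nose", "Left eye", "Right eye", "Left ear", "Right ear",
    "Left shoulder", "Right shoulder", "Left elbow", "Right elbow",
    "Left wrist", "Right wrist", "Left hip", "Right hip",
    "Left knee", "Right knee", "Left ankle", "Right ankle"
};

// Return the name for an index, or "unknown" when the index is out of range.
constexpr const char *keypoint_name(int index) {
    return (index >= 0 && index < static_cast<int>(kNumKeypoints))
               ? kKeypointNames[static_cast<std::size_t>(index)]
               : "unknown";
}
```

With such constants, an expression like `keypoints[kLeftWrist]` documents itself better than `keypoints[9]`.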

Quick Start

If this is your first time reading this document, first read the Getting Started, Source Management, and Programming introduction sections, and manage your project source code as described there. Keeping the source on a remote mount (for example, an NFS share) is recommended to avoid losing code.

Downloading the Source Code

On the PC virtual machine, go to the NFS service directory and create a directory to store the source repository:

cd ~/nfsroot

mkdir GitHub

cd GitHub

Then use git to clone the remote repository (device must have internet access):

git clone https://github.com/EASY-EAI/EASY-EAI-Toolkit-3576.git

Notes:

- Network issues may cause delays. Please wait patiently.

- If downloading from the GitHub web interface, download the entire repository rather than individual directories for this example.

Development Environment Setup

Use the adb shell to access the board development environment.

Mount the NFS directory from the NFS server using the following command:

mount -t nfs -o nolock <nfs_server_ip>:/<export_path> /home/orin-nano/Desktop/nfs/

Building the Example

On the board, go to the mounted NFS directory (adjust the path as needed), then enter the example directory and run the build command:

cd EASY-EAI-Toolkit-3576/Demos/algorithm-person_pose/

./build.sh

Model Deployment

To run the demo, download the human keypoint detection model.

Download link: https://pan.baidu.com/s/1ln9kclhgl6JqXtOzS3y5PQ?pwd=1234 (extraction code: 1234)

Copy the downloaded model into the Release/ directory.

Running the Example and Results

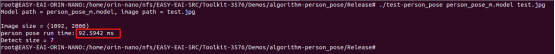

In the board Release directory, run the example program:

cd Release/

./test-person_pose person_pose_m.model test.jpg

Example result image:

API details and example code are provided below.

Human Keypoint Detection API

Linking

To call the EASY-EAI API library from a local project, add the following include path, library path, and link option.

| Item | Value |
|---|---|
| Header directory | easyeai-api/algorithm/person_pose |
| Library directory | easyeai-api/algorithm/person_pose |
| Link option | -lperson_pose |

Initialization Function

Prototype:

int person_pose_init(const char *c, person_pose_context_t *p_person_pose, int cls_num);

| Item | Description |
|---|---|
| Function | person_pose_init() |
| Input | c: model path (p_model_path in the example) |
| Input | p_person_pose: algorithm handle |
| Input | cls_num: number of classes |
| Return | 0 on success |
| Return | -1 on failure |
| Notes | None |

Run Function

Prototype:

std::vector person_pose_run(cv::Mat image, person_pose_context_t *p_person_pose, float nms_threshold, float conf_threshold);

| Item | Description |
|---|---|
| Function | person_pose_run() |
| Input | image: input image data (cv::Mat from OpenCV) |
| Input | p_person_pose: algorithm handle |
| Input | nms_threshold: NMS threshold |
| Input | conf_threshold: confidence threshold |
| Return | std::vector: person pose detection results |
| Notes | None |
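The document does not describe how person_pose_run() applies its two thresholds internally. In detectors of this kind, a confidence threshold usually discards low-scoring candidates, and an NMS (non-maximum suppression) threshold bounds how much two surviving boxes may overlap, measured as intersection over union (IoU). The sketch below illustrates that generic technique only. `Box`, `iou`, and `filter_detections` are hypothetical names and are not part of the EASY-EAI API.

```cpp
#include <algorithm>
#include <vector>

// Hypothetical box type for illustration; not the library's result type.
struct Box {
    float left, top, right, bottom;
    float score;
};

// Intersection over union of two axis-aligned boxes.
float iou(const Box &a, const Box &b) {
    const float iw = std::max(0.0f, std::min(a.right, b.right) - std::max(a.left, b.left));
    const float ih = std::max(0.0f, std::min(a.bottom, b.bottom) - std::max(a.top, b.top));
    const float inter = iw * ih;
    const float area_a = (a.right - a.left) * (a.bottom - a.top);
    const float area_b = (b.right - b.left) * (b.bottom - b.top);
    const float uni = area_a + area_b - inter;
    return uni > 0.0f ? inter / uni : 0.0f;
}

// Greedy NMS: drop boxes scoring below conf_threshold, then keep boxes in
// descending score order, skipping any box whose IoU with an already kept
// box exceeds nms_threshold.
std::vector<Box> filter_detections(std::vector<Box> boxes,
                                   float nms_threshold, float conf_threshold) {
    boxes.erase(std::remove_if(boxes.begin(), boxes.end(),
                               [conf_threshold](const Box &b) {
                                   return b.score < conf_threshold;
                               }),
                boxes.end());
    std::sort(boxes.begin(), boxes.end(),
              [](const Box &a, const Box &b) { return a.score > b.score; });

    std::vector<Box> kept;
    for (const Box &candidate : boxes) {
        bool suppressed = false;
        for (const Box &k : kept) {
            if (iou(candidate, k) > nms_threshold) {
                suppressed = true;
                break;
            }
        }
        if (!suppressed) {
            kept.push_back(candidate);
        }
    }
    return kept;
}
```

Under this scheme, lowering nms_threshold merges more overlapping detections of the same person, while raising conf_threshold trades recall for fewer false positives.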

Release Function

Prototype:

int person_pose_release(person_pose_context_t *p_person_pose);

| Item | Description |
|---|---|
| Function | person_pose_release() |
| Input | p_person_pose: algorithm handle |
| Return | 0 on success |
| Return | -1 on failure |
| Notes | None |
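Because every successful person_pose_init() should be paired with a person_pose_release(), including on early-return paths, a small scope guard can make the pairing automatic. The following is a sketch of that common C++ idiom, not something the library provides; `ScopeGuard` is a hypothetical helper, and the commented usage assumes the init and release functions behave as documented above.

```cpp
#include <utility>

// Runs a cleanup callable exactly once when the guard leaves scope.
template <typename Cleanup>
class ScopeGuard {
public:
    explicit ScopeGuard(Cleanup cleanup) : cleanup_(std::move(cleanup)) {}
    ~ScopeGuard() { cleanup_(); }

    // Non-copyable so the cleanup cannot run twice.
    ScopeGuard(const ScopeGuard &) = delete;
    ScopeGuard &operator=(const ScopeGuard &) = delete;

private:
    Cleanup cleanup_;
};

// Usage sketch (needs person_pose.h, so it is shown as a comment):
//
//   person_pose_context_t ctx;
//   memset(&ctx, 0, sizeof(ctx));
//   if (person_pose_init(model_path, &ctx, 1) != 0) return -1;
//   auto release = [&ctx] { person_pose_release(&ctx); };
//   ScopeGuard<decltype(release)> guard(release);
//   // ... call person_pose_run(image, &ctx, 0.35f, 0.35f) as needed ...
//   // person_pose_release() runs automatically when guard leaves scope.
```

The guard is created only after initialization succeeds, so a failed init never triggers a release.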

Example Routine

The example source file is _Demos/algorithm-person_pose/test-person_pose.cpp_. The workflow is shown below.

Reference example:

#include <opencv2/opencv.hpp>
#include <cstdio>
#include <cstring>
#include <vector>
#include "person_pose.h"
#include <iostream>

// Draw a line between two keypoints when both scores exceed 0.1.
// Each keypoint is read as {x, y, score}.
cv::Mat draw_line(cv::Mat image, const float *key1, const float *key2, cv::Scalar color) {
    if (key1[2] > 0.1 && key2[2] > 0.1) {
        cv::Point pt1(key1[0], key1[1]);
        cv::Point pt2(key2[0], key2[1]);
        cv::circle(image, pt1, 2, color, 2);
        cv::circle(image, pt2, 2, color, 2);
        cv::line(image, pt1, pt2, color, 2);
    }
    return image;
}

// Draw results:
// 0 Nose, 1 Left eye, 2 Right eye, 3 Left ear, 4 Right ear, 5 Left shoulder, 6 Right shoulder, 7 Left elbow, 8 Right elbow, 9 Left wrist, 10 Right wrist, 11 Left hip, 12 Right hip, 13 Left knee, 14 Right knee, 15 Left ankle, 16 Right ankle
// ResultT is the element type of the vector returned by person_pose_run() (declared in person_pose.h).
template <typename ResultT>
cv::Mat draw_image(cv::Mat image, const std::vector<ResultT> &results) {
    for (size_t i = 0; i < results.size(); i++) {
        // Draw face
        image = draw_line(image, results[i].keypoints[0], results[i].keypoints[1], CV_RGB(0, 255, 0));
        image = draw_line(image, results[i].keypoints[0], results[i].keypoints[2], CV_RGB(0, 255, 0));
        image = draw_line(image, results[i].keypoints[1], results[i].keypoints[3], CV_RGB(0, 255, 0));
        image = draw_line(image, results[i].keypoints[2], results[i].keypoints[4], CV_RGB(0, 255, 0));
        image = draw_line(image, results[i].keypoints[3], results[i].keypoints[5], CV_RGB(0, 255, 0));
        image = draw_line(image, results[i].keypoints[4], results[i].keypoints[6], CV_RGB(0, 255, 0));

        // Draw upper body
        image = draw_line(image, results[i].keypoints[5], results[i].keypoints[6], CV_RGB(0, 0, 255));
        image = draw_line(image, results[i].keypoints[5], results[i].keypoints[7], CV_RGB(0, 0, 255));
        image = draw_line(image, results[i].keypoints[7], results[i].keypoints[9], CV_RGB(0, 0, 255));
        image = draw_line(image, results[i].keypoints[6], results[i].keypoints[8], CV_RGB(0, 0, 255));
        image = draw_line(image, results[i].keypoints[8], results[i].keypoints[10], CV_RGB(0, 0, 255));
        image = draw_line(image, results[i].keypoints[5], results[i].keypoints[11], CV_RGB(0, 0, 255));
        image = draw_line(image, results[i].keypoints[6], results[i].keypoints[12], CV_RGB(0, 0, 255));
        image = draw_line(image, results[i].keypoints[11], results[i].keypoints[12], CV_RGB(0, 0, 255));

        // Draw lower body
        image = draw_line(image, results[i].keypoints[11], results[i].keypoints[13], CV_RGB(255, 255, 0));
        image = draw_line(image, results[i].keypoints[13], results[i].keypoints[15], CV_RGB(255, 255, 0));
        image = draw_line(image, results[i].keypoints[12], results[i].keypoints[14], CV_RGB(255, 255, 0));
        image = draw_line(image, results[i].keypoints[14], results[i].keypoints[16], CV_RGB(255, 255, 0));

        // Draw the person's bounding box
        cv::Rect rect(results[i].left, results[i].top, (results[i].right - results[i].left), (results[i].bottom - results[i].top));
        cv::rectangle(image, rect, CV_RGB(255, 0, 0), 2);
    }
    return image;
}

// Main function
int main(int argc, char **argv) {
    if (argc != 3) {
        printf("Usage: %s <model_path> <image_path>\n", argv[0]);
        return -1;
    }

    const char *p_model_path = argv[1];
    const char *p_img_path = argv[2];
    printf("Model path = %s, image path = %s\n\n", p_model_path, p_img_path);

    cv::Mat image = cv::imread(p_img_path);
    if (image.empty()) {
        printf("Failed to read image: %s\n", p_img_path);
        return -1;
    }
    printf("Image size = (%d, %d)\n", image.rows, image.cols);

    int ret;
    person_pose_context_t yolo11_pose;
    memset(&yolo11_pose, 0, sizeof(yolo11_pose));

    // Initialize the algorithm handle; stop if the model cannot be loaded
    ret = person_pose_init(p_model_path, &yolo11_pose, 1);
    if (ret != 0) {
        printf("person_pose_init failed\n");
        return ret;
    }

    // Time a single inference call
    double start_time = static_cast<double>(cv::getTickCount());
    auto results = person_pose_run(image, &yolo11_pose, 0.35, 0.35);
    double end_time = static_cast<double>(cv::getTickCount());
    double time_elapsed = (end_time - start_time) / cv::getTickFrequency() * 1000;
    std::cout << "person pose run time: " << time_elapsed << " ms" << std::endl;

    // Draw results
    image = draw_image(image, results);
    cv::imwrite("result.jpg", image);
    printf("Detect size = %zu\n", results.size());

    ret = person_pose_release(&yolo11_pose);
    return ret;
}

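For applications such as sports analysis, the detected keypoints can be turned into joint angles. The sketch below computes the angle at a middle joint from three keypoints, assuming each keypoint is laid out as {x, y, score}, which is how draw_line() in the example reads it. `joint_angle_deg` and its `min_score` parameter are hypothetical and are not part of the EASY-EAI API; the 0.1 default mirrors the cutoff used in draw_line().

```cpp
#include <cmath>

// Angle in degrees at vertex b formed by the segments b->a and b->c.
// Each keypoint is assumed to be {x, y, score}.
// Returns -1 when any keypoint scores at or below min_score, or when two
// of the points coincide and the angle is undefined.
float joint_angle_deg(const float *a, const float *b, const float *c,
                      float min_score = 0.1f) {
    if (a[2] <= min_score || b[2] <= min_score || c[2] <= min_score) {
        return -1.0f;
    }
    const float v1x = a[0] - b[0];
    const float v1y = a[1] - b[1];
    const float v2x = c[0] - b[0];
    const float v2y = c[1] - b[1];
    const float n1 = std::hypot(v1x, v1y);
    const float n2 = std::hypot(v2x, v2y);
    if (n1 == 0.0f || n2 == 0.0f) {
        return -1.0f;
    }
    // Clamp to [-1, 1] so rounding error cannot push acos out of its domain.
    float cosine = (v1x * v2x + v1y * v2y) / (n1 * n2);
    cosine = std::fmax(-1.0f, std::fmin(1.0f, cosine));
    return std::acos(cosine) * 180.0f / 3.14159265358979f;
}
```

For instance, with the index mapping in this document, `joint_angle_deg(r.keypoints[5], r.keypoints[7], r.keypoints[9])` would give the left elbow angle for one result `r`. The value is an angle in the 2D image plane, so it changes with camera viewpoint.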