Introduction

The spread of ubiquitous cameras and facial recognition has given rise to several common misconceptions and carries real risks. Professor Lao Dongyan of Tsinghua University School of Law analyzes these issues below.

1. It’s more than simple identification

Cameras are becoming pervasive in everyday environments. Many people assume these cameras merely record public movements or deter traffic violations and therefore pay little attention to them. In fact, camera proliferation is often paired with facial recognition systems to enable far more invasive capabilities.

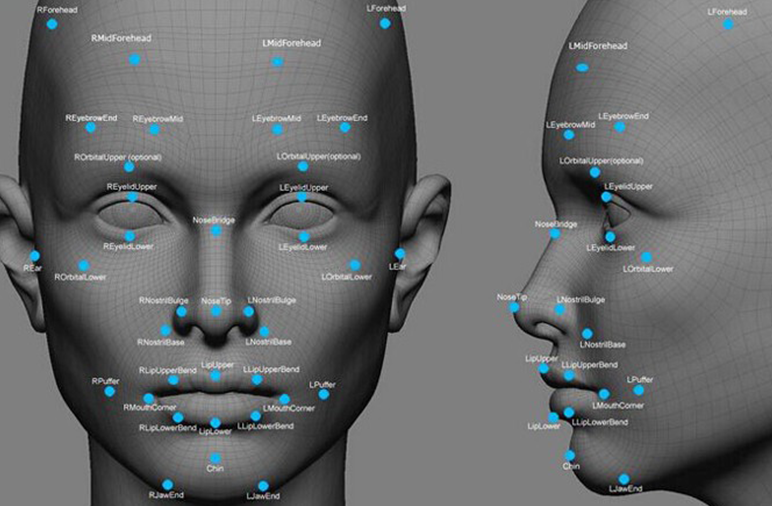

In the past year, technical breakthroughs have significantly increased recognition accuracy, and application scenarios have grown rapidly. Many people calmly or even cheerfully accept these deployments, treating facial recognition as a benign expansion of new technology. Some assume cosmetic surgery could defeat recognition, but facial biometric features such as interpupillary distance stay stable after surgery, so the technology still works.

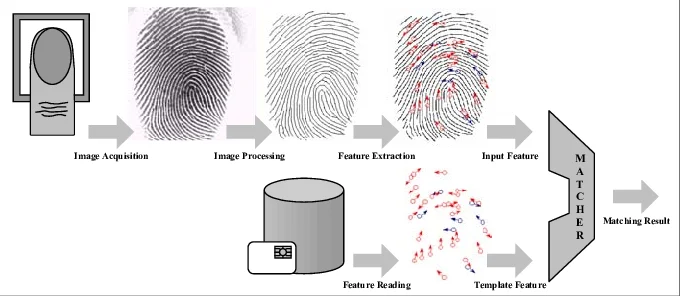

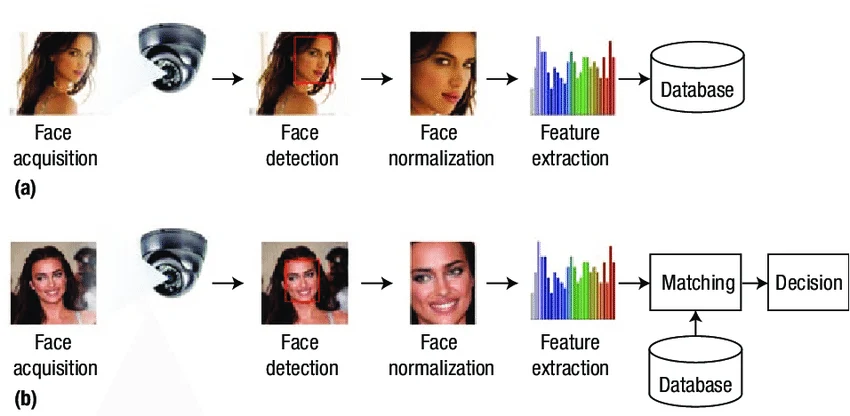

Viewing facial recognition as merely identification or verification is a major misunderstanding. Beyond capturing facial biometrics and comparing them with an existing database, the technology can map identities to daily movement trajectories, match people to vehicles, infer kinship, and identify frequent contacts. These capabilities arise from combining recognition with pervasive cameras, and whether they are used depends entirely on the controller’s intentions.

Modern facial recognition can infer gender, estimate age, and detect facial expressions. Because the technology is built on artificial intelligence and deep learning, further advances will increase the amount of private information that can be derived through analysis, enough to build a detailed profile of any individual.

If an omniscient and benevolent entity collected, stored, and used these data, there would be less concern. The problem is that such a presumption is unrealistic.

Reassurances like "if you have nothing to hide, you have nothing to fear" are naive. Historical cases of wrongful conviction and corruption show that abuse and concealment can and do occur. Widespread public acceptance of facial recognition is therefore often either wishful thinking or a selective underestimation of risks. In short, people make biased judgments under information asymmetry, which helps explain why facial recognition has not become the subject of sustained public debate.

2. Problems at collection, storage, and use

Current facial recognition systems present significant issues across collection, storage, and use. These issues affect citizens' fundamental rights and go well beyond modest privacy sacrifices.

At the collection stage, facial recognition is contactless and can operate at a distance and at scale, capturing and recording facial data without individuals noticing, which makes it highly intrusive. China Newsweek reported an expert estimate that people in China are captured by cameras more than 500 times a day. Once facial recognition is deployed, there is effectively always an eye watching, and people become essentially transparent.

Facial biometric data are personal information. Under current law, many collection practices likely violate legal requirements because consent was not obtained. Criminal law prohibits obtaining personal information without consent, as well as selling or providing lawfully obtained personal information to third parties; such acts may constitute the offense of infringing citizens' personal information.

Some deployments do obtain apparent consent, but because notice about the collector, data scope, purposes, protections, and risks is inadequate, such de facto acceptance often does not constitute valid legal consent.

At the storage stage, failure to protect collected data can cause large-scale leaks. Even with reasonable protections, data face the risk of hacker intrusion. Because biometric traits are immutable, a leak creates irreversible risk and harm that cannot be easily remedied.

This year there have been multiple reports of facial data leaks. In one case, a China-based company that provided face detection and crowd analysis was found to have left its facial recognition database without password protection, exposing 2.56 million user records. In another, media recently found a marketplace selling 170,000 facial records. We do not know who will use leaked facial data, in what contexts, or whether it will lead to property loss or other rights violations. What is clear is that facial data leaks pose greater security risks than leaks of mobile phone numbers or account credentials.

An AI face-swap app released this year can replace a person’s face in a video using a single frontal photo. Face-swapping makes fraud and social disruption easier. Given the rise of telecom fraud, large-scale facial data leaks could cause significant societal panic and substantial individual losses.

Facial recognition systems also have technical vulnerabilities. A report in China Newsweek described a security competition where a young graduate from Zhejiang University bypassed a facial recognition access control system in two and a half minutes and replaced stored faces on the device.

Deployment of facial recognition has grown explosively, yet there are no mandatory standards governing how the related data must be protected; practices depend entirely on the collectors. The result is an unpredictable risk landscape, a powder keg that needs only a spark to set off a major social incident.

At the usage stage, unrestricted expansion of application scenarios will inevitably lead to misuse and discrimination.

Beyond security, access control, payment, and authentication, facial recognition is widely used for retail footfall analytics, community management, pension collection, tax verification, item storage, attraction entry and ticketing, and even classroom monitoring to supervise student behavior. It has also begun appearing in subway security checks. These applications indicate a tendency to overuse facial recognition, enabling mass manipulation and pervasive surveillance. The currently visible abuses are likely only the tip of the iceberg.

Facial recognition can also fuel discrimination. Studies have reported that facial images can be used to predict sexual orientation with fairly high accuracy, which makes LGBTQ+ individuals vulnerable to discrimination. Discrimination can also arise along lines of race, gender, ethnicity, region, and occupation.

3. Drivers: power and capital

Technical progress alone does not fully explain the rapid deployment of facial recognition. Two major forces drive its expansion: state power and capital.

From the power perspective, governments have found a convenient technical tool to implement extensive security measures. From the capital perspective, companies developing and promoting these systems aggressively expand their businesses to increase market valuation and profits.

Cooperation between state power and private capital has enabled the rapid territorial expansion of facial recognition. Because current laws do not comprehensively regulate how data may be collected, stored, transmitted, used, processed, shared with third parties, or published online, application scenarios can grow unchecked, multiplying potential risks. The consequences are not merely alarming; they are difficult even to imagine in full.

Two questions can be posed to this alliance. First, is catching criminals truly the foremost task of society? Second, does capital's pursuit of profit not carry basic social responsibility?

Many assume facial recognition merely requires small privacy concessions in exchange for efficiency and security. But if one recognizes the technology’s nature and the many problems across collection, protection, and use, this trade looks like a pact with the devil. Individuals would not only surrender modest privacy, but potentially most or all privacy, without gaining the imagined security.

Yes, facial recognition in security contexts may apprehend a few more criminals. But can such marginal gains truly justify the irreversible risks of biometric leakage and abuse?

Consider the actual probability that ordinary people suffer criminal harm in daily life. Before facial recognition was deployed, were citizens constantly threatened by crime? It is inconsistent to proclaim that society is highly safe while also arguing that ordinary citizens constantly face criminal threats and therefore require pervasive surveillance.

Such careless risk assessments suggest that public perception may be shaped by narratives from power and capital. If these actors jointly entice citizens to trade large privacy costs for small conveniences, and citizens in turn defend those actors, the result is both misguided and dangerous.

4. Responses to common objections

It is worth addressing three common objections.

Objection 1: If fingerprint recognition is acceptable, why not facial recognition?

This argument lacks basic logic. Four rebuttals are relevant. First, public authorities collecting fingerprints for identity documents may have statutory authorization under specific identity laws; facial data collection generally lacks equivalent legal authorization. Worldwide, mandatory biometric collection of citizens is uncommon and often prohibited in the name of personal autonomy or constitutional protections.

Second, fingerprint collection is less intrusive than facial recognition: fingerprints are not visually observable and have weaker links to movement trajectories or other behavioral data, so monitoring potential is lower. Facial recognition combined with pervasive cameras creates much greater surveillance and higher risks of leakage and misuse.

Third, fingerprint applications are typically limited and used in contexts like personal devices or internal systems where users are aware; facial recognition is often used without individuals’ knowledge and can occur hundreds of times daily. How many people would accept being fingerprinted hundreds of times per day?

Fourth, extrapolating from fingerprint authorization to facial data ignores legal authorization requirements. If one accepts that prior fingerprint collection permits further biometric collection, what stops the next step of collecting posture, gait, or even genetic data? Legal authorization is required before such sensitive data may be collected.

Objection 2: If airports use facial recognition, why not subways or other public spaces?

This objection is misleading for three reasons. First, it rests on an unproven premise: facial recognition is not an essential element of airport security and has only recently been adopted in some airports. Effective airport security existed without facial recognition for many years in numerous countries.

Second, even if facial recognition is arguably useful at airports, that does not justify deploying it in all public places. Treating every context as if it requires the highest-level security measures reflects poor governance and causes unnecessary public inconvenience.

Third, even if passengers accept facial recognition for airport screening, that does not authorize reuse of their facial data outside the airport's security purpose. Treating consent given for airport security as blanket authorization for other uses would exceed the scope of that consent and constitute unlawful collection or use of biometric data.

Objection 3: Why not offer voluntary choice in subways?

At first glance, voluntary choice seems reasonable, but it is not feasible. Voluntariness requires clear and sufficient risk disclosure. If users were fully informed about facial recognition’s nature and the substantial risks in collection, storage, and use, many would likely decline efficiency gains in favor of privacy and security.

Additionally, the necessity of full-body or face screening in subways is questionable. Cities such as London, Paris, and Tokyo have experienced terrorism but have not adopted blanket person-level screening in subways after considered evaluation. The practical feasibility of offering two types of security channels in crowded Chinese subways is low; separate channels would increase security spending and divert public funds from other social needs.

Therefore, these three objections are either logically flawed, misleading, or practically infeasible.

5. Principles and regulatory recommendations

The author is neither a technological Luddite nor opposed to all deployment of facial recognition. However, without clear legal authorization, public authorities should not collect ordinary citizens' facial biometric data, because such collection lacks fundamental legitimacy.

Commercial use of facial recognition, given its collection of personal information, must strictly comply with personal data protection laws. Data collection and storage must be standardized, and application scenarios must be tightly restricted.

Commercial deployments should meet at least four requirements:

1. Collectors must provide clear and sufficient notice of relevant information and risks, and obtain prior informed consent from data subjects. Without explicit consent, collectors must not provide personal data to third parties (including government agencies) or allow third-party use. Exceptions for criminal investigation or national security are possible but must be narrowly defined with strict procedures.

2. Collection procedures must be transparent, and the data scope must be limited to what is necessary for the stated purpose. Collectors must not collect facial data beyond the reasonable needs of the application scenario.

3. Collectors must fulfill data custody obligations. They should take reasonable measures to secure collected data; failure to do so should incur legal liability. If a data subject withdraws consent or requests deletion, collectors must delete the data.

4. Application scenarios must be lawful and proportionate and avoid overly intrusive measures. Data collected for a specific scenario should not be repurposed for other scenarios unless such reuse is reasonably foreseeable. Unauthorized expansion or change of use should trigger legal liability.

Facial biometric data are more sensitive than typical personal information. Consent-based legal protections are increasingly inadequate to address challenges posed by technological advances. Legal research and targeted regulatory development are necessary to identify effective and proportionate safeguards.

Biometric information, including faces, genes, fingerprints, iris patterns, and gait, is immutable. Once leaked, consequences are dire and difficult to remedy; legal remedies alone cannot fully restore pre-leak conditions.

Unless collection, storage, and use are strictly regulated by law, indiscriminate promotion of facial recognition opens a Pandora’s box. The cost is not only privacy but also the fragile security people expect.

Public debate on facial recognition should not be framed as a binary trade-off between privacy and security. The technology threatens both privacy and security. Without privacy there is no freedom; prolonged erosion could lead to a society with neither freedom nor security. Faced with that prospect, would citizens still accept short-term conveniences that enable power and capital to broadly deploy facial recognition?

Rational choices about facial recognition require clearing the fog of promised security and efficiency and resisting capture by power and capital.

ALLPCB